A model listed as Gemini 3.1 Flash Image appeared in Google's Vertex AI catalog this week, and early test images started circulating on X almost immediately. The model, widely referred to as Nano Banana 2, is built on Google's Flash architecture rather than the Pro tier that powers the current Nano Banana Pro (Gemini 3 Pro Image). That distinction matters: Flash models are designed for speed and cost, not quality leadership. And yet the leaked samples look suspiciously close to Pro output.

The leaks originated from @MarsForTech on X, who posted 4K test renders on February 24. One widely shared example shows the word "SUCCESS" rendered on a glass table with a mirrored reflection, the kind of physics-and-text combo that trips up most image generators. The reflection is correct. The text reversal is accurate. But the glass surface itself has visible banding artifacts, horizontal stripes that break the illusion at full resolution.

Flash pretending to be Pro

Here's what makes this interesting, and also what makes me skeptical. The original Nano Banana (based on Gemini 2.5 Flash Image) was a solid workhorse model that traded quality for speed. Nano Banana Pro, powered by Gemini 3 Pro, was the quality leap: native 2K output, 4K upscaling, and a multi-step rendering pipeline Google internally called GemPix 2. The gap between those two was visible and expected.

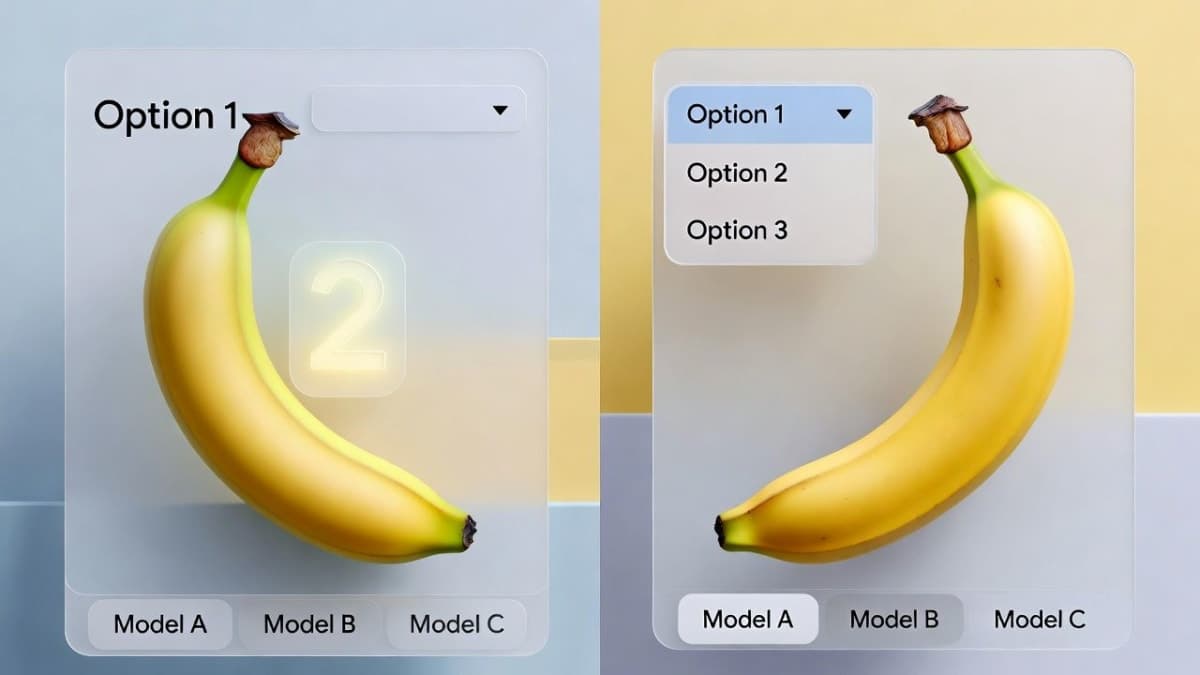

Nano Banana 2 is supposed to be the next Flash model. According to German tech outlet All-AI, early side-by-side comparisons with Nano Banana Pro show quality differences that are barely perceptible to the human eye. If true, that's a pricing story more than a quality story. Flash-tier API costs are substantially lower than Pro. Getting Pro-level output at Flash prices would matter for anyone running high-volume image pipelines, ad creative workflows, or feeding frames into video generation tools like Kling 3.0.

But "barely perceptible" from leaked screenshots on X is doing a lot of heavy lifting. We don't know the prompts used for comparison. We don't know whether the Pro samples were picked to look worse or the Flash samples cherry-picked to look better. I'd want to see a proper blind evaluation before declaring price-quality parity.
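
For readers who want to run that kind of check themselves, here is a minimal sketch of a blind pairwise comparison, assuming you already have one Flash image and one Pro image per prompt. The function names, the "flash"/"pro" labels, and the choice of a two-sided sign test are illustrative assumptions, not anything taken from Google's or LMArena's tooling.

```python
import math
import random


def blind_pairs(prompt_ids, seed=0):
    """Randomly assign each model's image to the left or right slot per prompt,
    so raters cannot infer the model from its position."""
    rng = random.Random(seed)
    layout = {}
    for pid in prompt_ids:
        sides = ["flash", "pro"]
        rng.shuffle(sides)
        layout[pid] = {"left": sides[0], "right": sides[1]}
    return layout


def tally(layout, picks):
    """Map rater picks ('left', 'right', or 'tie') back to model names.
    Ties are dropped from the counts."""
    counts = {"flash": 0, "pro": 0}
    for pid, side in picks.items():
        if side in ("left", "right"):
            counts[layout[pid][side]] += 1
    return counts


def sign_test_p_value(wins_a, wins_b):
    """Two-sided exact binomial test of the votes against a 50/50 split."""
    n = wins_a + wins_b
    if n == 0:
        return 1.0
    k = min(wins_a, wins_b)
    tail = sum(math.comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


if __name__ == "__main__":
    layout = blind_pairs(range(20), seed=1)
    # Hypothetical rater who always picks the left image.
    picks = {pid: "left" for pid in layout}
    counts = tally(layout, picks)
    print(counts, sign_test_p_value(counts["flash"], counts["pro"]))
```

With 10 decisive votes split 10 to 0, the p-value is about 0.002. A 5 to 5 split gives 1.0, which means the votes show no detectable preference; it does not prove parity, and a small sample can easily hide a real difference.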

The arena question

A model called "anon-bob-2" showed up on LMArena's Image Arena in battle mode around the same time. Users on X, including @chetaslua, identified it as Nano Banana 2 after running test prompts. One user reported accurate reflections across differently colored apples with correct text reversal. Impressive if you care about physics simulation in image gen. And most people testing these models do.

A Chinese-language forum post on LINUX DO offered a more cautious take. After extensive testing, the poster identified anon-bob-2 as Nano Banana 2 (the 3.1 Flash Image model), but noted that its reasoning and generalization capabilities were "significantly weakened" compared to the Pro version. Basic lateral-thinking visual puzzles stumped it. Comic creation prompts produced notably worse results than the Pro model or even earlier checkpoints. The core image generation quality held up, but the model's ability to think through complex compositions took a hit.

That's the tradeoff you'd expect from a Flash model, honestly. Strip out reasoning depth to gain speed. The question is whether the quality ceiling for straightforward prompts stays high enough to justify switching from Pro for most use cases.

What the glass table actually tells us

The "SUCCESS" reflection test is making the rounds as proof of Nano Banana 2's physics understanding. And yes, getting text reflection right on a glossy surface is hard. But the banding artifacts on the glass itself suggest the model is pattern-matching reflections rather than truly simulating them. A real glass table under studio lighting doesn't produce uniform horizontal stripes. That's a rendering shortcut.
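
Banding of this kind can be checked numerically instead of by eye. The sketch below is a crude heuristic of my own, not a method used by anyone cited here: it measures how much of an image region's brightness variance is explained by row-to-row differences alone. It assumes `gray` is a list of equal-length rows of grayscale values that you have already cropped from the glass surface; loading the image is left out.

```python
from statistics import mean, pvariance


def row_banding_score(gray):
    """Fraction of total pixel variance explained by per-row means.

    Returns a value between 0 and 1. Values near 1 mean brightness changes
    almost only from row to row, which is what horizontal stripes look like.
    Assumes all rows have the same width.
    """
    row_means = [mean(row) for row in gray]
    all_pixels = [p for row in gray for p in row]
    total = pvariance(all_pixels)
    if total == 0:
        return 0.0  # A perfectly flat region has no stripes.
    return pvariance(row_means) / total


if __name__ == "__main__":
    # Synthetic region: 4-pixel-tall horizontal bands alternating 100 and 120.
    stripes = [[100 if (y // 4) % 2 == 0 else 120] * 16 for y in range(32)]
    print(row_banding_score(stripes))
```

Pure horizontal stripes score 1.0 and pure vertical stripes score 0.0. A smooth top-to-bottom lighting gradient would also score high, so a high value flags a region worth inspecting at full resolution and is not proof of banding on its own.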

The current Nano Banana Pro handles this same test reasonably well, according to the same leak coverage. So the test shows only that Nano Banana 2 hasn't regressed on a task the Pro model already handles. That's not nothing, but it's not a generational leap either.

Midjourney V8 looming in the background

Google isn't operating in a vacuum here. Midjourney launched its final V8 rating party on February 20, which typically signals a public release within days. Founder David Holz has said V8 would ship before the end of February. The model runs on a completely rewritten codebase, supports native 2K resolution (up to 2048x2048 without upscaling tricks), and claims improved text rendering, which has been V7's weakest area.

But Midjourney and Nano Banana are solving different problems at this point. Midjourney produces gorgeous renders. Nobody disputes that. What it lacks is the LLM backbone that makes Gemini-based image models useful for editing, instruction following, and multi-image composition. You can't upload a photo to Midjourney and say "change the car color to blue while keeping the reflections consistent." Nano Banana Pro can do that. Midjourney V8 is shipping as a minimum viable product, with an Edit model expected sometime after launch. Until that lands, the comparison is aesthetic quality versus functional versatility, and those serve different audiences.

Google hasn't officially confirmed Nano Banana 2 or announced a release date. The Vertex AI catalog listing suggests it's close, and the model's appearance on LMArena's Image Arena implies Google is already gathering preference data. An official announcement could come within days.