Moonshot AI researchers have a new paper on arXiv arguing that the prefill stage of LLM inference belongs in a different datacenter from decode. The technical report, posted April 16 by a team including Mooncake lead author Ruoyu Qin, claims a heterogeneous cross-cluster deployment can hit 54% higher throughput than a conventional prefill-decode disaggregated setup. It's a real result. The caveat buried inside it is bigger than the abstract lets on.

The number the paper almost hides

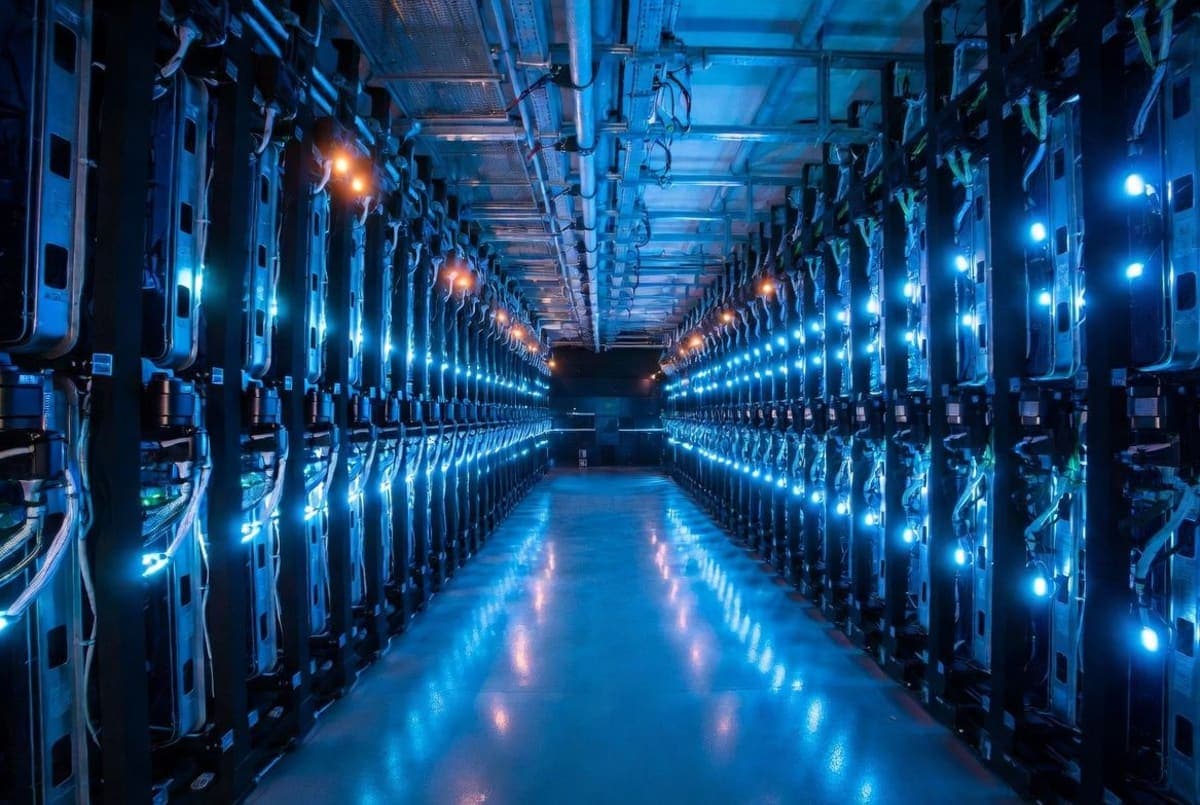

Here's the headline. On an internal 1T-parameter hybrid model, a PrfaaS deployment using 32 H200 GPUs for prefill plus 64 H20 GPUs for decode beat a homogeneous 96-H20 baseline with 54% higher throughput and 64% lower P90 time-to-first-token. Impressive numbers. But deep in section 4.4, after the results have been celebrated for several pages, there's this sentence: "at equal cost, the throughput gain is approximately 15%."

Fifteen percent isn't nothing. But it's a long way from fifty-four. The difference comes from hardware cost: the PrfaaS config uses pricier H200s for prefill, while the baseline uses 96 cheaper H20s. When you normalize for dollars instead of chip count, most of the headline gain collapses. The paper doesn't show the math for the 15% number. I'd want to see it before quoting the larger figure without qualification.
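Since the paper doesn't show its work, here is a back-of-envelope sketch of what the two figures would imply together. It assumes "equal cost" means throughput per dollar, with fleet cost linear in GPU count; the paper may define it differently. The function name and the resulting price ratio are my own, not numbers from the paper.

```python
def implied_price_ratio(raw_gain, equal_cost_gain,
                        n_premium, n_base_mixed, n_base_only):
    """Price ratio r = (premium GPU price / base GPU price) that reconciles
    a raw throughput gain with a smaller equal-cost gain.

    ASSUMPTION (not from the paper): "equal cost" means throughput per
    dollar, and fleet cost is linear in GPU count.
    """
    # (1 + raw_gain) / cost_ratio = 1 + equal_cost_gain
    cost_ratio = (1 + raw_gain) / (1 + equal_cost_gain)
    # cost_ratio = (n_premium * r + n_base_mixed) / n_base_only, solved for r
    return (cost_ratio * n_base_only - n_base_mixed) / n_premium


# 32 H200 + 64 H20 versus 96 H20, with the 54% and 15% figures quoted above.
r = implied_price_ratio(0.54, 0.15, n_premium=32, n_base_mixed=64, n_base_only=96)
print(f"implied H200/H20 price ratio: {r:.2f}")
```

Under those assumptions, the two figures are consistent with an H200 costing roughly twice as much as an H20. That is an inference from the quoted percentages, not a price the authors state.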

Why hybrid attention changed the problem

The more interesting claim is architectural, not economic. Conventional prefill-decode disaggregation, like the Mooncake architecture Moonshot published in 2024, works inside a single cluster because the KV cache has to traverse RDMA fabric to avoid stalling decode. Dense attention models make that a hard constraint. The paper benchmarks MiniMax-M2.5 producing KV cache at roughly 60 Gbps per instance at 32K tokens, well beyond what cross-datacenter Ethernet can carry for a single machine.

Enter hybrid attention. Architectures like Kimi Linear, released by Moonshot in late October 2025, interleave a small number of full-attention layers with a larger number of linear-complexity ones (Kimi Linear uses three linear layers for every full-attention layer). The linear layers keep a fixed-size state, so they don't emit cache that grows with sequence length. Table 3 of the paper shows MiMo-V2-Flash producing 4.66 Gbps of KV traffic at 32K tokens versus 59.93 Gbps for MiniMax-M2.5, roughly a 13x reduction. Suddenly shipping KV cache over commodity Ethernet stops being absurd.
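To make the bandwidth gap concrete, here is a quick calculation using the two Table 3 figures and the 100 Gbps cross-cluster link from the paper's case study. It is a rough sketch: it ignores protocol overhead and burstiness, and the variable names are mine.

```python
LINK_GBPS = 100.0  # cross-cluster link speed in the paper's case study

# Per-instance KV cache traffic at 32K tokens, as quoted from Table 3.
kv_gbps = {
    "MiniMax-M2.5": 59.93,   # dense attention
    "MiMo-V2-Flash": 4.66,   # hybrid attention
}

reduction = kv_gbps["MiniMax-M2.5"] / kv_gbps["MiMo-V2-Flash"]

# How many prefill instances could share the link at full rate,
# assuming zero overhead (an idealization, not a claim from the paper).
instances_per_link = {name: int(LINK_GBPS // rate) for name, rate in kv_gbps.items()}

print(f"reduction: {reduction:.1f}x")
print(instances_per_link)
```

On these numbers a single dense-attention instance would consume most of the link by itself, while about twenty hybrid instances could share it.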

That's the real argument. The claim isn't that prefill should be remote, a case Splitwise and DistServe made years ago inside single clusters. The argument is that KV cache reductions in recent model architectures specifically let you cross datacenter boundaries on regular networks, which in turn lets you source compute-dense prefill hardware that doesn't happen to sit in your decode facility.

What's actually new here?

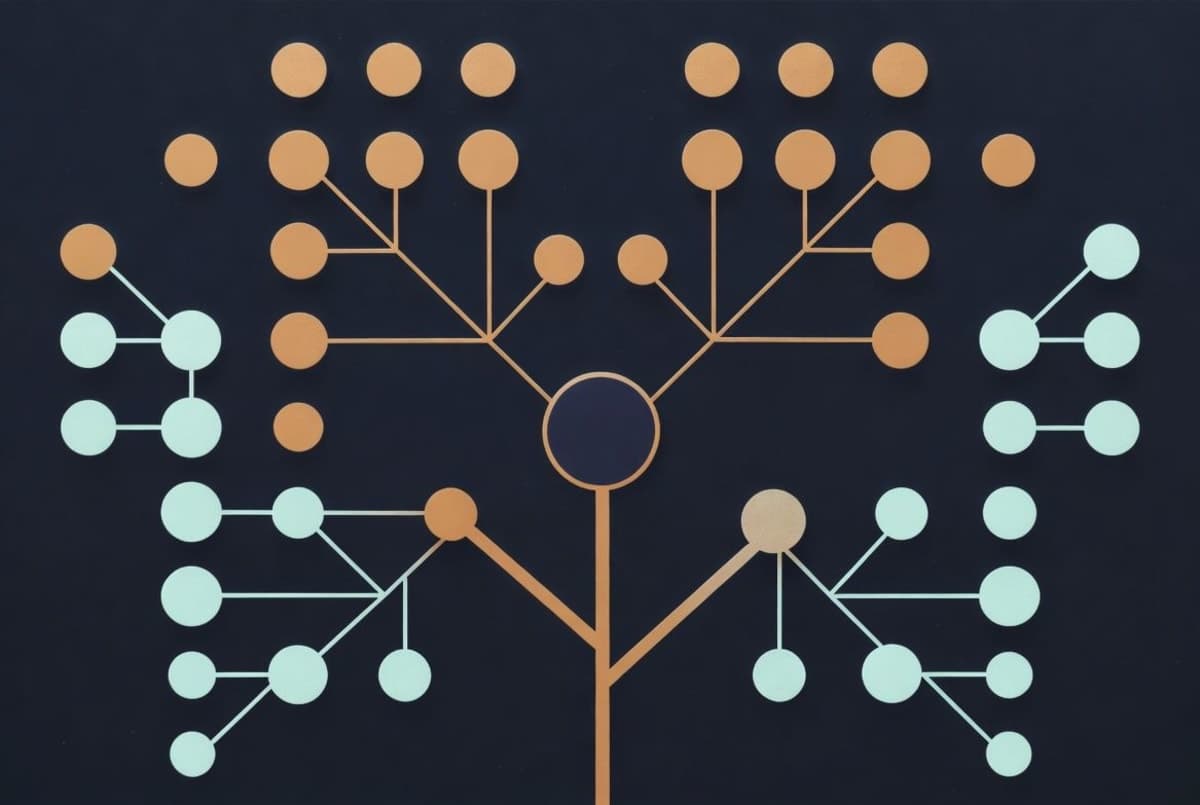

The scheduling work is the most detailed part of the paper. PrfaaS doesn't blindly externalize every prefill. It uses a length threshold (19.4K tokens in the case study) to decide which requests are worth the cross-cluster trip. Short requests stay local. Long ones get shipped. About half the requests in their workload cross the threshold, which lines up with a truncated log-normal input distribution averaging 27K tokens. Realistic? For their traffic, maybe. Whether that distribution matches your traffic is a different question.
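The routing rule described above is simple enough to sketch. This is a minimal illustration, not the paper's scheduler: the `Request` type, the function name, and the choice of a strict greater-than comparison are all hypothetical, and I'm reading "19.4K" as 19,400 tokens.

```python
from dataclasses import dataclass

# Case-study threshold; "19.4K" is read here as 19,400 tokens (assumption).
THRESHOLD_TOKENS = 19_400


@dataclass
class Request:
    """Hypothetical request record; not a type from the paper."""
    request_id: str
    prompt_tokens: int


def route_prefill(req: Request, threshold: int = THRESHOLD_TOKENS) -> str:
    """Return "remote" to ship prefill to the PrfaaS cluster, "local" otherwise.

    Whether the boundary case goes local or remote is an assumption.
    """
    return "remote" if req.prompt_tokens > threshold else "local"


requests = [Request("a", 2_000), Request("b", 19_400), Request("c", 64_000)]
decisions = {r.request_id: route_prefill(r) for r in requests}
print(decisions)
```

The paper's scheduler does more than this, but the sketch shows why the input length distribution matters so much: shift your traffic toward short prompts and almost nothing crosses the threshold.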

The cross-cluster link in the case study runs at 100 Gbps but carries only 13 Gbps on average. That's a lot of headroom, and the authors flag it: the cluster is compute-bound, not bandwidth-bound. At IDC (internet data center) scale with "thousands of PrfaaS GPUs," they estimate 1 Tbps of aggregate egress. Still within reach of modern datacenter fabrics, but no longer comfortable.

They don't publish wall-clock latency for the KV transfer itself. How much TTFT gets eaten by sending 700 MiB of cache across a VPC link before decode can start? The paper mentions "layer-wise prefill pipelining" to overlap generation with transmission, but the actual end-to-end cost isn't broken out. That's the number I want to see.
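Absent a published number, the best available is a lower bound. The sketch below computes pure serialization time for 700 MiB on a 100 Gbps link, then the exposed portion under idealized chunked pipelining. Everything here is an assumption on my part: no propagation delay, no protocol overhead, equal-sized chunks, per-chunk transfer never slower than per-chunk compute, and a hypothetical chunk count of 16. None of it is a measurement from the paper.

```python
MIB = 2**20  # bytes per MiB


def wire_time_ms(payload_mib: float, link_gbps: float) -> float:
    """Serialization time only: ignores propagation delay and protocol overhead."""
    bits = payload_mib * MIB * 8
    return bits / (link_gbps * 1e9) * 1e3


def exposed_time_ms(payload_mib: float, link_gbps: float, num_chunks: int) -> float:
    """Transfer time left on the critical path with ideal pipelining.

    ASSUMPTION: the cache is sent in num_chunks equal pieces, each piece is
    transmitted while the next one is being computed, and transfer per piece
    is never slower than compute per piece. Only the final piece is exposed.
    """
    return wire_time_ms(payload_mib / num_chunks, link_gbps)


full = wire_time_ms(700, 100)
overlapped = exposed_time_ms(700, 100, num_chunks=16)  # 16 is hypothetical
print(f"unpipelined lower bound: {full:.1f} ms")
print(f"ideal pipelined exposure: {overlapped:.1f} ms")
```

Even the unpipelined figure is tens of milliseconds at full line rate, which is small next to a long-context prefill. The real cost depends on the bandwidth a single transfer actually gets and on cross-datacenter round trips, which is exactly what the paper doesn't break out.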

The Rubin CPX framing

The paper leans heavily on the idea that hardware is already going phase-specialized. It cites NVIDIA's Rubin CPX, a GPU class built for compute-bound prefill, alongside Groq's LPUs as examples of chips designed for one phase or the other. The pitch writes itself: if you're going to buy specialized silicon anyway, why pretend it has to live in the same rack as the other specialized silicon?

Persuasive, but forward-looking. The case study uses H200s and H20s, both NVIDIA parts that already exist and can typically be found in the same facility. Whether operators actually end up with cleanly heterogeneous fleets that benefit from cross-datacenter routing remains speculative. That's not a criticism of the paper, which is explicit about the speculative framing. It's just the honest reading.

What to watch for

One case study. One internal model. One hardware pair.

The throughput model in section 3.4 is analytical, fed with profiling data rather than end-to-end measurements of a running production system. And the "naive heterogeneous PD" baseline, which PrfaaS beats by 32%, is a straw man by design: no scheduling, no length-based routing, no load balancing. Of course PrfaaS wins that race.

Still, the bandwidth math is the takeaway. If hybrid attention architectures continue eating market share from dense GQA models (the paper's Table 1 lists Kimi Linear, MiMo-V2-Flash, Qwen3.5, and Ring-2.5-1T as recent examples), the operational constraint that keeps prefill and decode locked to the same RDMA island gets weaker. Whether that leads to Prefill-as-a-Service specifically, or some other shape of disaggregation, is a separate question.

The model repos are public. Kimi Linear is on GitHub with vLLM integration. The PrfaaS scheduler code isn't mentioned as open source anywhere in the paper, which is worth noting if you're trying to reproduce these numbers.