Mustafa Suleyman, CEO of Microsoft AI, published an opinion piece in MIT Technology Review this week arguing that AI compute will continue its exponential growth for years to come. His central claim: another 1,000x increase in effective compute is plausible by the end of 2028.

That is a big number from a man whose employer is spending tens of billions on the infrastructure to make it happen. The piece reads less like analysis and more like a fundraising memo dressed up as futurism, but the underlying data is worth picking apart.

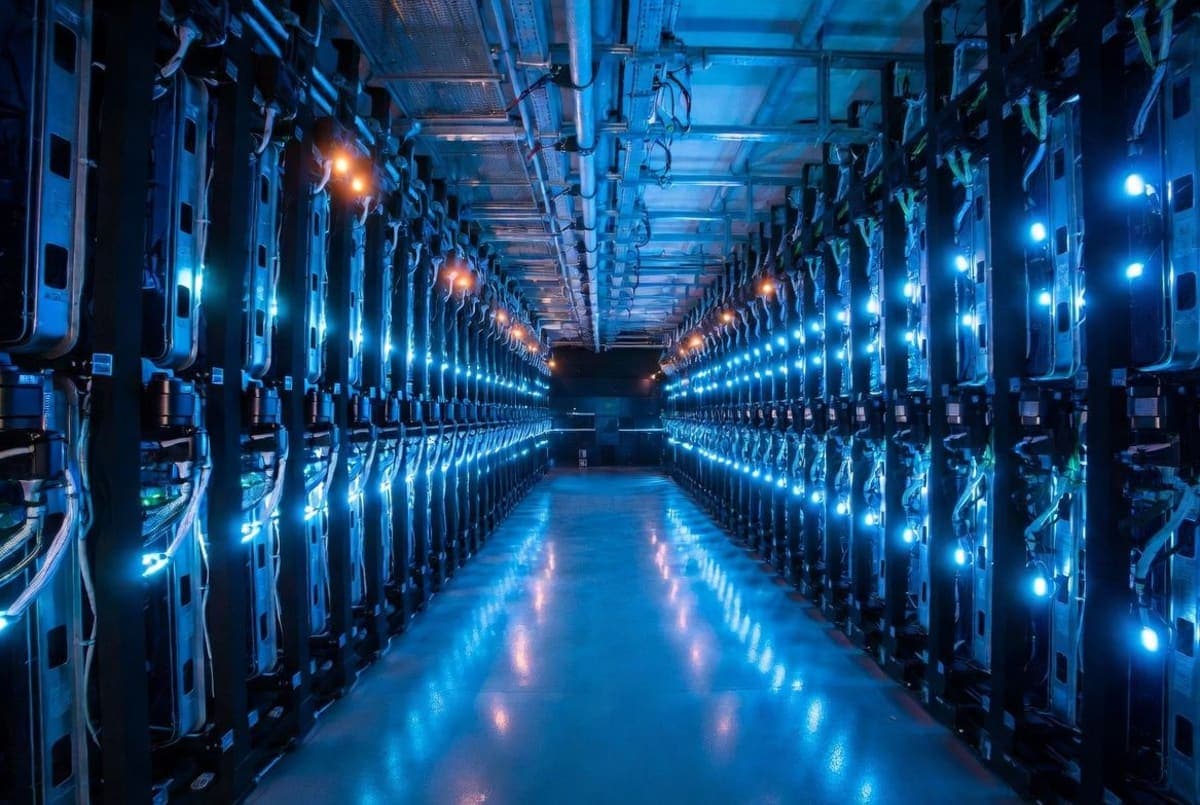

The hardware math

Suleyman's strongest case is in raw chip performance. Nvidia's datacenter GPUs went from 312 teraflops (dense 16-bit) with the A100 in 2020 to 2,250 teraflops with today's Blackwell architecture. That's about a 7x jump in six years, which checks out against Nvidia's published specs. Add HBM3 memory, which he says tripled bandwidth over its predecessor, plus NVLink linking GPUs inside a server or rack and datacenter networks tying hundreds of thousands of GPUs together, and Suleyman arrives at a training speedup of roughly 50x since 2020. Moore's Law alone, by his count, would have predicted about 5x.
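A quick check of the chip arithmetic, using only the A100 and Blackwell figures quoted above and treating both as like-for-like peak numbers. The Moore's Law baseline depends heavily on the doubling period you assume. A two-year doubling gives 8x over six years, so "about 5x" corresponds to a doubling roughly every 2.6 years. The helper names are illustrative only.

```python
import math

A100_TFLOPS = 312        # A100, 2020 (figure from the article)
BLACKWELL_TFLOPS = 2250  # Blackwell, today (figure from the article)
YEARS = 6

def chip_gain(old: float, new: float) -> float:
    """Ratio of peak throughput between two chip generations."""
    return new / old

def moores_law_factor(years: float, doubling_years: float) -> float:
    """Growth predicted by a fixed doubling period over `years`."""
    return 2 ** (years / doubling_years)

def implied_doubling_period(years: float, factor: float) -> float:
    """Doubling period that would produce `factor` growth over `years`."""
    return years / math.log2(factor)

print(f"A100 -> Blackwell: {chip_gain(A100_TFLOPS, BLACKWELL_TFLOPS):.1f}x")  # 7.2x
print(f"2-year doubling over {YEARS} years: {moores_law_factor(YEARS, 2):.0f}x")  # 8x
print(f"'About 5x' implies doubling every "
      f"{implied_doubling_period(YEARS, 5):.1f} years")  # 2.6 years
```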

He also plugs Microsoft's own Maia 200 chip, launched in January, which he says delivers 30% better performance per dollar than other hardware in Microsoft's fleet. That claim comes straight from Microsoft's own blog, so weigh it accordingly. The Maia 200 is also an inference chip, not a training chip, a distinction Suleyman doesn't bother to make.

Software is the quieter story

Research from Epoch AI suggests the compute needed to hit a fixed performance level halves roughly every eight months. Suleyman cites this alongside a claim that serving costs for some models have dropped by a factor of 900 on an annualized basis. The Epoch data is solid, though the 900x figure deserves scrutiny: Epoch's own dashboard notes inference cost declines vary wildly depending on which performance milestone you measure against, ranging from 9x to 900x per year. Suleyman picked the top of that range, which is the kind of thing CEOs do.
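To make the eight-month halving time concrete, the sketch below converts it into multi-year efficiency factors. It also contrasts the two ends of Epoch's 9x-to-900x inference-price range when each is sustained for two years. It assumes each trend holds steady, which Epoch does not promise. The function names are hypothetical.

```python
HALVING_MONTHS = 8  # Epoch AI estimate cited above

def efficiency_gain(months: float, halving_months: float = HALVING_MONTHS) -> float:
    """How many times less compute a fixed capability level needs after `months`,
    if that requirement halves every `halving_months`."""
    return 2 ** (months / halving_months)

def compounded(annual_factor: float, years: float) -> float:
    """Total decline after `years` at a constant `annual_factor` per year."""
    return annual_factor ** years

for months in (12, 24, 36):
    print(f"after {months} months: {efficiency_gain(months):.1f}x less compute")
# after 12 months: 2.8x, after 24: 8.0x, after 36: 22.6x

for annual in (9, 900):
    print(f"{annual}x/yr sustained for 2 years: {compounded(annual, 2):,.0f}x")
# 81x versus 810,000x
```

The spread shows why the choice of milestone matters. Compounding turns a 100x gap in annual rates into a 10,000x gap after just two years.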

"The compute explosion is the technological story of our time, full stop," he writes, and then calls skeptics linear thinkers who keep getting surprised. Fair enough, but Suleyman is not a disinterested observer. He runs the division building the infrastructure he's promoting.

So where's the ceiling?

The 200-gigawatt figure that Suleyman projects for annual AI compute deployment by 2030 traces back to semiconductor analyst Dylan Patel of SemiAnalysis, who laid out the math on the Dwarkesh Podcast last month. Patel's reasoning: by 2030, ASML will have about 700 EUV lithography tools deployed globally, each supporting roughly 0.3 gigawatts of AI chip production per year. That gets you to around 200 GW, which Suleyman compares to the combined peak power demand of the UK, France, Germany, and Italy.
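Patel's estimate is a simple product, which makes its sensitivity easy to see. The sketch below reproduces it and then varies the per-tool figure. The 0.25 and 0.35 GW values are hypothetical, chosen only to show how far the total moves. They are not estimates from Patel.

```python
EUV_TOOLS_2030 = 700  # Patel's projected installed base of ASML EUV tools
GW_PER_TOOL = 0.3     # Patel's rough AI-chip output per tool, in GW per year

def annual_ai_chip_gw(tools: int, gw_per_tool: float) -> float:
    """Gigawatts of AI chips producible per year from a given EUV fleet."""
    return tools * gw_per_tool

print(f"baseline: {annual_ai_chip_gw(EUV_TOOLS_2030, GW_PER_TOOL):.0f} GW/yr")  # 210

# Hypothetical per-tool values, for sensitivity only
for per_tool in (0.25, 0.35):
    print(f"{per_tool} GW/tool: {annual_ai_chip_gw(EUV_TOOLS_2030, per_tool):.0f} GW/yr")
# 175 and 245 GW/yr
```

A swing of 0.05 GW per tool moves the total by 35 GW, about a sixth of the baseline.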

Patel himself is more cautious than Suleyman about what that number means. He points out that the real bottleneck by 2028 or 2029 won't be power or data centers but ASML's ability to manufacture enough lithography machines. "No one really sees demand for 200 gigawatts a year of AI chips," Patel said in the interview, noting the semiconductor supply chain is not, in his words, "AGI-pilled."

Suleyman waves away the energy constraint by citing solar cost declines of nearly 100x over 50 years and battery price drops of 97% over three decades. Both numbers come from Our World in Data and are accurate in isolation, but building gigawatt-scale solar and storage capacity fast enough to match datacenter construction timelines is a different problem entirely. Jigar Shah, who ran the Energy Department's Loan Programs Office under President Biden, has publicly pushed back on the idea that scaling power is easy, calling it naive.

The pitch underneath the essay

Strip away the MIT Technology Review byline and this is a positioning statement. Suleyman is telling investors, regulators, and competitors that Microsoft is building for a world of "cognitive abundance" where AI agents handle weeks-long projects, write code continuously, negotiate contracts, and manage logistics. He describes a shift from chatbots to "semiautonomous systems" without offering benchmarks, timelines, or evidence that current models can sustain reliable autonomous operation for hours, let alone weeks.

The compute growth numbers are real. Training compute for frontier models has been growing at 4-5x per year since 2020, according to Epoch AI's database of over 3,200 models. Global AI compute is expected to reach 100 million H100-equivalents by 2027. But whether more compute automatically translates into the agent capabilities Suleyman describes is a separate question, and he doesn't engage with it. Google DeepMind CEO Demis Hassabis, among others at the lab, has put the odds that scaling alone reaches AGI-level performance at roughly a coin flip, arguing that architectural breakthroughs will likely be needed alongside raw compute.
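Here is a back-of-envelope check on the 1,000x headline, using the article's own figures. Those are 4-5x annual growth in frontier training compute and the eight-month halving time for compute needed per capability level, which works out to about 2.83x per year. The sketch assumes the two trends simply multiply and stay constant. It is a rough illustration, not Suleyman's stated method.

```python
import math

ALGO_GAIN_PER_YEAR = 2 ** (12 / 8)  # ~2.83x/yr from an 8-month halving time
TARGET = 1000                       # Suleyman's effective-compute multiple

def years_to_reach(target: float, annual_factor: float) -> float:
    """Years of steady compounding at `annual_factor` per year to grow by `target`x."""
    return math.log(target) / math.log(annual_factor)

for compute_growth in (4.0, 5.0):
    raw = years_to_reach(TARGET, compute_growth)
    effective = years_to_reach(TARGET, compute_growth * ALGO_GAIN_PER_YEAR)
    print(f"{compute_growth:.0f}x/yr compute: {raw:.1f} years alone, "
          f"{effective:.1f} years with algorithmic gains")
# 4x/yr: 5.0 vs 2.8 years; 5x/yr: 4.3 vs 2.6 years
```

On training compute alone, 1,000x takes roughly 4.3 to 5 years at those rates. Counting algorithmic gains shrinks that to about 2.6 to 2.8 years. The headline leans heavily on treating efficiency gains as "effective" compute and on both trends holding steady.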

Microsoft's next major AI infrastructure update is expected alongside its fiscal Q3 earnings later this month.