Google Research published a paper in TMLR on April 16, 2026 describing Simula, a synthetic data framework that generates training datasets from scratch with no seed examples. The framework is not actually new inside Google. It has already been training production models for months, including ShieldGemma, MedGemma, and the safety classifiers running on Gemini both on-device and server-side.

That retroactive reveal is the part worth paying attention to.

The reasoning-first pitch

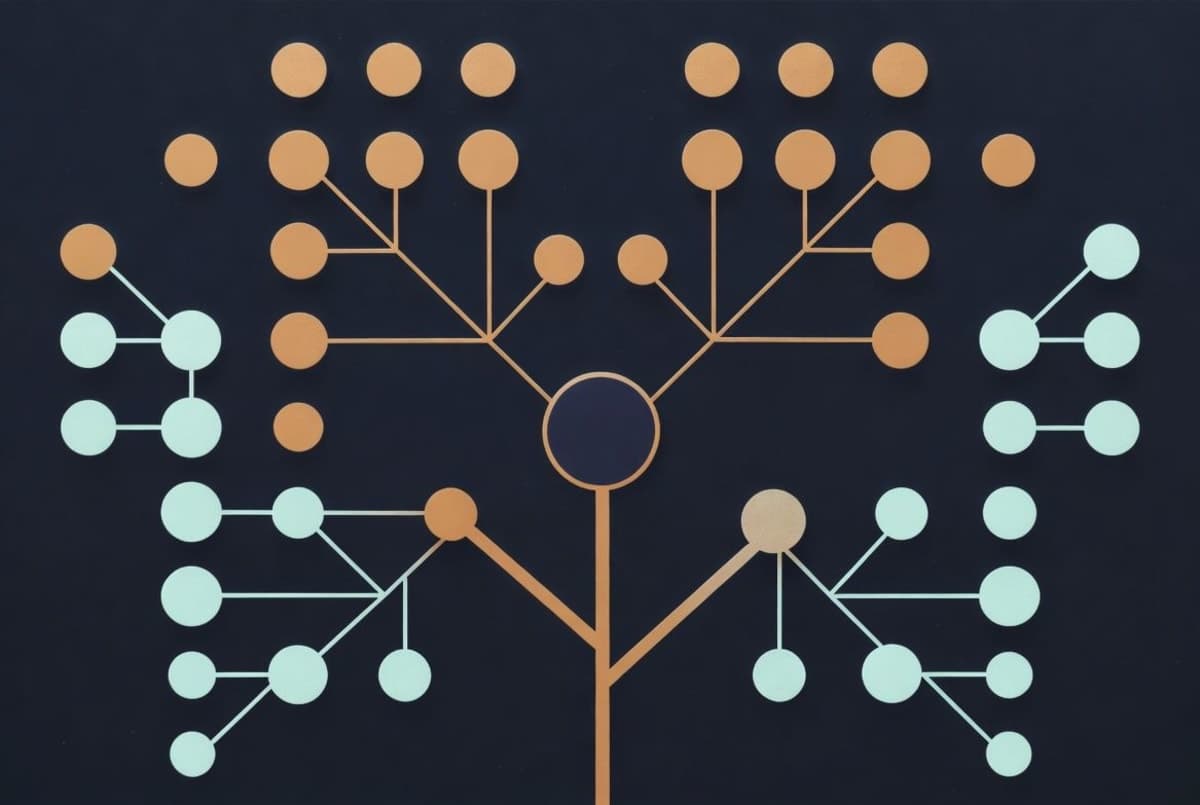

Simula skips the usual seed-data step entirely. A reasoning model takes a domain description, recursively builds a hierarchical taxonomy of sub-concepts, and samples nodes from it to produce "meta-prompts" (essentially scenario descriptions). An optional complexification pass rewrites a configurable fraction of those meta-prompts to be harder. Two independent critic models then grade the outputs. The authors argue this mitigates sycophancy, since a single judge might rubber-stamp a plausible-sounding wrong answer.
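To make those four stages concrete, here is a minimal sketch of how such a pipeline could be wired together. It is not Google's implementation: the function names, prompt strings, and the `toy_model` stub are all hypothetical, and a real version would call an actual reasoning model where the stub sits.

```python
import random
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical interfaces: a model maps a prompt to text, a critic maps text to pass/fail.
Model = Callable[[str], str]
Critic = Callable[[str], bool]


@dataclass
class Node:
    concept: str
    children: list["Node"] = field(default_factory=list)


def build_taxonomy(model: Model, concept: str, depth: int, branching: int) -> Node:
    """Recursively ask the model for sub-concepts until the depth budget runs out."""
    node = Node(concept)
    if depth > 0:
        reply = model(f"List {branching} sub-concepts of: {concept}")
        for sub in reply.split(";")[:branching]:
            node.children.append(build_taxonomy(model, sub.strip(), depth - 1, branching))
    return node


def leaves(node: Node) -> list[str]:
    """Collect the leaf concepts, which serve as the sampling pool."""
    if not node.children:
        return [node.concept]
    return [leaf for child in node.children for leaf in leaves(child)]


def generate(model: Model, critics: list[Critic], domain: str, n: int,
             complexify_fraction: float, rng: random.Random,
             depth: int = 2, branching: int = 3) -> list[str]:
    """Taxonomy -> sampled meta-prompts -> optional complexification -> critic filter."""
    pool = leaves(build_taxonomy(model, domain, depth, branching))
    kept = []
    for _ in range(n):
        meta_prompt = f"Write a scenario about: {rng.choice(pool)}"
        if rng.random() < complexify_fraction:
            meta_prompt = model(f"Rewrite to be harder: {meta_prompt}")
        sample = model(meta_prompt)
        # Keep a sample only if every critic independently accepts it.
        if all(critic(sample) for critic in critics):
            kept.append(sample)
    return kept


def toy_model(prompt: str) -> str:
    """Deterministic stub standing in for a reasoning model (assumption, for illustration)."""
    if prompt.startswith("List"):
        count = int(prompt.split()[1])
        topic = prompt.split(": ", 1)[1]
        return "; ".join(f"{topic}/{i}" for i in range(count))
    return f"[{prompt}]"
```

Calling `generate(toy_model, [critic_a, critic_b], "phishing", 20, 0.5, random.Random(0))` with two critic functions of your own returns only the samples both critics accept. Requiring agreement from both is one simple reading of "two independent critics"; the paper's actual aggregation rule may differ.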

The pitch, from the TMLR paper, is that this reframes synthetic data generation as mechanism design. Coverage, complexity, and quality become separate dials rather than a single tangled knob. The blog post describes Simula as "seedless and agentic," which in practice means you describe what domain you want and the reasoning model works out the taxonomy.
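One way to picture "separate dials" is as a configuration object where each field can change without disturbing the others. The sketch below is purely illustrative: the field names and default values are assumptions, not parameters taken from the paper.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Dials:
    # Coverage: how wide and deep the taxonomy is expanded (hypothetical names).
    taxonomy_depth: int = 2
    branching: int = 3
    # Complexity: share of meta-prompts rewritten to be harder.
    complexify_fraction: float = 0.3
    # Quality: how many critics must accept a sample.
    critics_required: int = 2

    def leaf_concepts(self) -> int:
        """Number of leaf concepts in a full taxonomy of this shape."""
        return self.branching ** self.taxonomy_depth


base = Dials()
# Turn up complexity only; coverage and quality settings stay exactly as they were.
harder = replace(base, complexify_fraction=0.8)
```

Here `harder` differs from `base` in one field, so an experiment that compares the two isolates the effect of complexity, which is the kind of ablation the benchmark section relies on.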

Sounds clean. In practice the results are messier, and to Google's credit the paper says so.

About those benchmarks

Google tested Simula across five domains, using Gemini 2.5 Flash as the teacher and Gemma-3 4B as the student, and generating up to 512,000 data points per dataset. The benchmarks include CTIBench for cybersecurity, LEXam for legal reasoning, GSM8k for grade-school math, and Global MMLU for multilingual knowledge.

Here's where it gets interesting. High-complexity data improved performance on GSM8k by roughly 10 percentage points. On LEXam, complexification hurt performance. Google frames this as "context is king," which is one way to put it. Another way: if your teacher model is weak in a domain, feeding the student harder problems makes things worse. That is a real limitation, not a feature, and it has implications for anyone planning to run Simula-style pipelines on domains their underlying model doesn't already handle well.

The benchmark choices also deserve a second look. CTIBench and LEXam are recent enough to limit contamination risk. GSM8k, by contrast, has been around since 2021, and any frontier model has almost certainly seen it during training. Whether Simula-generated data transfers to domains Google didn't publish results on is the open question, and the paper doesn't really answer it.

What's actually shipping

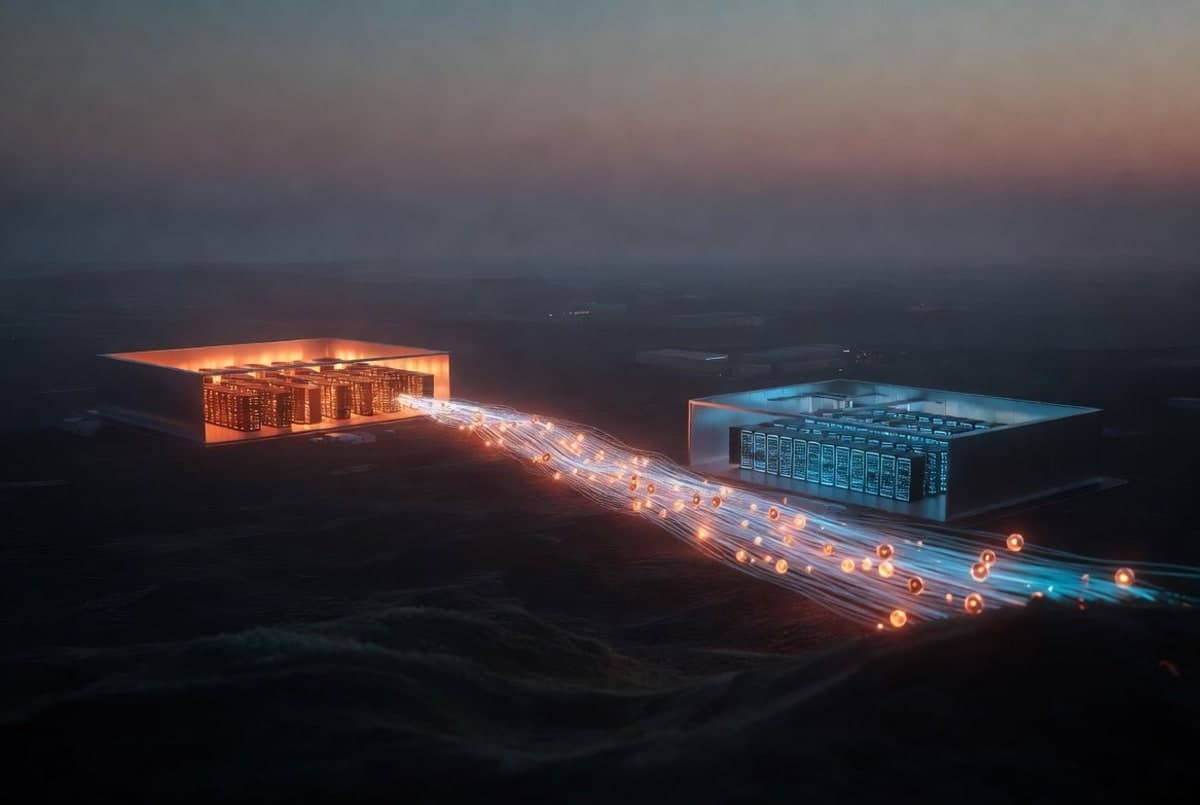

The deployment list is the most convincing part of this whole thing, and it's in the blog post rather than the TMLR submission. Simula-generated data trains ShieldGemma, FunctionGemma, and MedGemma. The same pipeline produces training data for Gemini's safety classifiers.

And it's behind scam detection for Android calls, plus spam filtering in Google Messages. Those aren't research demos. They're shipped features filtering live traffic for hundreds of millions of users, which is a much harder claim to argue with than a benchmark number.

I'd want more detail before calling any specific accuracy improvement definitive. But the deployment story carries its own weight.

The seedless question

"Seedless" deserves some pushback. Simula doesn't use domain-specific seed examples, granted. But the reasoning model building the taxonomy was trained on the internet. The whole pipeline inherits whatever biases, gaps, and blind spots the teacher model has. If Gemini 2.5 Flash is weak in a legal subdomain, Simula's taxonomy of that subdomain will be weak too. The LEXam result is basically that in action.

The paper acknowledges this obliquely. The broader question doesn't get a lot of airtime, though: does reasoning-first generation just move the sampling problem from "where did you get the seeds" to "what does your teacher know"?

What comes next

The framework is published but not open-sourced. Google has not announced plans to release the code, and the paper treats implementation details like the exact taxonomy-expansion prompts as proprietary. The 30-page PDF on OpenReview is currently the most detailed public description available. Anyone outside Google who wants to test this will have to rebuild it.

For the Gemma ecosystem, the more practical question is whether future Gemma variants will ship the Simula-generated training data alongside the weights. So far, they haven't.