The Allen Institute for AI released MolmoWeb on Tuesday, an open-source browser agent that navigates websites by looking at screenshots rather than parsing HTML. It comes in two sizes, 4B and 8B parameters, both small enough to run locally, and ships under Apache 2.0 with the weights, training data, evaluation tools, and inference code. Training code is expected to follow.

The timing is conspicuous. MolmoWeb dropped the same day Microsoft confirmed it had hired Ai2's recently departed CEO Ali Farhadi and several key researchers, including Hanna Hajishirzi (who led the OLMo language model effort) and Ranjay Krishna (multimodal). All three are joining Mustafa Suleyman's Superintelligence team. Ai2 founding member Peter Clark is serving as interim CEO while the board searches for a permanent replacement.

How it actually works

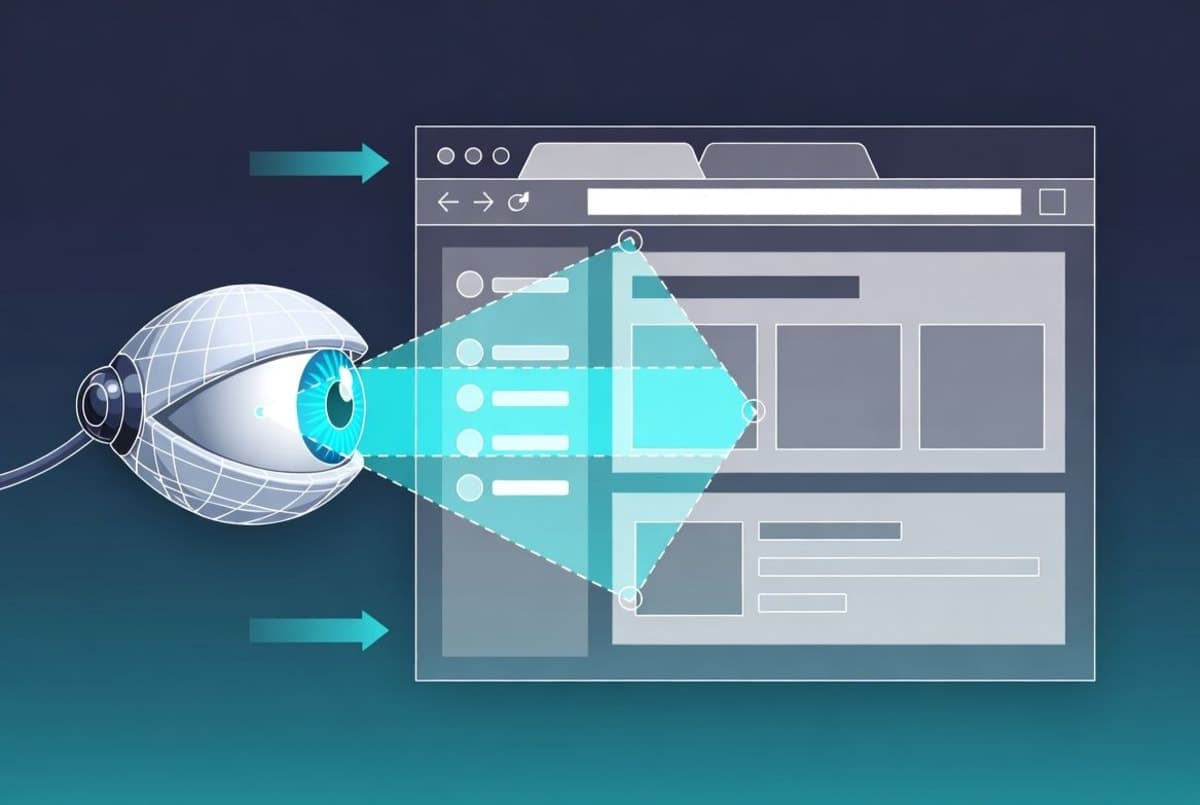

The loop is straightforward: MolmoWeb receives a task instruction and a screenshot, produces a short chain-of-thought reasoning step, then executes a browser action (click, type, scroll, navigate, switch tabs). Click locations are represented as normalized coordinates converted to pixels at execution time. No DOM parsing, no accessibility trees, no structured page representations. Just pixels.
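The coordinate step can be made concrete with a minimal sketch. It assumes the model emits click positions in the range [0, 1] relative to the screenshot; the article does not state the actual scale, and the `Viewport` type and `to_pixels` helper are hypothetical names for illustration, not part of MolmoWeb's code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Viewport:
    """Browser viewport size in pixels (hypothetical helper type)."""
    width: int
    height: int


def to_pixels(x_norm: float, y_norm: float, viewport: Viewport) -> tuple[int, int]:
    """Map a normalized click location to pixel coordinates.

    Assumes both inputs lie in [0, 1]; this range is an assumption,
    not something the article specifies.
    """
    if not (0.0 <= x_norm <= 1.0 and 0.0 <= y_norm <= 1.0):
        raise ValueError("normalized coordinates must lie in [0, 1]")
    # Clamp so that an input of exactly 1.0 still lands inside the viewport.
    x = min(int(x_norm * viewport.width), viewport.width - 1)
    y = min(int(y_norm * viewport.height), viewport.height - 1)
    return x, y


if __name__ == "__main__":
    print(to_pixels(0.5, 0.25, Viewport(1280, 720)))
```

Keeping the model's output resolution-independent in this way means the same prediction can be replayed on a differently sized browser window, with the conversion deferred until the click is actually issued.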

That design choice is interesting for a couple of reasons. Screenshots are compact: a single image, versus the tens of thousands of tokens a serialized accessibility tree can take. And visual interfaces tend to be more stable than underlying page structures, which can change or get deliberately obfuscated by sites trying to block bots. Ai2 argues this also makes the agent's behavior easier to debug, since it reasons about the same interface a human sees.

But it introduces a different problem: the model occasionally struggles to read text from screenshots. Ai2 acknowledges this in the tech report, along with unreliable drag-and-drop and degraded performance on ambiguous instructions. These are honest disclosures, and rare enough in launch announcements that they're worth noting.

The benchmarks (and what they leave out)

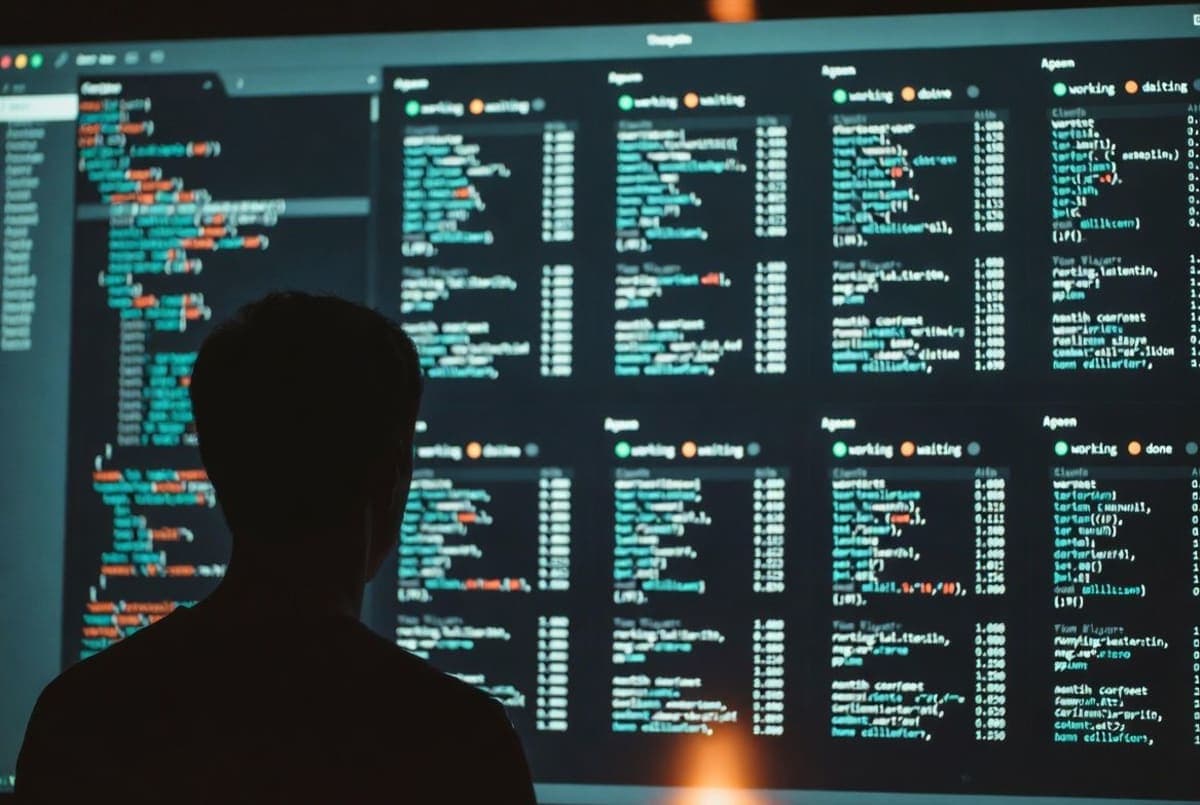

MolmoWeb was evaluated on four live-website benchmarks: WebVoyager, Online-Mind2Web, DeepShop, and WebTailBench. The 8B model scored 78.2% on WebVoyager, 42.3% on DeepShop, and 49.5% on WebTailBench, which Ai2 says makes it the top open-weight web agent across all four tests. It also beat agents built on GPT-4o that had access to both annotated screenshots and structured page data, which, given the parameter gap, is a genuinely surprising result.

On the grounding side, a dedicated 8B grounding model trained on MolmoWeb's data outperformed both Fara-7B and larger proprietary systems including Claude 3.7 and OpenAI CUA on the ScreenSpot benchmarks. The 4B model scored competitively while also handling full task completion, not just element localization.

Here is where I'd pump the brakes a bit. The GPT-4o comparison involves a now-retired model. Current proprietary agents from Anthropic, Google, and OpenAI still outperform MolmoWeb by a comfortable margin on most tasks. The New Stack put it well: Ai2's mission is less about beating frontier models and more about giving researchers an open foundation to build on. The benchmarks demonstrate competence, not dominance.

One number that did catch my eye: with test-time scaling (running multiple parallel rollouts and picking the best result), the 8B model reached 94.7% pass@4 on WebVoyager, up from 78.2% with a single attempt. That is a huge jump from throwing more inference compute at the problem, and it suggests the model has solid coverage even when individual runs fail.
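For readers unfamiliar with the metric, here is a rough sketch of how pass@k is commonly computed, assuming the usual definition in which a task counts as solved if at least one of k rollouts succeeds. The function names and the toy results are invented for illustration; they are not Ai2's evaluation code or data.

```python
def pass_at_k(results: list[list[bool]], k: int) -> float:
    """Fraction of tasks where at least one of the first k rollouts succeeded."""
    if not results:
        raise ValueError("need at least one task")
    if any(len(task) < k for task in results):
        raise ValueError("every task needs at least k rollouts")
    solved = sum(any(task[:k]) for task in results)
    return solved / len(results)


def independent_pass_at_k(p: float, k: int) -> float:
    """pass@k if every rollout succeeded independently with probability p."""
    return 1.0 - (1.0 - p) ** k


if __name__ == "__main__":
    # Toy data: four tasks, four rollouts each (made up for illustration).
    toy = [
        [True, False, True, True],
        [False, False, True, False],
        [False, False, False, False],
        [True, True, True, True],
    ]
    print(pass_at_k(toy, 1), pass_at_k(toy, 4))
    # Hypothetical upper reference point using the reported 78.2% single-attempt rate.
    print(round(independent_pass_at_k(0.782, 4), 4))
```

If every rollout were an independent draw at the reported 78.2% success rate, four attempts would solve roughly 99.8% of tasks. The reported 94.7% sits below that, which is what you would expect if failures cluster on a subset of tasks that stay hard no matter how many times the model retries.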

MolmoWebMix

The dataset might matter more than the model itself. MolmoWebMix combines 30,000 human task trajectories (recorded via a custom Chrome extension across 1,100+ websites), synthetic trajectories generated by text-only accessibility-tree agents, and 2.2 million screenshot question-answer pairs for training visual grounding. Ai2 calls it the largest publicly released dataset of human web-task execution.

The synthetic data pipeline is the part that deserves scrutiny. The team specifically avoided distilling from proprietary vision-based agents, using text-only accessibility-tree agents instead. That is a meaningful choice: it means MolmoWeb's training data doesn't inherit whatever biases or capabilities are baked into OpenAI's or Anthropic's systems. But it also means the synthetic component is limited by what text-based agents can accomplish, which is a different (and probably lower) ceiling.

What this is really about

Tanmay Gupta, a senior research scientist at Ai2, framed the use case in practical terms: running scheduled browser tasks, iterating queries at scale (like pulling h-index data for hundreds of authors), and chaining workflows where each step picks up from the last browser state. The model was explicitly not trained on tasks involving passwords, logins, or financial transactions.

The GitHub repo supports multiple backends (FastAPI, Modal, native checkpoints, HuggingFace Transformers) and uses Playwright for browser control. The model weights are on Hugging Face in both Transformers-compatible and Molmo-native formats. There is a hosted demo, though it only works with whitelisted websites.

The real question is whether the research community picks this up. Ai2 has structured MolmoWeb as a complete recipe, not just a model drop: data collection tools, a training pipeline, an evaluation harness, and an inference library. For an academic group or startup that wants to build a specialized browser agent without paying per-API-call to OpenAI or Anthropic, this is the most complete open starting point available right now.

Whether Ai2 can sustain this kind of output with its leadership decamping to Microsoft is a separate, harder question. The institute says 2026 programs are fully funded, and it has a $152 million NSF-Nvidia initiative still running. But frontier AI research at a nonprofit, as Ai2 board chair Bill Hilf acknowledged to GeekWire, is getting harder to justify when the compute bills keep climbing. MolmoWeb might be one of the last projects to benefit from the old guard's momentum.