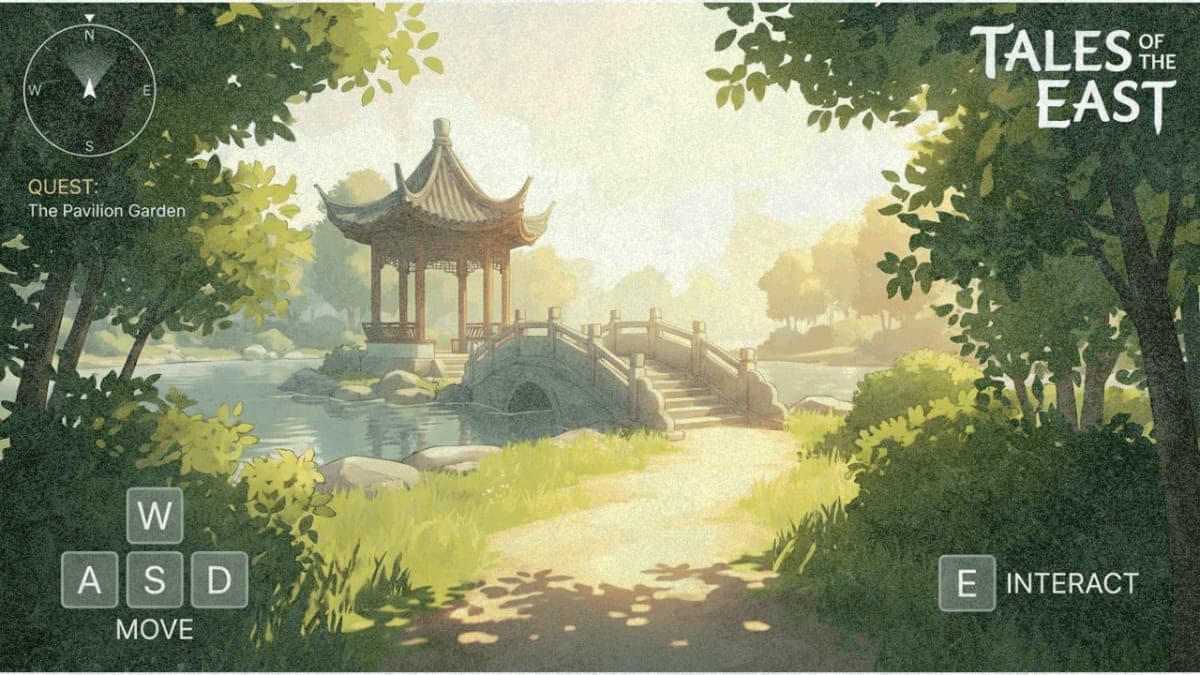

Tencent released HY World 1.5 (codenamed WorldPlay) on December 17, open-sourcing what it calls the most comprehensive real-time world model framework in the industry. The system generates explorable 3D environments from text or images, then lets users navigate them in real time using standard game controls.

The technical challenge here is the speed-memory tradeoff: generating frames fast enough for interactive use while still remembering what has already been shown. Previous world models could generate impressive environments but required lengthy offline processing. Tencent says WorldPlay addresses this with streaming video diffusion at 24 FPS while keeping geometry consistent when users revisit an area. Leave a room, come back, and it remembers the layout.

Four techniques make this work: Dual Action Representation for keyboard/mouse input, Reconstituted Context Memory that dynamically rebuilds scene context from past frames, a reinforcement learning framework called WorldCompass, and Context Forcing for model distillation. The system generates video in 16-frame chunks, which at 24 FPS works out to roughly 0.67 seconds of footage per chunk.
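The 0.67-second figure appears to follow directly from dividing the chunk length by the frame rate. Here is a minimal sketch of that arithmetic, assuming the figure refers to playback duration rather than measured generation time. The function names are illustrative and are not part of the WorldPlay codebase.

```python
from fractions import Fraction

FPS = 24               # frame rate reported by Tencent
FRAMES_PER_CHUNK = 16  # chunk size reported by Tencent


def chunk_duration_seconds(frames: int = FRAMES_PER_CHUNK, fps: int = FPS) -> Fraction:
    """Playback time covered by one chunk, as an exact fraction of a second."""
    return Fraction(frames, fps)


def keeps_up_with_playback(generation_seconds: float,
                           frames: int = FRAMES_PER_CHUNK,
                           fps: int = FPS) -> bool:
    """True if a chunk is produced no slower than it is played back."""
    return generation_seconds <= chunk_duration_seconds(frames, fps)


duration = chunk_duration_seconds()
print(f"Seconds of video per chunk: {float(duration):.2f}")
print(f"Chunks needed per minute of video: {60 / duration}")
```

Under this reading, a streaming generator has to finish each 16-frame chunk in about two thirds of a second on average to avoid stalling playback, which is why per-chunk latency matters as much as raw frame rate.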

Tencent has committed to releasing the full training pipeline (data processing, pre-training, RL post-training, and distillation), though the training code is not yet available. Three model variants are available on Hugging Face, built on HunyuanVideo-1.5. The minimum GPU requirement is 14 GB of VRAM with model offloading enabled. An online demo is live at 3d.hunyuan.tencent.com for those who want to skip the setup.

The Bottom Line: Full training code is still pending, but the inference code and model weights are available now under Tencent's community license.

QUICK FACTS

- Generates streaming video at 24 FPS (company-reported)

- 16-frame chunks per generation cycle

- Minimum 14GB GPU VRAM with model offloading

- Three model variants: bidirectional, autoregressive, distilled

- Built on HunyuanVideo-1.5 base model

- Training code not yet released