Runway has launched GWM-1, a world model that simulates environments frame by frame with an understanding of physics, geometry, and cause-and-effect relationships. Built on the company's Gen-4.5 video model, it runs in real time and responds to interactive inputs such as camera movement, robot commands, and audio.

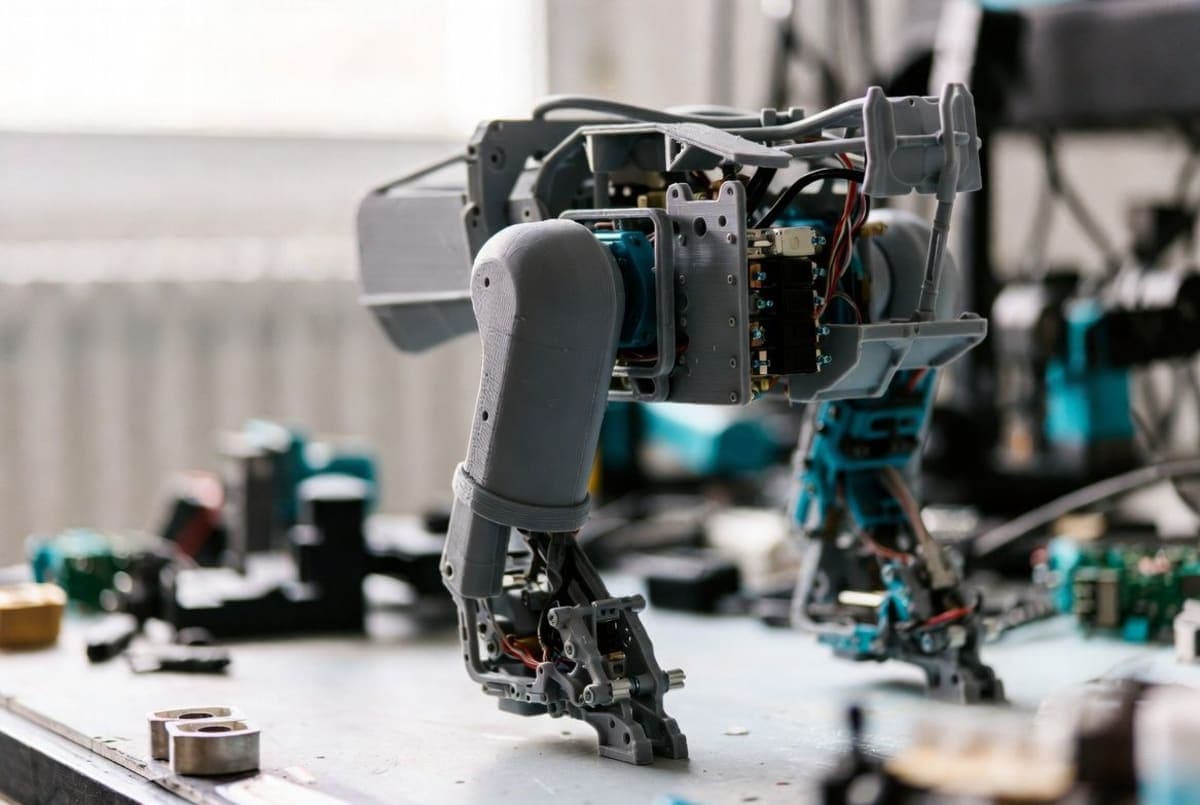

The release comes in three separate variants, which Runway says it plans to eventually merge into a single unified model. GWM Worlds generates explorable 3D environments from text or image prompts at 720p. GWM Robotics creates synthetic training data for robotic systems, letting developers test policy models in simulation rather than on physical hardware. GWM Avatars renders realistic human characters with lip-sync and expressions for extended conversations; it is not yet available.

The robotics piece is where Runway seems most focused on commercial traction. A Python SDK is already available for GWM Robotics, and the company says it's in active talks with robotics firms and enterprises. The pitch: generate edge-case scenarios (weather changes, obstacles, policy violations) that would be expensive or dangerous to test in the real world.

Runway claims GWM-1 is more "general" than Google's Genie 3 and similar projects, though shipping three separately post-trained models rather than one somewhat undercuts that framing. The company is positioning the release as a step beyond video generation and into simulation infrastructure for robotics, gaming, and AI agent training.

The Bottom Line: Runway ships its first world model with a robotics SDK ready for enterprise pilots, betting that synthetic training data is the near-term money.

QUICK FACTS

- Release date: December 11, 2025

- Three variants: GWM Worlds, GWM Robotics, GWM Avatars

- Output: 720p resolution, up to 2 minutes of video

- Built on Runway's Gen-4.5 video model

- Python SDK available for GWM Robotics

- GWM Avatars: "coming soon" (not yet launched)