NVIDIA kicked off CES 2026 last night with the formal launch of its Vera Rubin superchip platform, the successor to Blackwell that's been telegraphed for nearly a year now. The company is claiming 5x inference performance over Blackwell at one-tenth the token cost, though as always, the comparison is against its own previous generation rather than the competition.

What's actually in the box

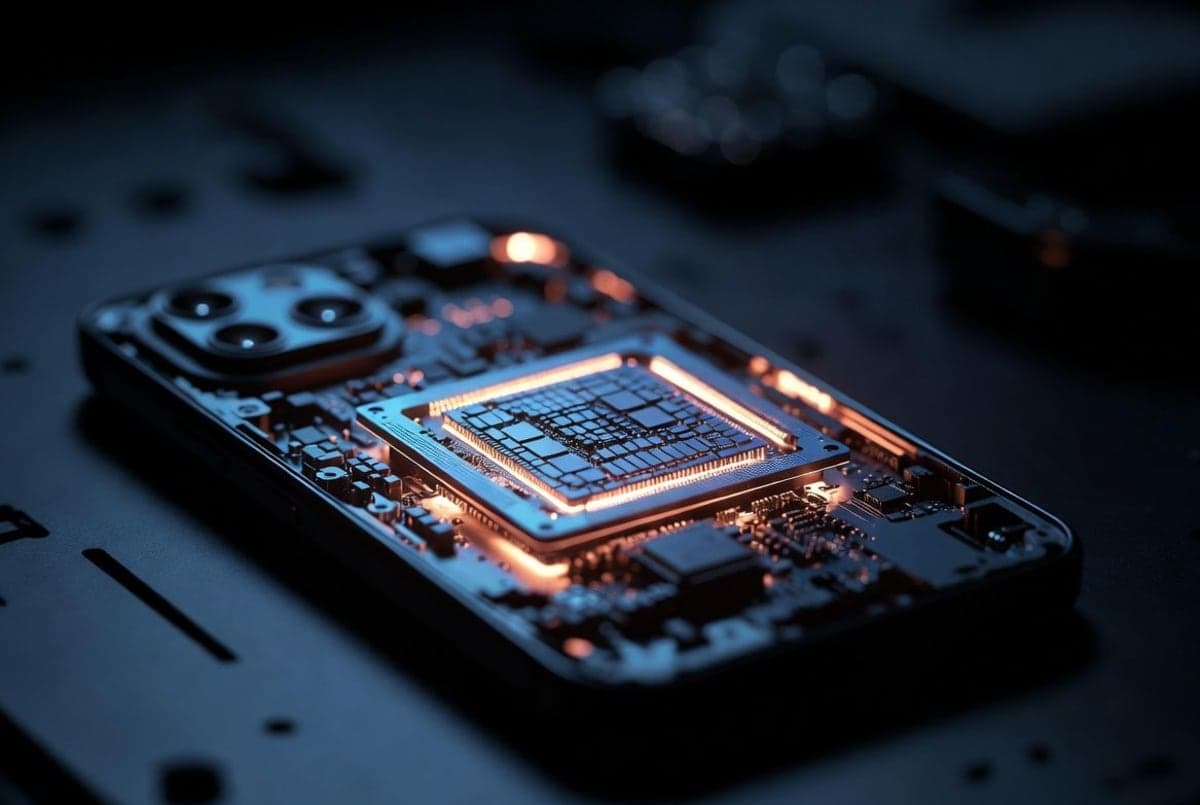

The Rubin platform comprises six chips designed to work together: the Vera CPU, Rubin GPU, NVLink 6 switch, ConnectX-9 SuperNIC, BlueField-4 data processing unit, and Spectrum-6 Ethernet switch. NVIDIA is calling this "extreme codesign," which is marketing speak for "we control the whole stack."

The Vera Rubin superchip pairs one Vera CPU with two Rubin GPUs. Each dual-die Rubin GPU churns out 50 petaFLOPS for inference and 35 petaFLOPS for training, using the NVFP4 data type. The memory upgrade is the standout: 288 GB of HBM4 per GPU socket, delivering 22 TB/s of bandwidth, or 2.8x Blackwell's. NVIDIA initially targeted 13 TB/s when it teased Rubin last year, and the company says the jump to 22 TB/s came from silicon improvements, not compression tricks. That's a notable upgrade, though it also makes you wonder what else might shift before these actually ship.
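
The bandwidth figures above can be sanity-checked with a little arithmetic. This is a minimal sketch that uses only the numbers quoted here. The implied Blackwell figure is back-calculated from a rounded 2.8x multiplier, so treat it as approximate. The helper names are hypothetical.

```python
# Figures quoted in the article for a single Rubin GPU socket.
RUBIN_HBM4_BANDWIDTH_TBPS = 22.0    # TB/s per GPU socket
CLAIMED_SPEEDUP_VS_BLACKWELL = 2.8  # "2.8x faster than Blackwell" (rounded)
INITIAL_TARGET_TBPS = 13.0          # bandwidth NVIDIA first teased for Rubin


def implied_baseline(new_value: float, speedup: float) -> float:
    """Back out the previous-generation figure implied by a quoted speedup."""
    return new_value / speedup


def uplift(new_value: float, old_value: float) -> float:
    """Ratio of a new figure to an old one."""
    return new_value / old_value


blackwell_tbps = implied_baseline(RUBIN_HBM4_BANDWIDTH_TBPS, CLAIMED_SPEEDUP_VS_BLACKWELL)
vs_target = uplift(RUBIN_HBM4_BANDWIDTH_TBPS, INITIAL_TARGET_TBPS)

print(f"Implied Blackwell bandwidth: {blackwell_tbps:.1f} TB/s")
print(f"Uplift over the initial 13 TB/s target: {vs_target:.2f}x")
```

The second number shows how large the late revision was: Rubin's bandwidth rose by roughly 1.7x over the target NVIDIA first teased.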

The flagship system is the Vera Rubin NVL72, which combines 72 GPUs (36 superchips) into a single rack-scale system. Jensen Huang cracked a joke about the rack's weight that apparently didn't land well. More usefully: building the chassis now takes five minutes instead of two hours. Progress.
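
Multiplying the per-GPU numbers out gives a rough picture of a single NVL72 rack. This sketch makes a naive assumption: all 72 GPUs hit their quoted peaks at the same time. It also ignores the CPUs, switches, and networking. The `GpuSpec` and `rack_totals` names are illustrative, not an NVIDIA API. The 3.6 exaFLOPS of inference it produces matches the figure NVIDIA claims for the rack.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    inference_pflops: float  # NVFP4 inference, petaFLOPS
    training_pflops: float   # NVFP4 training, petaFLOPS
    hbm_gb: float            # HBM4 capacity per GPU socket, GB
    hbm_tbps: float          # HBM4 bandwidth per GPU socket, TB/s


# Per-GPU figures as quoted in the article.
RUBIN = GpuSpec(inference_pflops=50, training_pflops=35, hbm_gb=288, hbm_tbps=22)

GPUS_PER_SUPERCHIP = 2  # one Vera CPU paired with two Rubin GPUs
GPUS_PER_RACK = 72      # Vera Rubin NVL72


def rack_totals(gpu: GpuSpec, gpus: int) -> dict:
    """Naive aggregate: per-GPU peak figures multiplied by GPU count (decimal units)."""
    return {
        "superchips": gpus // GPUS_PER_SUPERCHIP,
        "inference_exaflops": gpu.inference_pflops * gpus / 1000,
        "training_exaflops": gpu.training_pflops * gpus / 1000,
        "hbm4_tb": gpu.hbm_gb * gpus / 1000,
        "hbm4_bandwidth_pbps": gpu.hbm_tbps * gpus / 1000,
    }


totals = rack_totals(RUBIN, GPUS_PER_RACK)
for name, value in totals.items():
    print(f"{name}: {value:g}")
```

Decimal units are used throughout (1,000 GB per TB and 1,000 TB/s per PB/s). The results are peak sums, not sustained throughput.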

The numbers game

Here's what NVIDIA is claiming against Blackwell:

- 5x inference performance (NVFP4)

- 3.5x training performance

- 2.8x memory bandwidth

- 10x reduction in inference token costs

- 4x reduction in GPUs needed to train the same mixture-of-experts model

That last one matters. If you need fewer GPUs to train the same model, you can redeploy the excess. Or just train bigger models. Either way, it's the efficiency argument that hyperscalers actually care about.
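
To make the efficiency argument concrete, here is a back-of-the-envelope sketch that takes NVIDIA's claimed ratios at face value. The 10,000-GPU fleet and the $2.00-per-million-token price are hypothetical inputs chosen only for illustration. The function names are also made up for this example.

```python
import math

# Ratios as claimed by NVIDIA versus Blackwell.
GPU_REDUCTION_FOR_MOE_TRAINING = 4  # "4x reduction in GPUs needed"
TOKEN_COST_REDUCTION = 10           # "10x reduction in inference token costs"


def rubin_gpus_needed(blackwell_gpus: int, reduction: float = GPU_REDUCTION_FOR_MOE_TRAINING) -> int:
    """GPUs needed for the same MoE training job if the claimed ratio holds (rounded up)."""
    return math.ceil(blackwell_gpus / reduction)


def rubin_token_cost(blackwell_cost: float, reduction: float = TOKEN_COST_REDUCTION) -> float:
    """Per-token cost if the claimed inference-cost ratio holds."""
    return blackwell_cost / reduction


# Hypothetical: one MoE training job that occupies a 10,000-GPU Blackwell fleet.
fleet = 10_000
needed = rubin_gpus_needed(fleet)
print(f"Rubin GPUs for the same job: {needed}")
# If the fleet were swapped one-for-one for Rubin, the rest is spare capacity.
print(f"Left over for other work: {fleet - needed}")
print(f"Same-size jobs the swapped fleet could run at once: {fleet // needed}")

# Hypothetical price: $2.00 per million tokens on Blackwell.
print(f"Cost per million tokens: ${rubin_token_cost(2.00):.2f}")
```

Rounding up in `rubin_gpus_needed` reflects that you can't deploy a fraction of a GPU. Real jobs would also be constrained by rack and interconnect granularity, which this sketch ignores.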

AWS, Google Cloud, Microsoft, and Oracle will be among the first to deploy Vera Rubin instances in the second half of 2026. Microsoft specifically mentioned deploying these in its Fairwater AI superfactory sites. CoreWeave, Lambda, Nebius, and Nscale are also on the early list.

And then there's Alpamayo

The second big announcement was Alpamayo, NVIDIA's new family of open-source models for autonomous vehicle development. This is where things get genuinely interesting.

At the core is Alpamayo 1, a 10-billion-parameter chain-of-thought vision language action model that lets AVs reason through complex edge cases. The idea is that instead of just pattern-matching, the vehicle can break down problems step by step and explain its decisions. "Not only does it take sensor input and activate steering wheel, brakes, and acceleration, it also reasons about what action it's about to take," Huang said during the keynote.

The model weights are available on Hugging Face. There's also AlpaSim, an open-source simulation framework on GitHub, and 1,700+ hours of driving data covering edge cases. The dataset includes footage from 25 countries and over 2,500 cities.

JLR, Lucid, Uber, and Berkeley DeepDrive are already on board. Mercedes-Benz is putting Alpamayo into the new CLA, with AI-defined driving coming to the US this year. Bold claim. We'll see.

Why open-source? Huang noted that 80% of startups are building on open models. NVIDIA wants to be the infrastructure layer whether you're paying for their chips or training on their models. Convenient positioning.

The AMD-shaped elephant

NVIDIA didn't mention AMD by name, but the timing tells a story. AMD's Helios rack-scale platform, announced at OCP last fall, promises performance on par with Vera Rubin NVL72 while offering 50% more HBM4 capacity. That's AMD's claim, anyway.

Helios is built on the Open Rack Wide standard co-developed with Meta, making it attractive to hyperscalers wary of vendor lock-in. Oracle has already committed to deploying 50,000 MI450 GPUs starting in Q3 2026. The first Helios systems are expected around the same time as Vera Rubin.

On paper, Helios targets 2.9 exaFLOPS of FP4 inference versus NVIDIA's claimed 3.6 exaFLOPS. Close enough to matter. AMD hasn't disclosed power targets, though, and in a world where data centers are power-constrained, efficiency might matter more than peak FLOPS.
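
The power question can be framed without knowing AMD's numbers. Peak FLOPS per watt comes out equal when the power ratio matches the FLOPS ratio. The sketch below uses that to compute how much of NVL72's rack power Helios could draw and still break even. It has three limits. It uses only the vendor claims quoted above. It assumes AMD's "50% more HBM4" applies per GPU against Rubin's 288 GB. It compares peak rather than sustained throughput. The function name is hypothetical.

```python
# Rack-level figures quoted in the article (vendor claims, not measurements).
NVL72_FP4_EXAFLOPS = 3.6   # NVIDIA's claim for Vera Rubin NVL72
HELIOS_FP4_EXAFLOPS = 2.9  # AMD's target for Helios
RUBIN_HBM4_GB = 288        # HBM4 per Rubin GPU socket
HELIOS_HBM4_FACTOR = 1.5   # AMD's "50% more HBM4" claim, ASSUMED to apply per GPU


def flops_per_watt_breakeven(challenger_flops: float, incumbent_flops: float) -> float:
    """Largest challenger/incumbent power ratio at which the challenger still matches
    the incumbent on peak FLOPS per watt:
    F_c / P_c >= F_i / P_i  <=>  P_c / P_i <= F_c / F_i."""
    return challenger_flops / incumbent_flops


ratio = flops_per_watt_breakeven(HELIOS_FP4_EXAFLOPS, NVL72_FP4_EXAFLOPS)
helios_hbm4_gb = RUBIN_HBM4_GB * HELIOS_HBM4_FACTOR

print(f"Helios peak FP4 relative to NVL72: {ratio:.0%}")
print(f"Helios matches NVL72 on peak FP4 per watt only if it draws at most {ratio:.0%} of NVL72's rack power")
print(f"Implied HBM4 per AMD GPU: {helios_hbm4_gb:.0f} GB")
```

AMD hasn't published a power figure, so this sketch uses no rack power numbers. Once both are public, dividing each rack's FLOPS by its power settles the comparison directly.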

What NVIDIA isn't saying

A few things were conspicuously absent. No consumer GPUs at CES this year, which used to be the whole point. No specifics on Rubin pricing. And while NVIDIA said Rubin is "in full production," in practice that means test chips are back from the fab and performing well. The actual ramp to volume production doesn't start until the second half of 2026.

The company also introduced new AI-native storage (Inference Context Memory Storage) and silicon photonics switches, which are legitimately impressive but buried in the announcement. The storage platform promises 5x higher tokens per second for long-context inference. If you're running trillion-parameter models with extended context windows, this matters more than another GPU spec bump.

So where does this leave things

NVIDIA remains the default choice for anyone who needs AI infrastructure yesterday. The annual cadence is relentless, and even if AMD catches up on specs, the software ecosystem gap is real. But the overall gap is narrowing. AMD's open-standards play with Meta and HPE gives hyperscalers an actual alternative for the first time.

Rubin systems should start hitting data centers in the back half of 2026. The Mercedes CLA with Alpamayo is supposedly coming to US roads this year.