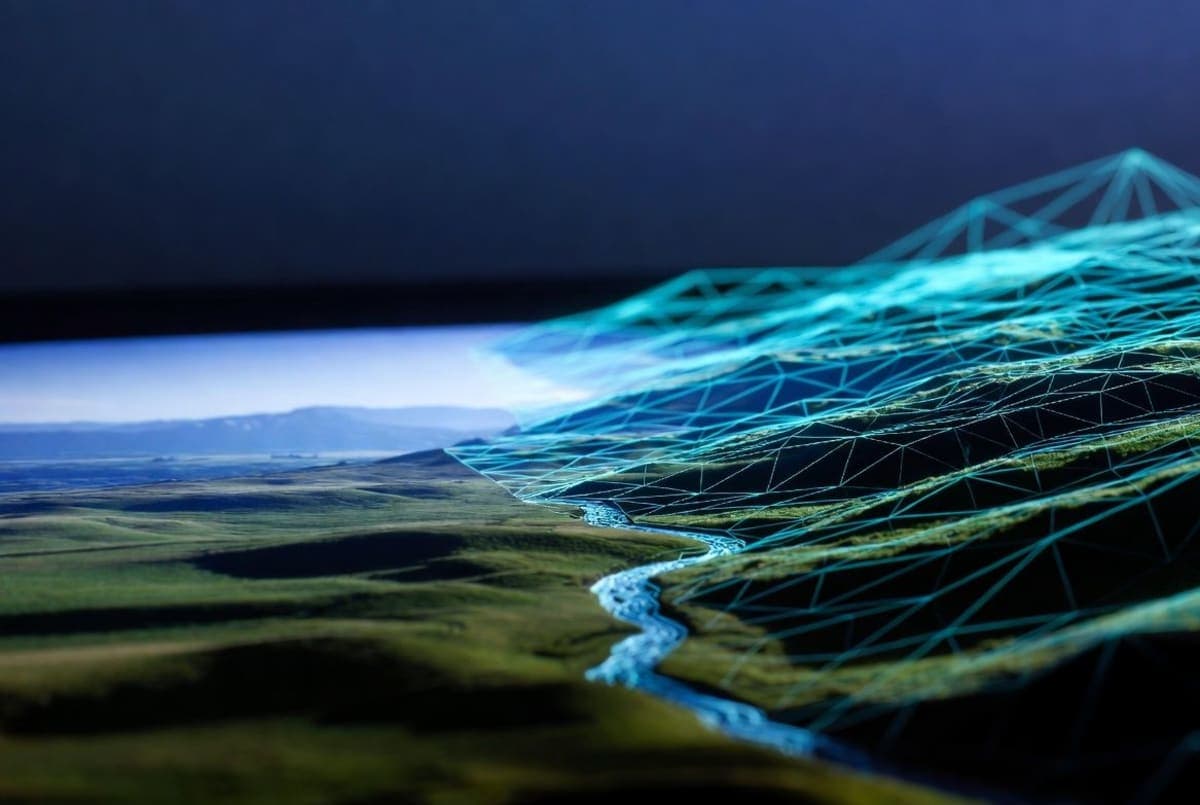

NVIDIA's Spatial Intelligence Lab released Lyra 2.0, a framework that converts a single photograph into a navigable, geometrically consistent 3D world. The research paper dropped April 14, with model weights and source code available under Apache 2.0. Commercial use is permitted.

The core problem: AI video models lose track of what a scene looks like over long camera trajectories. Walk far enough, turn around, and the room you started in has warped beyond recognition. Two specific failure modes cause this. The first is spatial forgetting, where previously seen regions fall outside the model's context window and get hallucinated on revisit. The second is temporal drifting, where small errors compound frame over frame until the whole scene degrades. Lyra 2.0 tackles forgetting by caching per-frame 3D geometry, which it uses to retrieve past views and establish spatial correspondences. For drift, it trains on its own degraded outputs, learning to correct accumulated errors rather than amplify them.

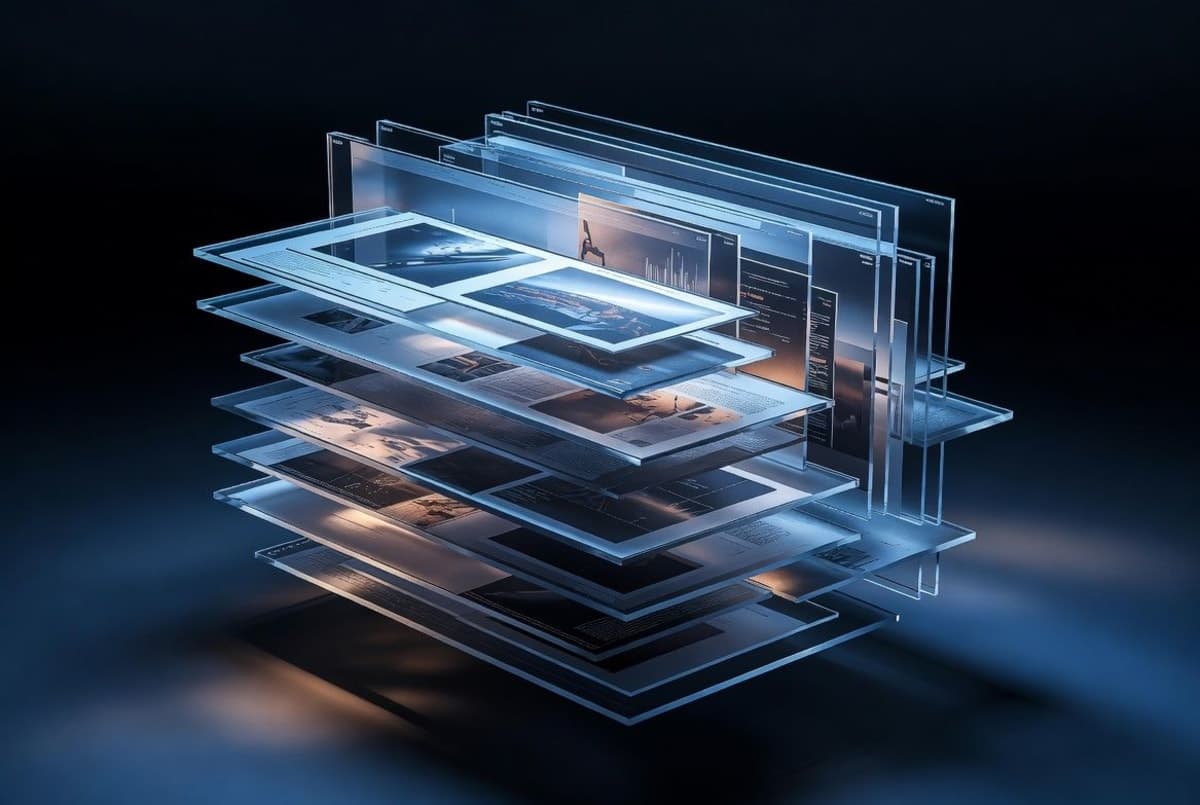

Built on Wan 2.1-14B (a diffusion transformer for video generation), the system produces frames at 832x480 resolution in 35 denoising steps. A distilled variant cuts that to 4 steps. Outputs come as 3D Gaussian Splats and surface meshes that plug directly into real-time rendering engines. NVIDIA's project page shows exports into Isaac Sim for robotics training, where a delivery robot can train in a simulated facility built from a single photo of the real space.
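As a rough sense of scale, the figures above can be turned into quick arithmetic. The sketch below assumes every denoising step costs about the same, which the article does not state, so the ratio is a count of denoiser passes, not a measured speedup.

```python
# Figures quoted in the article.
WIDTH, HEIGHT = 832, 480
FULL_STEPS, DISTILLED_STEPS = 35, 4

pixels_per_frame = WIDTH * HEIGHT
# Assumption: each denoising step has roughly equal cost.
step_reduction = FULL_STEPS / DISTILLED_STEPS

print(f"Pixels per frame: {pixels_per_frame:,}")                    # 399,360
print(f"Denoiser passes, full vs distilled: {step_reduction:.2f}x")  # 8.75x
```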

On the DL3DV and Tanks and Temples benchmarks, NVIDIA reports an LPIPS of 0.552, an FID of 51.33 (lower is better for both), and style consistency of 85.07%. These are company-reported numbers with no independent verification yet. Lyra 1.0 shipped in September 2025 with the core single-image-to-3D pipeline; this release focuses on scaling to larger, more complex environments. No managed inference endpoint exists at launch. You'll need your own GPU hardware, and NVIDIA says it has been tested only on H100 and A100 cards.

Bottom Line

Lyra 2.0 ships under Apache 2.0 with weights on Hugging Face, but running it locally means bringing your own GPU: NVIDIA has tested it only on H100 and A100 cards, and it needs roughly 43GB of VRAM with full offloading.

Quick Facts

- License: Apache 2.0, commercial use permitted

- Base model: Wan 2.1-14B diffusion transformer

- Resolution: 832x480, 35 denoising steps (4 steps distilled)

- Benchmarks (company-reported): LPIPS 0.552, FID 51.33, style consistency 85.07%

- Hardware: tested on H100/A100 only, ~43GB VRAM with full offloading
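Before downloading the weights, it may be worth checking a machine against the hardware figures above. The following is a minimal sketch, not an official NVIDIA tool: the helper names are hypothetical, the thresholds are copied from this article, and 1024 MiB is treated as 1 GB for a rough comparison. It parses the CSV output of `nvidia-smi --query-gpu=name,memory.total --format=csv,noheader,nounits`, which reports memory in MiB.

```python
import subprocess

TESTED_FAMILIES = ("H100", "A100")  # cards the article says were tested
MIN_VRAM_GB = 43.0                  # approximate figure, with full offloading


def parse_gpus(csv_text: str) -> list[tuple[str, float]]:
    """Parse 'name, memory_mib' lines into (name, vram_gb) tuples."""
    gpus = []
    for line in csv_text.splitlines():
        if not line.strip():
            continue
        name, mem_mib = (part.strip() for part in line.rsplit(",", 1))
        gpus.append((name, float(mem_mib) / 1024))
    return gpus


def check_gpu(name: str, vram_gb: float) -> dict[str, bool]:
    """Compare one GPU against the article's reported figures."""
    return {
        "tested_family": any(family in name for family in TESTED_FAMILIES),
        "enough_vram": vram_gb >= MIN_VRAM_GB,
    }


def query_local_gpus() -> list[tuple[str, float]]:
    """Query the local machine; requires nvidia-smi on PATH."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpus(result.stdout)


# Example with canned output, so the sketch runs without a GPU present.
sample = "NVIDIA A100-SXM4-80GB, 81920\nNVIDIA GeForce RTX 4090, 24564\n"
for gpu_name, gpu_vram in parse_gpus(sample):
    print(gpu_name, round(gpu_vram, 1), check_gpu(gpu_name, gpu_vram))
```

On a real machine, replace `parse_gpus(sample)` with `query_local_gpus()`. A card outside the tested families is not necessarily unusable; the check only reflects what NVIDIA says it has tested.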