QUICK INFO

| Difficulty | Intermediate |
| Time Required | 60-80 hours (full curriculum) |
| Prerequisites | Python programming, basic linear algebra, NumPy fundamentals |
| Tools Needed | Python 3.8+, Jupyter Lab, Git |

What You'll Learn:

- Build ML framework components from scratch (tensors, autograd, optimizers)

- Understand production ML systems architecture

- Deploy models to edge devices (Arduino, Raspberry Pi)

This guide walks you through MLSysBook, an open-source textbook from Harvard's CS249r course. It's different from most ML resources because the focus is infrastructure, not algorithms. You won't just train models. You'll build the systems that make training possible.

The textbook covers data engineering, model optimization, deployment, and edge computing across 19 chapters. There's also TinyTorch, a companion framework where you implement autograd, attention mechanisms, and training loops yourself. I'll cover the textbook structure, how TinyTorch works, and a realistic study path.

Getting Started

Grab the materials first. You can read online at mlsysbook.ai, but I recommend downloading the PDF for offline annotation:

```bash
# Download the PDF (roughly 500 pages)
curl -O https://mlsysbook.ai/pdf

# Or EPUB if you prefer
curl -O https://mlsysbook.ai/epub
```

For TinyTorch, run the install script, then activate the environment it creates:

```bash
curl -sSL mlsysbook.ai/tinytorch/install.sh | bash
cd tinytorch
source .venv/bin/activate
tito setup
```

The tito command is TinyTorch's CLI tool. It tracks module progress and runs validation tests.

One thing that confused me initially: the textbook and TinyTorch are separate but complementary. The textbook covers concepts. TinyTorch has you implement them. You could do either independently, but they're designed to work together.

Textbook Structure

The book is organized into six parts. I'll focus on what each actually contains rather than the marketing descriptions.

Part I: Foundations covers what makes ML systems different from traditional software. Chapter 1 (Introduction) is worth reading even if you skip ahead, since it establishes vocabulary the rest of the book uses. Chapter 2 (ML Systems) gets into architectural patterns. If you've worked with production ML, some of this will feel familiar.

Part II: Core ML has deep learning fundamentals and the training workflow. Nothing groundbreaking here if you've taken an ML course, but Chapter 4 (AI Workflow) is useful for understanding how the textbook frames the lifecycle.

Part III: Performance is where things get interesting. The chapters on efficient AI, model optimization, and acceleration cover quantization, pruning, and knowledge distillation. Chapter 12 (Benchmarking) introduces MLPerf and standardized evaluation. Most ML courses skip this material entirely.

Part IV: Deployment handles on-device learning and embedded MLOps. This is edge computing territory, covering microcontrollers and resource-constrained environments.

Part V: Responsible AI addresses security, privacy, and sustainability. Chapter 15 (Sustainable AI) discusses training compute costs, which is increasingly relevant given the scale of modern models.

Part VI: Frontiers covers generative AI and robust systems. These chapters get updated more frequently than the foundational content.

Start with Chapter 1, skim Chapter 12 (Benchmarking) to understand evaluation methodology, then pick a path based on your interests. If you're coming from a pure ML background, Part III will probably fill the biggest gaps.
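To make the Part III material a bit more concrete, here's a minimal sketch of symmetric int8 quantization, one of the techniques those chapters cover. This is my own illustration, not code from the textbook or TinyTorch: the function names quantize_int8 and dequantize and the sample weights are made up, and real frameworks usually add per-channel scales and calibration on top.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to int8 codes."""
    max_abs = max(abs(v) for v in values)
    # Guard against an all-zero tensor, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map int8 codes back to approximate float values."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.82, -1.27, 0.003, 0.45, -0.6]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("int8 codes:", codes)
print("scale:", scale)
print("max round-trip error:", max_error)
```

The round-trip error is bounded by half the scale, which is the tradeoff in miniature: each value shrinks from 4 bytes to 1, and in exchange small weights (like 0.003 here) can collapse to zero.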

TinyTorch: Build the Framework Yourself

TinyTorch is the hands-on component. It's organized into 20 modules, each building on previous ones. The idea is that by the end, every import statement pulls from code you wrote.

The modules follow a progression:

Foundation (Modules 01-07): Tensor operations, activations, layers, losses, autograd, optimizers, training loops. This is roughly weeks 1-4 at a 4-6 hour/week pace. Module 05 (Autograd) is the inflection point where things click together. Before that, you're building isolated components. After, they start working as a system.

Architecture (Modules 08-13): DataLoader, convolutions, tokenization, embeddings, attention, transformers. This is where you build CNN and transformer architectures using your foundation code.

Optimization (Modules 14-19): Profiling, quantization, compression, acceleration, benchmarking. These modules connect to Part III of the textbook.

Capstone (Module 20): Integration project where you train a complete model on CIFAR-10 or similar.
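If you're wondering what Module 05 (Autograd) roughly involves, here's a scalar-only sketch of reverse-mode automatic differentiation. It's an assumption-laden toy, not TinyTorch's actual design: the Value class and its attributes are hypothetical names, and the real module works on tensors rather than single floats.

```python
class Value:
    """A scalar that remembers how it was computed so gradients can flow back."""

    def __init__(self, data, parents=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents
        self._backward = lambda: None  # leaf nodes have nothing to propagate

    def __add__(self, other):
        out = Value(self.data + other.data, (self, other))

        def _backward():
            # d(a + b)/da = 1 and d(a + b)/db = 1
            self.grad += out.grad
            other.grad += out.grad

        out._backward = _backward
        return out

    def __mul__(self, other):
        out = Value(self.data * other.data, (self, other))

        def _backward():
            # d(a * b)/da = b and d(a * b)/db = a
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward = _backward
        return out

    def backward(self):
        # Topologically sort the graph so each node's gradient is complete
        # before it is pushed on to its parents.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            node._backward()


x = Value(3.0)
y = x * x + x  # x feeds into the result three times
y.backward()
print(y.data, x.grad)  # prints 12.0 7.0
```

The `+=` in each `_backward` is the part that matters most. Because x is used more than once, its gradient has to accumulate contributions from every use (2x + 1 = 7 at x = 3); assigning with `=` instead would silently drop some of them.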

To start a module:

```bash
tito module start 01_tensor
```

This opens the development notebook. Each module has an ABOUT.md file explaining concepts and a README.md with implementation guidance.

The modules are designed so that incomplete implementations don't break later modules. Module 03 (Layers) works even before you've built autograd. Once you finish Module 05, gradient computation activates automatically.

Study Path: 8-Week Intensive

Here's a realistic schedule if you're treating this like a self-paced course:

Weeks 1-2: Read Chapters 1-2, complete Modules 01-03 (Tensor, Activations, Layers). At this point you can build forward passes but nothing trains yet.

Weeks 3-4: Read Chapter 4 (AI Workflow), complete Modules 04-07 (Losses, Autograd, Optimizers, Training). You'll unlock the first milestone: training a perceptron with your own code.

Weeks 5-6: Read Chapters 7-10, complete Modules 08-09 (DataLoader, Spatial). You're now working with CNNs.

Weeks 7-8: Modules 12-13 (Attention, Transformers) or Modules 14-16 (Optimization track), depending on interest.

The TinyTorch paper estimates 60-80 hours total. That's optimistic if you're also reading the textbook deeply, but reasonable if you're focused primarily on implementation.
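As a quick sanity check on pacing, you can turn that estimate into a weekly load. The sketch below assumes every module takes an equal share of the 60-80 hours, which almost certainly isn't true in practice, so treat the result as a rough planning number only. The constant and function names are mine.

```python
TOTAL_HOURS = (60, 80)    # low and high ends of the quoted estimate
TOTAL_MODULES = 20
FOUNDATION_MODULES = 7    # Modules 01-07
FOUNDATION_WEEKS = 4      # Weeks 1-4 of the schedule above


def hours_per_week(total_hours, modules, weeks):
    """Weekly load if every module took the same share of the total."""
    per_module = total_hours / TOTAL_MODULES
    return per_module * modules / weeks


low, high = (hours_per_week(h, FOUNDATION_MODULES, FOUNDATION_WEEKS) for h in TOTAL_HOURS)
print(f"Foundation modules: {low:.2f}-{high:.2f} hours/week")
# prints: Foundation modules: 5.25-7.00 hours/week
```

That lands a little above the 4-6 hours/week pace mentioned for the foundation modules, so under this even-split assumption either the early modules are lighter than average or the first four weeks will feel tight.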

I should mention: TinyTorch is still being refined. The documentation notes it's functional but "expect rough edges." I'd verify setup works before committing to the full curriculum.

Running Milestones

TinyTorch has a milestone system where you recreate historical ML achievements using your implementations. After completing Modules 01-03, you can run:

```bash
tito milestone run 01
```

This runs the 1957 Perceptron milestone. The output shows which of your modules are being used:

```text
Starting Milestone 01...
Assembling perceptron with YOUR TinyTorch modules...
* Linear layer: 2 -> 1 (YOUR Module 03!)
* Activation: Sigmoid (YOUR Module 02!)
```

Later milestones include the XOR Crisis (1969), MLP (1986), CNN Revolution (1998), and Transformer Era (2017). Module 19 benchmarks against MLPerf.
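For a sense of what the first milestone assembles, here's a plain-Python sketch of a 2 -> 1 linear layer followed by a sigmoid, trained on the AND truth table. This is not the milestone's code and doesn't use TinyTorch's API; the dataset, learning rate, and epoch count are assumptions I picked so the example converges.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, inputs):
    """Linear layer (2 -> 1) followed by a sigmoid activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)


# Truth table for logical AND, which is linearly separable.
DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 0.0), ((1.0, 0.0), 0.0), ((1.0, 1.0), 1.0)]

weights, bias = [0.0, 0.0], 0.0
learning_rate = 0.5  # assumed value, not tuned

for _ in range(2000):
    for inputs, target in DATA:
        error = predict(weights, bias, inputs) - target
        # For binary cross-entropy, the gradient w.r.t. the pre-activation is (p - y).
        weights = [w - learning_rate * error * x for w, x in zip(weights, inputs)]
        bias -= learning_rate * error

for inputs, target in DATA:
    print(inputs, round(predict(weights, bias, inputs), 3), "target:", target)
```

Swap the targets for XOR (0, 1, 1, 0) and no amount of training will fit all four points, because a single linear layer can only draw one straight decision boundary. That limitation is what the XOR Crisis milestone is about.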

Hardware Labs

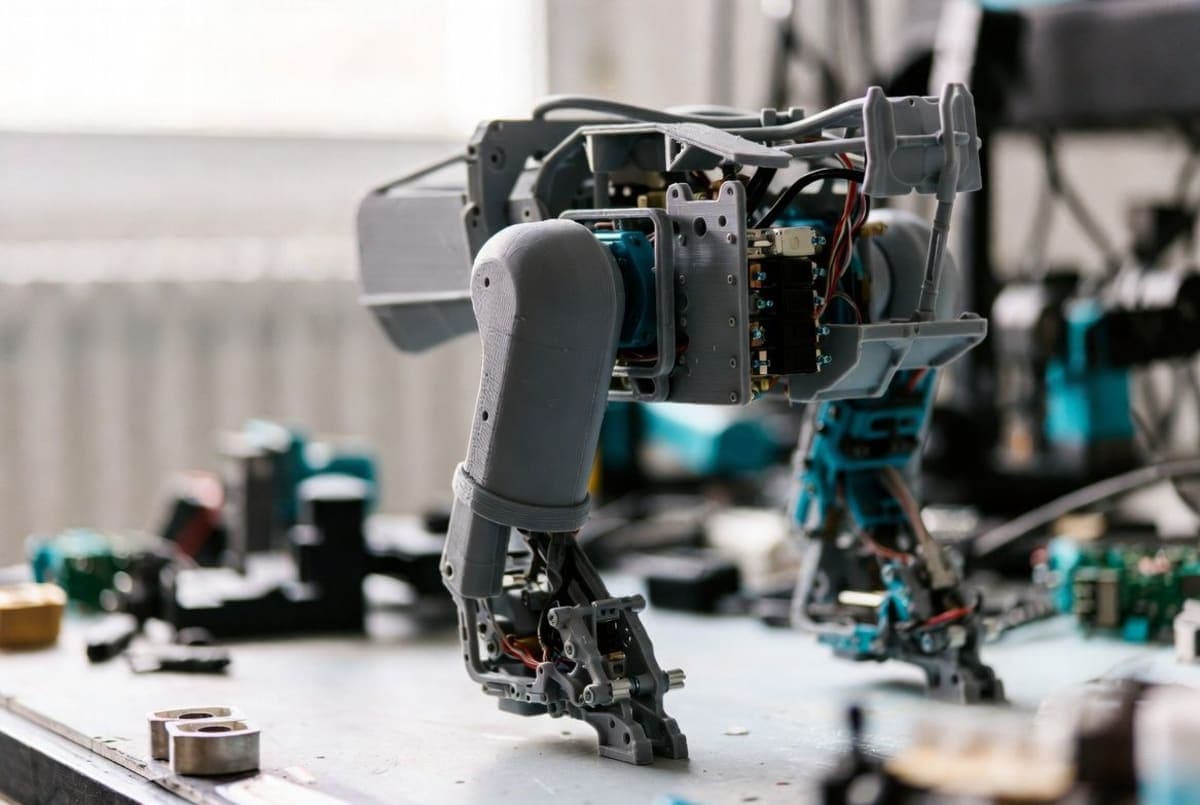

The ecosystem includes hardware kits for edge deployment. These are optional but connect to Part IV of the textbook.

Supported platforms include Arduino Nano 33 BLE Sense, Raspberry Pi, and SparkFun Edge. The labs have you deploy actual models to these devices, which makes the deployment chapters more concrete.

I haven't gone through the hardware labs myself, so I can't speak to the experience. The documentation points to mlsysbook.org/kits for setup instructions.

Troubleshooting

Module tests fail unexpectedly: Run tito test <module_name> to isolate which module is breaking. The tests use pytest under the hood, so you can add --verbose for detailed output.

"tito: command not found" after setup: The virtual environment needs activation. Run source .venv/bin/activate from the tinytorch directory.

Autograd gradients don't match expected values: The ABOUT.md for Module 05 includes test cases. Common issues are forgetting to handle gradient accumulation or incorrectly implementing the backward pass for matmul.

PDF/EPUB download returns 403: The direct download URLs occasionally change. Check the GitHub README for current links.
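For the autograd mismatch case above, a numerical gradient check is a handy way to find out whether your matmul backward pass is the culprit. The sketch below uses nested lists and hypothetical helper names (matmul_backward, numerical_grad); it isn't part of TinyTorch's test suite, and you'd adapt it to your own Tensor class.

```python
def matmul(a, b):
    """Multiply two matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(m):
    return [list(row) for row in zip(*m)]


def matmul_backward(a, b, grad_out):
    """Analytic gradients of C = A @ B given dL/dC."""
    grad_a = matmul(grad_out, transpose(b))  # dL/dA = dL/dC @ B^T
    grad_b = matmul(transpose(a), grad_out)  # dL/dB = A^T @ dL/dC
    return grad_a, grad_b


def loss(a, b):
    """Scalar loss: the sum of every entry of A @ B."""
    return sum(sum(row) for row in matmul(a, b))


def numerical_grad(a, b, eps=1e-6):
    """Central-difference estimate of dL/dA, one entry at a time."""
    grad = [[0.0] * len(a[0]) for _ in a]
    for i in range(len(a)):
        for j in range(len(a[0])):
            original = a[i][j]
            a[i][j] = original + eps
            plus = loss(a, b)
            a[i][j] = original - eps
            minus = loss(a, b)
            a[i][j] = original  # restore before moving on
            grad[i][j] = (plus - minus) / (2 * eps)
    return grad


A = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]      # shape 2x3
B = [[0.5, -1.0], [2.0, 0.0], [-0.5, 1.5]]  # shape 3x2
ones = [[1.0, 1.0], [1.0, 1.0]]             # dL/dC for a sum loss

analytic, _ = matmul_backward(A, B, ones)
numeric = numerical_grad(A, B)
max_diff = max(abs(x - y) for ra, rn in zip(analytic, numeric) for x, y in zip(ra, rn))
print("max difference:", max_diff)
```

If the difference is tiny, the backward formula is fine and the bug is more likely in how gradients are accumulated across the graph. A large difference usually means a missing transpose or swapped operands.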

What's Next

Once you've completed the foundation modules, you have a few directions: continue through the TinyTorch curriculum toward transformers and optimization, explore the hardware labs if you have devices, or use the textbook as reference while building your own projects.

The textbook is scheduled for MIT Press publication in 2026. The current online version is actively maintained, with community contributions accepted via GitHub.

PRO TIPS

The tito checkpoint command tests groups of modules together. For example, tito checkpoint test 01 validates foundation modules, and tito checkpoint test 05 validates through autograd.

Each module's ABOUT.md has "Reflect" questions about systems tradeoffs (memory scaling, computational complexity). These connect module implementation to textbook concepts.
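I don't want to reproduce the actual Reflect questions, but this is the kind of back-of-envelope calculation they point toward: how parameter memory and compute grow with layer width. The function name linear_layer_cost and the float32 assumption (4 bytes per value) are mine, and the numbers ignore gradients and optimizer state.

```python
def linear_layer_cost(batch_size, in_features, out_features, bytes_per_value=4):
    """Back-of-envelope cost of y = x @ W + b for one forward pass."""
    params = in_features * out_features + out_features  # weights + bias
    macs = batch_size * in_features * out_features      # multiply-accumulates
    return {
        "parameters": params,
        "parameter_bytes": params * bytes_per_value,     # float32 by default
        "activation_bytes": batch_size * out_features * bytes_per_value,
        "multiply_accumulates": macs,
    }


for width in (256, 512, 1024):
    cost = linear_layer_cost(batch_size=32, in_features=width, out_features=width)
    print(width, cost)
```

Doubling the width roughly quadruples both the parameter memory and the multiply-accumulate count, while the output activations only double. That asymmetry is the sort of tradeoff the modules ask you to reason about.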

FAQ

Q: Do I need GPU access for TinyTorch? A: No. The implementations are CPU-only Python. Part of the point is understanding computational costs when you don't have optimized CUDA kernels hiding them.

Q: How does this compare to Stanford CS229 or fast.ai? A: Those focus on ML theory and model building. MLSysBook focuses on infrastructure: how frameworks work internally, deployment constraints, production considerations. They're complementary, not competing.

Q: Can I use this for academic credit? A: The course originated at Harvard as CS249r. Some universities may accept it as independent study material. There's an NBGrader integration planned for 2026 that would enable formal assessment.

Q: Is the content up to date? A: The GitHub repository shows active commits through late 2024. Foundational chapters (Parts I-III) are stable. Frontier chapters (Part VI, covering generative AI) get updated more frequently.

RESOURCES

- MLSysBook.ai: Online textbook with interactive features

- TinyTorch GitHub: Framework source and module notebooks

- Harvard CS249r Book Repo: Main textbook repository

- TensorFlow Blog Post: Overview mapping textbook concepts to TF ecosystem