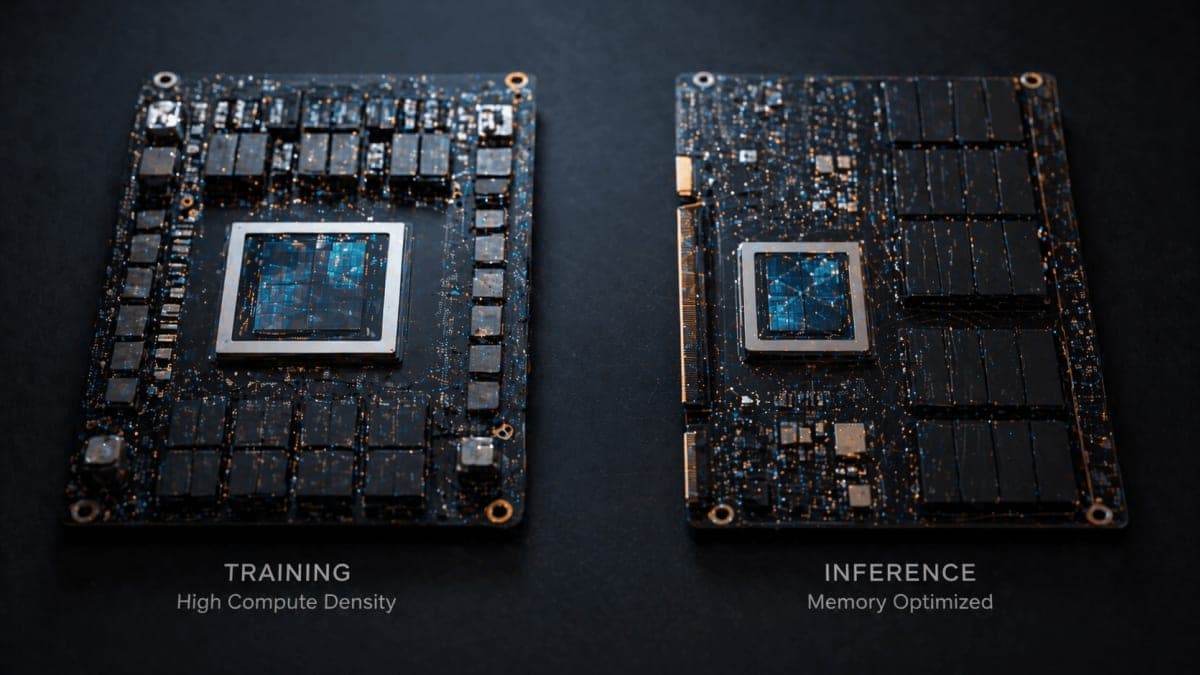

Google unveiled the eighth generation of its Tensor Processing Units at Cloud Next 2026 on Wednesday, splitting the lineup into two dedicated chips: TPU 8t for training and TPU 8i for inference. The move retires the Ironwood v7 family that went generally available earlier this year.

The 8t, codenamed Sunfish, scales to 9,600-chip superpods delivering 121 exaflops and two petabytes of shared high-bandwidth memory, per Google's numbers. The company claims 2.8x better price-performance than Ironwood and near-linear scaling up to a million chips in a single logical cluster. Those figures are self-reported. Independent benchmarks will follow.

The 8i (Zebrafish) targets cost-efficient serving. Each chip pairs 288 GB of HBM with 384 MB of on-chip SRAM, triple the prior generation, to keep active model weights closer to compute. Google claims 80% better performance per dollar. Interconnect bandwidth jumps to 19.2 Tb/s.

Broadcom designs the 8t while MediaTek handles the 8i. Both chips integrate tightly with Google's custom Axion Arm-based CPUs. The architectural split matches a broader shift as inference workloads, driven by agent deployments, start to dwarf training compute. Anthropic committed to up to one million TPU chips last October. Meta signed a multi-billion-dollar capacity deal shortly after.

Google didn't disclose pricing or a hard availability date beyond saying systems go general availability later this year. Nvidia GPUs stay on the Google Cloud menu for customers who want them.

Bottom Line

Google says TPU 8t superpods hit 121 exaflops across 9,600 chips, with independent benchmarks still pending.

Quick Facts

- TPU 8t (Sunfish): 2.8x better price-performance vs Ironwood, company-reported

- TPU 8i (Zebrafish): 80% better performance per dollar, company-reported

- 8t superpod: 9,600 chips, 121 exaflops, 2 PB shared HBM

- 8i: 288 GB HBM, 384 MB on-chip SRAM (3x prior generation)

- Designers: Broadcom (8t) and MediaTek (8i)