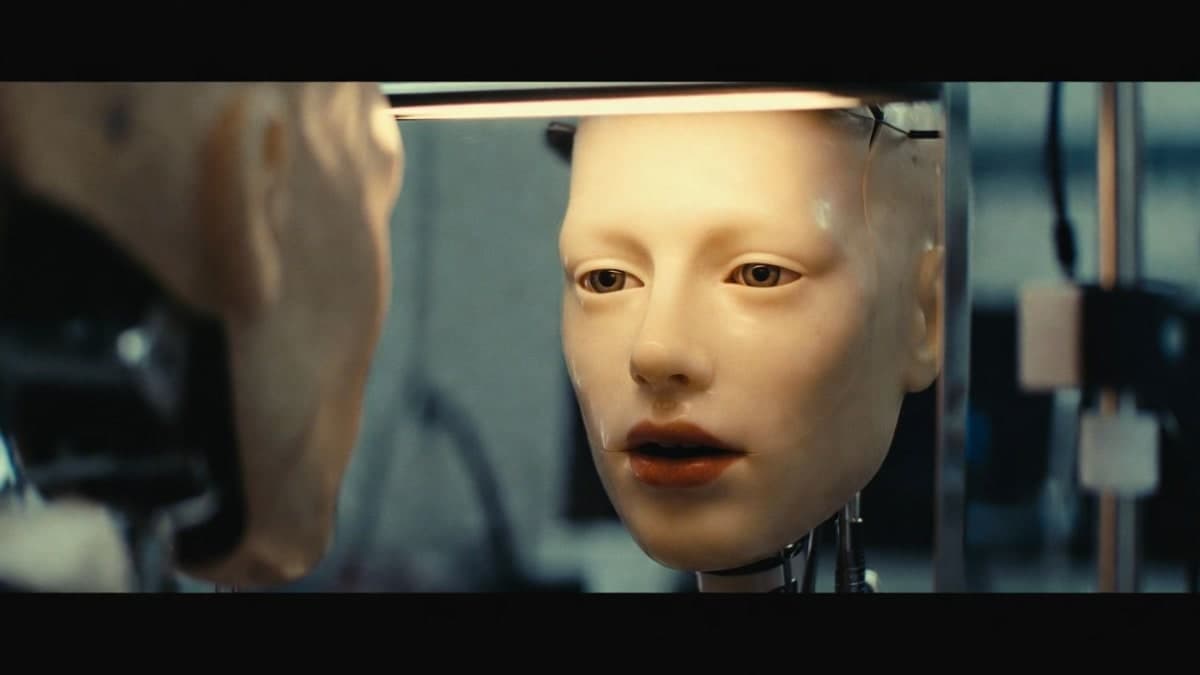

Researchers at Columbia Engineering have built a robot that can lip sync speech and singing without being explicitly programmed how to move its mouth. The robot, called Emo, learned the skill by first practicing random facial expressions in front of a mirror, then watching hours of YouTube videos of people talking. The work was published in Science Robotics on January 14, 2026.

The problem nobody talks about

While companies like Boston Dynamics, Tesla, and Figure AI pour billions into making robots walk and grasp objects, most humanoid faces remain stuck somewhere between a department store mannequin and a haunted doll. And that matters more than the industry seems to realize.

Nearly half of human attention during conversation goes to watching lip movements. We're incredibly sensitive to mismatches between what we hear and what we see. Even slight errors trigger the so-called uncanny valley, that visceral discomfort when something looks almost human but not quite right. Japanese roboticist Masahiro Mori identified this phenomenon back in 1970, and decades later, robotics still hasn't cracked it.

The Columbia Engineering team, led by Hod Lipson and PhD student Yuhang Hu, took a fundamentally different approach from most. Instead of hard-coding rules for how the mouth should move for each sound, they let the robot figure it out through observation.

How Emo taught itself

The training happened in two phases. First, the researchers placed Emo in front of a mirror and had it generate thousands of random facial expressions while watching itself. Over time, the robot built what the team calls a "vision-to-action" model, essentially learning which motor activations produce which facial appearances.
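The mirror phase can be sketched as a toy in Python. This is an illustration only, not the team's model: the three-motor face, the `mirror_view` function, and the nearest-neighbour lookup are all assumptions standing in for the real 26-motor hardware, its cameras, and whatever learned model the researchers actually trained.

```python
import random

random.seed(0)

N_MOTORS = 3  # toy size chosen for illustration; the article's Emo has 26


def mirror_view(motors):
    """Hypothetical stand-in for the camera and face tracker:
    what the face looks like for a given set of motor commands."""
    jaw, left, right = motors
    return (
        jaw,                   # mouth opening
        0.5 * (left + right),  # mouth width
        left - right,          # left/right asymmetry
    )


# "Motor babbling": try random commands and remember what each one looked like.
memory = []
for _ in range(5000):
    motors = tuple(random.random() for _ in range(N_MOTORS))
    memory.append((mirror_view(motors), motors))


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length tuples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def motors_for(target_look):
    """Vision-to-action lookup: return the remembered motor commands
    whose observed look is closest to the target look."""
    _look, motors = min(memory, key=lambda pair: sq_dist(pair[0], target_look))
    return motors


# Ask for a face the robot never produced exactly during babbling.
target = mirror_view((0.5, 0.5, 0.5))
chosen = motors_for(target)
achieved = mirror_view(chosen)
print("chosen motors:", chosen)
print("squared error in appearance:", sq_dist(achieved, target))
```

A lookup table like this only works for a tiny face. With 26 motors the space of expressions is far too large to memorize, which is presumably why a learned model that generalizes between observed examples is needed.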

Then came the YouTube binge. Emo watched hours of videos showing people speaking and singing in multiple languages. The AI learned to map audio waveforms to mouth shapes, bypassing language entirely. No translation required. The system analyzed the raw relationship between sounds and lip positions.
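To make "audio straight to mouth shape" concrete, here is a deliberately crude Python sketch. It assumes a single audio feature (per-frame loudness), a single mouth parameter (lip opening), invented training labels, and a straight-line fit. The actual system learns a much richer mapping from video; the point here is only that no phoneme or language step sits between the sound and the lips.

```python
import math

FRAME = 400    # hypothetical frame size: 25 ms of audio at 16 kHz
RATE = 16000   # hypothetical sample rate


def frame_rms(samples, frame=FRAME):
    """Split a waveform into fixed-size frames and return each frame's RMS loudness."""
    return [
        math.sqrt(sum(s * s for s in samples[i:i + frame]) / frame)
        for i in range(0, len(samples) - frame + 1, frame)
    ]


def fit_line(xs, ys):
    """Ordinary least squares for y = a * x + b."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    var = sum((x - mx) ** 2 for x in xs)
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / var
    return a, my - a * mx


def tone(amp):
    """One frame of a 220 Hz sine wave at the given amplitude."""
    return [amp * math.sin(2 * math.pi * 220 * t / RATE) for t in range(FRAME)]


# Toy "video": ten frames of a tone that gets louder, paired with
# invented lip-opening labels (louder sound, wider mouth).
amps = [0.1 * k for k in range(1, 11)]
audio = [sample for amp in amps for sample in tone(amp)]
lip_opening = [0.8 * amp for amp in amps]

# Learn the sound-to-lips mapping directly from the paired examples.
loudness = frame_rms(audio)
a, b = fit_line(loudness, lip_opening)


def predict_opening(frame_samples):
    """Predict lip opening for one new audio frame."""
    return a * frame_rms(frame_samples)[0] + b


print(predict_opening(tone(0.55)))
```

Because the fit never sees words or phonemes, nothing in it is tied to one language, which is the property the article attributes to Emo's approach.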

The hardware itself represents a significant upgrade from the lab's earlier platform, Eva, which had only 10 actuators. Emo packs 26 motors beneath flexible silicone skin, and the motors act on the skin through directly attached magnets that deform its surface. A high-resolution camera sits embedded in the pupil of each eye, enabling real-time visual perception.

What it can actually do

The robot demonstrated lip syncing across multiple languages, including French, Arabic, and Chinese, none of which it was specifically trained on. It even performed a track from an AI-generated album called "hello world_," handling the rhythm changes and stretched vowels that make singing particularly difficult.

But the researchers are upfront about limitations. Hard consonants like "B" remain problematic, as do puckered sounds like "W." The lip motion is far from perfect. Whether these specific difficulties stem from hardware constraints or training data gaps isn't entirely clear from what the team has published.

The research paper shows the method outperforming five existing approaches in matching robot mouth movements to reference videos. Tests across 11 non-English languages with different phonetic structures showed the framework could generalize without language-specific training. Those are impressive results, but benchmark comparisons in robotics papers deserve some skepticism, since test conditions vary widely from one study to the next.

A missing link?

Lipson frames facial expression as robotics' "missing link," arguing that the field's obsession with locomotion and manipulation has left an entire communication channel untapped. There's something to this. Current humanoid efforts from Tesla, Boston Dynamics, and others focus overwhelmingly on legs and hands. Faces remain an afterthought, if they're considered at all.

The vision the team is selling: combine this adaptive lip sync with large language models like ChatGPT or Gemini, and you get robots capable of genuine emotional connection. As Hu put it, the longer the context window of a conversation, the more context-sensitive the facial gestures become.

That's speculative, of course. But the underlying technical achievement is real. A robot that learns to coordinate its face with speech by observation rather than programming represents a shift in approach that could matter as humanoids move into eldercare, education, and other settings where human connection isn't optional.

The researchers acknowledge the obvious concerns. Creating machines that can emotionally engage with humans raises questions they don't have answers for. Lipson's position is cautious optimism: go slowly, be careful, reap benefits while minimizing risks. Whether the industry will actually exercise that restraint is another question entirely.

Funding came from the NSF AI Institute for Dynamical Systems and a gift from Amazon to Columbia's AI institute. The research data is available on Dryad.