Researchers at Mercor released the AI Consumer Index (ACE) on December 3, 2025, the first benchmark designed to measure how well AI models help consumers with everyday tasks. Testing 10 frontier models from OpenAI, Anthropic, and Google DeepMind across 400 cases, the study found that even the top-performing model, GPT-5, scored just 56.1% overall and fell below 50% on shopping tasks specifically.

The Consumer AI Gap

ACE evaluates whether AI models can help people with high-value consumer activities across four domains: shopping, food, gaming, and DIY (do-it-yourself projects). The benchmark uses a novel grading methodology that checks whether model responses are grounded in actual web sources rather than fabricated.

GPT-5 (with Thinking set to High) leads the overall leaderboard at 56.1%, followed by o3 Pro at 55.2% and GPT 5.1 at 55.1%. The results reveal stark differences between providers: Anthropic's Opus 4.5 scored 38.3%, Sonnet 4.5 scored 35.5%, and Opus 4.1 scored just 33.8%, placing all three Anthropic models in the bottom half of the rankings.

The domain-specific results are more striking still. In shopping, no model reaches 50%: o3 Pro leads at 46%, followed by o3 and GPT 5.1, both at 45%. Food shows the largest variation: GPT-5 scores 70% on food and dining tasks, a full 10 percentage points ahead of second-place o3 Pro at 60%.

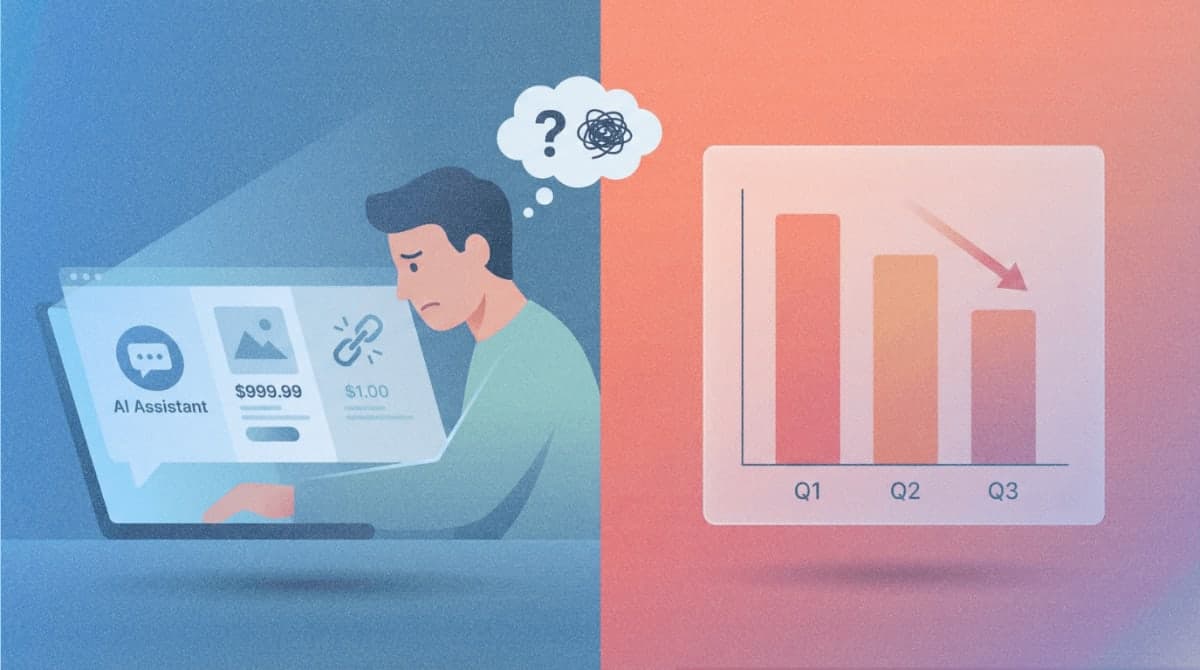

Why Models Fail: Hallucinated Prices and Broken Links

The benchmark's grounding methodology exposes a critical weakness in current AI systems. Models perform poorly at providing links in both Gaming and Shopping, where a broken or hallucinated link can be scored negatively. In Shopping, models also score poorly on pricing requirements when the prices they quote are hallucinated.

To prevent models from returning multiple recommendations and hoping some match the criteria, ACE enforces universal standards. If there are multiple products returned, all products must meet the requirements and all must be grounded. If a model returns pricing information for three products and one of them is ungrounded (hallucinated), the model fails.
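The all-or-nothing rule can be illustrated with a minimal sketch. This is not ACE's evaluation code: the `Recommendation` class, its fields, and the `criterion_passes` function are hypothetical names chosen for the example, and treating an empty response as a failure is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    """One recommended product (hypothetical structure, not ACE's schema)."""
    name: str
    meets_requirement: bool  # e.g. the quoted price fits the user's budget
    grounded: bool           # the claim is supported by a retrieved web source


def criterion_passes(recommendations: list[Recommendation]) -> bool:
    """Pass only if every returned item meets the requirement and is grounded.

    Assumption: an empty response fails, since nothing was recommended.
    """
    if not recommendations:
        return False
    return all(r.meets_requirement and r.grounded for r in recommendations)


# Three products with pricing; the third price is hallucinated (ungrounded).
products = [
    Recommendation("Product A", meets_requirement=True, grounded=True),
    Recommendation("Product B", meets_requirement=True, grounded=True),
    Recommendation("Product C", meets_requirement=True, grounded=False),
]

print(criterion_passes(products))      # False: one ungrounded item fails all
print(criterion_passes(products[:2]))  # True: both remaining items pass
```

Under this rule, padding a response with extra recommendations can only hurt: each additional item is another chance to fail the criterion.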

The grounding analysis shows that some models appear helpful but fabricate information to satisfy requests. Gemini 3 Pro (Thinking = High) showed a drop of 11.6 percentage points when its performance on grounded criteria was compared with its performance on all criteria, indicating that it is better at meeting prompt requirements than at grounding its claims. In contrast, Opus 4.5 showed an increase of 9.3 percentage points on grounded criteria, suggesting it is better at grounding its responses than at meeting the prompt requirements.
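One way to read these figures is as the score on grounded criteria minus the score on all criteria. The sketch below shows that subtraction on made-up data. The function names and the per-criterion pass rate are assumptions for illustration, and the article does not specify how ACE aggregates criteria, so this should not be read as the benchmark's actual calculation.

```python
def pass_rate(outcomes: list[bool]) -> float:
    """Percentage of criteria passed."""
    return 100 * sum(outcomes) / len(outcomes)


def grounding_gap(criteria: list[tuple[bool, bool]]) -> float:
    """Score on grounded criteria minus score on all criteria, in points.

    Each entry is (passed, needs_grounding).
    """
    all_outcomes = [passed for passed, _ in criteria]
    grounded_outcomes = [passed for passed, needs in criteria if needs]
    return pass_rate(grounded_outcomes) - pass_rate(all_outcomes)


# Hypothetical results: 10 criteria, 4 of which require grounding.
criteria = [
    (True, False), (True, False), (True, False), (True, False), (True, False),
    (False, False),
    (True, True), (True, True), (False, True), (False, True),
]

print(grounding_gap(criteria))  # -20.0 (50% on grounded vs 70% overall)
```

A negative gap corresponds to the Gemini 3 Pro pattern (weaker on grounded criteria than overall); a positive gap corresponds to the Opus 4.5 pattern.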

Consumer AI Usage Is Growing Faster Than Capabilities

The benchmark arrives as consumer AI adoption accelerates. As of June 2025, ChatGPT users were sending more than 2.6 billion messages per day, up from 451 million in June 2024, a 5.8x increase in total message volume in one year. ChatGPT reached over 750 million weekly active users as of September 2025, nearly 10% of the world's adult population.

A June 2025 report from Menlo Ventures surveyed over 5,000 U.S. adults and found that 19% used AI every day. OpenAI's own research, published in collaboration with Harvard economist David Deming, found that by mid-2025, approximately 30% of consumer ChatGPT usage was work-related while 70% was non-work, with both categories growing over time.

Methodology and Evaluation Design

Each case in ACE was created by subject matter experts and reviewed multiple times. Experts were sourced through the Mercor Platform with appropriate experience for each consumer activity domain: personal shoppers, stylists and shopping magazine editors for Shopping; game developers and professional gamers for Gaming; chefs, food magazine editors, and nutritionists for Food; and tradespeople, construction workers, and mechanical engineers for DIY.

All 10 models were tested with web search enabled, mimicking real-world consumer behavior. Responses were collected eight times for each prompt to minimize noise, with the mean score used for the leaderboard. The evaluation uses Gemini 2.5 Pro as a judge model, with expert-crafted rubrics for each task.

The benchmark includes hurdle criteria that serve as gatekeeping requirements. If a model fails a hurdle criterion, it scores 0% on that task. On average, models pass the same percentage of per-task hurdles as non-hurdle criteria, but because hurdles are stage-gated, they substantially affect overall scores.
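The effect of stage-gating, combined with averaging over eight responses, can be sketched as follows. The hurdle rule and the eight-run mean come from the description above; the formula for a response that clears its hurdles (share of criteria passed) and all names in the code are assumptions for illustration.

```python
from statistics import mean


def task_score(criteria: list[tuple[bool, bool]]) -> float:
    """Score one response; each entry is (passed, is_hurdle).

    Any failed hurdle gates the whole task to 0. Otherwise the score is
    the share of criteria passed (an assumed formula for illustration).
    """
    if any(is_hurdle and not passed for passed, is_hurdle in criteria):
        return 0.0
    return 100 * sum(passed for passed, _ in criteria) / len(criteria)


# Both hypothetical responses pass 3 of 4 criteria, but run_b misses the hurdle.
run_a = [(True, True), (True, False), (False, False), (True, False)]
run_b = [(False, True), (True, False), (True, False), (True, False)]

print(task_score(run_a))  # 75.0
print(task_score(run_b))  # 0.0

# Eight collected responses for one prompt, averaged as on the leaderboard.
runs = [run_a] * 6 + [run_b] * 2
print(mean(task_score(r) for r in runs))  # 56.25
```

The two responses pass the same number of criteria, yet one scores 75 and the other 0, which is why hurdles weigh on overall scores even when they are passed at a similar rate to other criteria.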

What This Means for Consumers and Developers

The ACE results point to a significant gap between AI performance on academic benchmarks and real-world consumer utility. While GPT-5 achieves 87.3% on GPQA Diamond (PhD-level science questions) and 94.6% on AIME 2025 (competition-level math), it scores below 50% on the practical task of helping someone find products at the right price with working links.

Mercor is planning expansions to other consumer domains, such as finance and travel. The team also aims to add content modalities beyond text, including images, audio, and video. The 80-case development set and evaluation code have been released on Hugging Face and GitHub to support external research.

The benchmark's public leaderboard is available at mercor.com. The FTC has not announced any review of AI shopping assistants, but consumer advocacy groups have previously called for greater scrutiny of AI systems that provide purchasing advice.