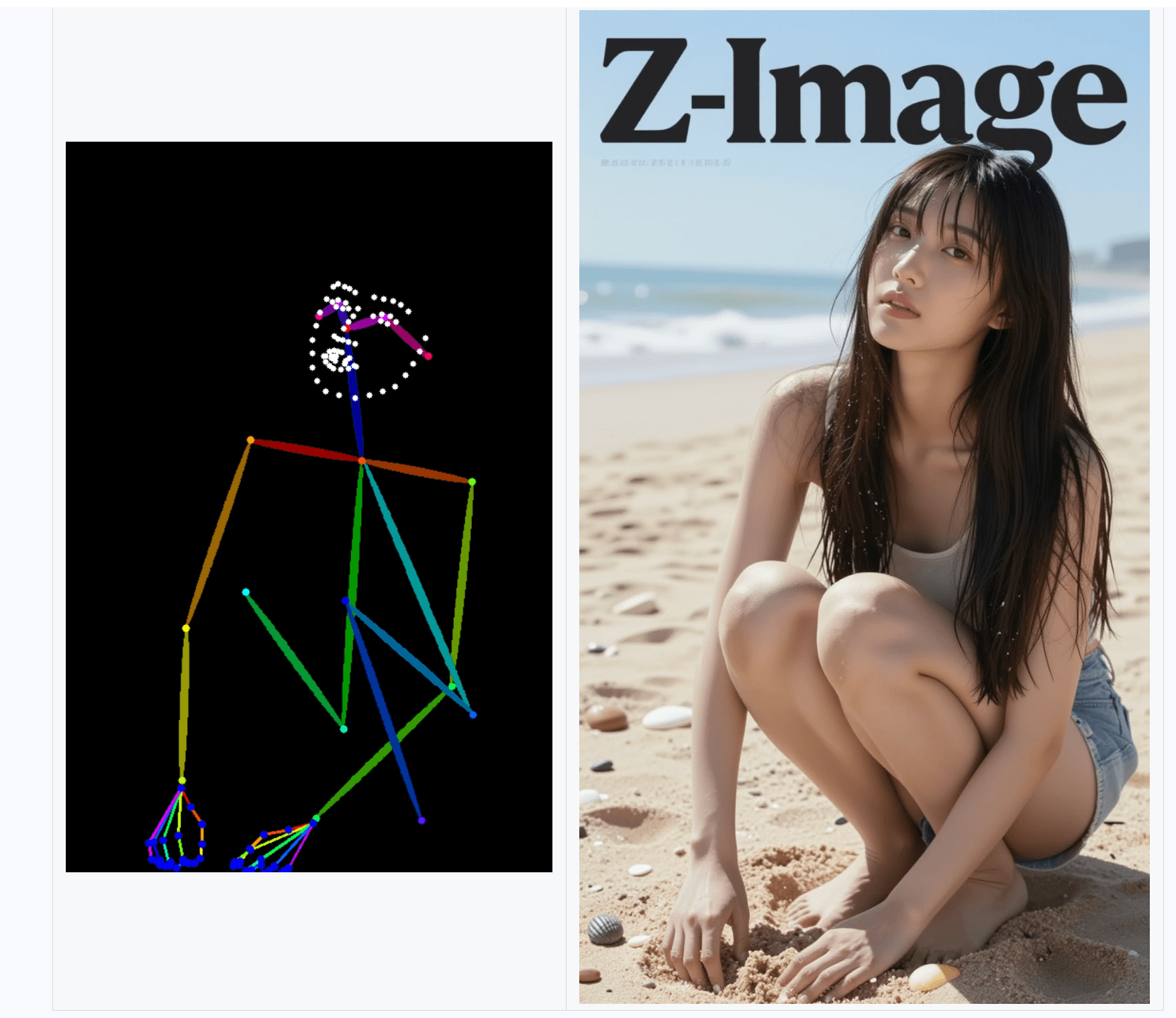

Alibaba's PAI team pushed a significant update to Z-Image-Turbo-Fun-Controlnet-Union 2.1 this week. The main fixes target two problems that plagued the previous release: masks that bled into surrounding areas during inpainting, and bright artifacts that appeared when cranking up control strength values.

The team traced both issues to training problems. Insufficient mask randomness caused the model to learn mask patterns rather than ignore them, leading to auto-fill behavior during removal tasks. Separately, overfitting between the control and tile distillation stages produced those visible bright spots at higher control_context_scale values. The retrained models address both.
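The announcement doesn't say how the team increased mask randomness. As a rough illustration of the idea, the sketch below builds an inpainting training mask from a random number of holes, each a randomly sized and placed rectangle or ellipse, so that no single mask pattern dominates what the model sees. The function name and every range in it are hypothetical choices, not the PAI team's training pipeline.

```python
import random


def random_inpaint_mask(height: int, width: int, rng: random.Random) -> list[list[int]]:
    """Return a binary mask (1 = region to inpaint) made of 1-4 random shapes.

    Illustrative only: shape types, counts, and size ranges are assumptions.
    """
    mask = [[0] * width for _ in range(height)]
    # Vary the number of holes per mask so the model cannot key on one layout.
    for _ in range(rng.randint(1, 4)):
        # Each shape's bounding box spans roughly 10%-50% of each dimension.
        h = rng.randint(max(1, height // 10), max(1, height // 2))
        w = rng.randint(max(1, width // 10), max(1, width // 2))
        top = rng.randint(0, height - h)
        left = rng.randint(0, width - w)
        if rng.random() < 0.5:
            # Filled rectangle covering the whole bounding box.
            for y in range(top, top + h):
                for x in range(left, left + w):
                    mask[y][x] = 1
        else:
            # Filled ellipse inscribed in the same bounding box.
            cy, cx = top + (h - 1) / 2, left + (w - 1) / 2
            ry, rx = h / 2, w / 2
            for y in range(top, top + h):
                for x in range(left, left + w):
                    if ((y - cy) / ry) ** 2 + ((x - cx) / rx) ** 2 <= 1.0:
                        mask[y][x] = 1
    return mask
```

Calling `random_inpaint_mask(64, 64, random.Random(seed))` with different seeds yields masks that differ in hole count, shape, size, and position, which is the kind of variety a model needs if it is to treat the mask as "where to work" rather than as a pattern to reproduce.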

A new Lite variant weighs in at 1.9GB, smaller than the full model, with control applied across 5 layers instead of 17. The tradeoff is weaker conditioning, but the team reports it produces more natural blending in some scenarios. The dataset restructuring is notable too: training images now span 512 to 1536 pixels rather than a fixed 512px, which should improve robustness across resolutions.
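The release gives only the resolution range, not how images were sampled within it. One common approach to multi-resolution training is bucketing: each image is snapped to the nearest of a fixed set of allowed sizes, and batches are drawn from those sizes. The sketch below assumes 64px steps between 512 and 1536; the step size and both function names are hypothetical, not taken from the team's code.

```python
import random

# Hypothetical bucket settings: side lengths from 512 to 1536 px in 64 px steps.
# (The previous fixed-512px setup corresponds to SIDES == [512].)
MIN_SIDE, MAX_SIDE, STEP = 512, 1536, 64
SIDES = list(range(MIN_SIDE, MAX_SIDE + 1, STEP))


def sample_training_size(rng: random.Random) -> tuple[int, int]:
    """Draw a (height, width) pair for one training batch."""
    return rng.choice(SIDES), rng.choice(SIDES)


def snap_to_bucket(height: int, width: int) -> tuple[int, int]:
    """Round an image's dimensions to the nearest allowed side lengths."""

    def snap(side: int) -> int:
        # Clamp into the supported range, then round to the nearest step.
        clamped = min(max(side, MIN_SIDE), MAX_SIDE)
        return MIN_SIDE + round((clamped - MIN_SIDE) / STEP) * STEP

    return snap(height), snap(width)
```

For example, a 1000 x 300 source image lands in the 1024 x 512 bucket, and anything larger than 1536px on a side is clamped to 1536.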

The 8-step distilled version remains the recommended choice for most users. It restores the fast inference that Z-Image-Turbo originally promised, which was lost when ControlNet was first bolted on.

The Bottom Line: Practical fixes for real workflow problems, plus a lighter option for users running consumer GPUs.

QUICK FACTS

- Lite model size: 1.9GB (control applied across 5 layers vs 17 in the full version)

- Training resolution range: 512-1536px (previously fixed at 512px)

- Recommended inference: 8 steps for distilled model

- Optimal control_context_scale range: 0.65-0.90

- License: Apache 2.0
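For anyone scripting runs, the recommended settings listed above can be encoded as a simple sanity check. This is a sketch: `ControlSettings` and its `warnings` method are hypothetical, the step check assumes you are running the 8-step distilled model, and how `control_context_scale` is actually passed in depends on the pipeline or node you use.

```python
from dataclasses import dataclass

# Values from the release notes; the class below is a hypothetical helper.
RECOMMENDED_STEPS = 8
SCALE_RANGE = (0.65, 0.90)


@dataclass
class ControlSettings:
    num_inference_steps: int = RECOMMENDED_STEPS
    control_context_scale: float = 0.75  # assumed default inside the range

    def warnings(self) -> list[str]:
        """List any settings that fall outside the recommended values."""
        issues = []
        lo, hi = SCALE_RANGE
        if not lo <= self.control_context_scale <= hi:
            issues.append(
                f"control_context_scale={self.control_context_scale} is outside "
                f"the recommended {lo:.2f}-{hi:.2f} range"
            )
        if self.num_inference_steps != RECOMMENDED_STEPS:
            issues.append(
                f"{self.num_inference_steps} steps; {RECOMMENDED_STEPS} is "
                f"recommended for the distilled model"
            )
        return issues
```

Returning a list of warnings instead of raising keeps out-of-range experiments possible, which matters here because higher control strength was exactly where the previous release misbehaved.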