Researchers at MIT's Improbable AI Lab have published a paper introducing SEAL (Self-Adapting Language Models), a framework that lets language models generate their own training data and then fine-tune themselves on it. The paper first dropped in June 2025, got an update in September, and the code is now public under the MIT license.

Here's the part that caught my attention: one of the authors, Ekin Akyürek, is now at OpenAI. He finished his MIT PhD in April 2025 and joined their research team. The other authors are still at MIT. Make of that what you will.

The student analogy they keep using

The core intuition behind SEAL is this: humans don't memorize textbooks verbatim. We take notes. We rephrase things. We make flashcards. Models, apparently, should do the same.

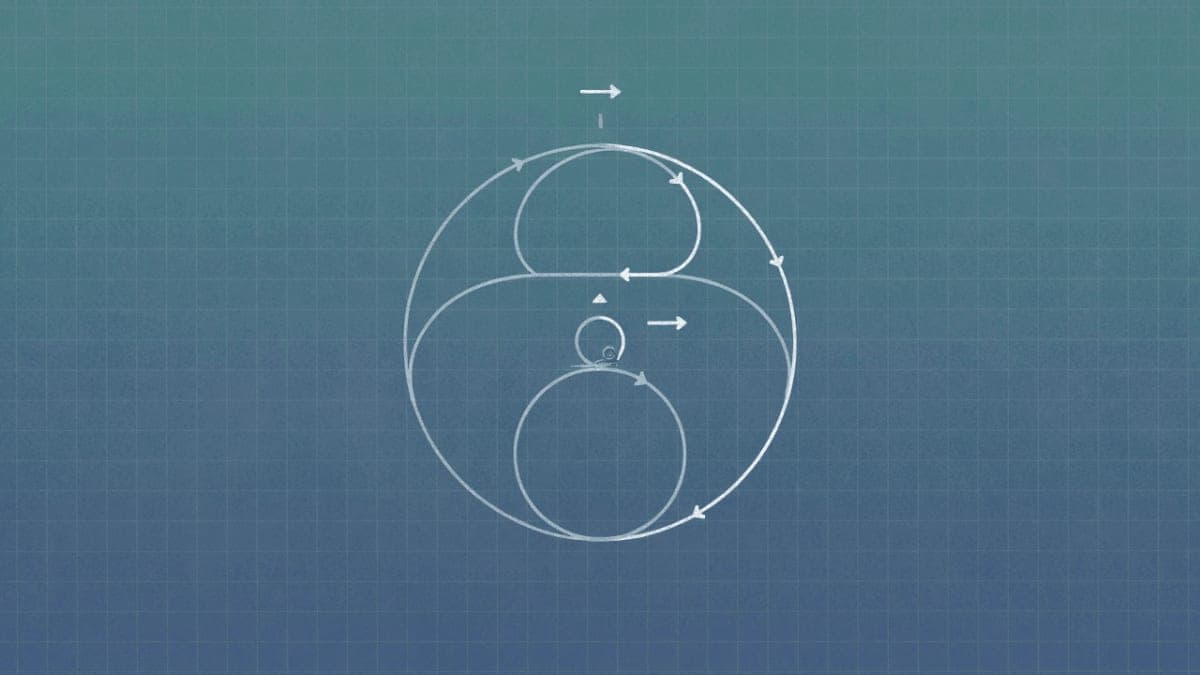

So instead of fine-tuning a model directly on raw text (which barely helps, as it turns out), SEAL trains the model to generate "self-edits": reformulated versions of the information that are supposedly easier to learn from. The model then fine-tunes itself on these self-edits using LoRA. An outer reinforcement learning loop rewards self-edits that actually improve downstream performance.

The nested structure is a bit much to follow at first. There's an inner loop (generate self-edit, apply fine-tuning) and an outer loop (evaluate, reward good self-edits, update the policy). They use ReST-EM from DeepMind for the outer optimization because PPO and GRPO were "unstable." I'll spare you the details.
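If the two loops are still fuzzy, here's a toy sketch of the shape of the thing. To be clear, this is not the authors' code. The "policy" is just a weight per self-edit style instead of a language model, the fine-tune-and-evaluate step is a coin flip, and the style names, quality numbers, and loop sizes are all made up for illustration. What it does keep is the ReST-EM pattern: sample self-edits, keep the ones that earned a reward, refit the policy on those.

```python
import random

# Hypothetical self-edit "styles". In SEAL the policy is the language model
# itself; here it is only a probability per style.
STYLES = ["verbatim", "implications", "qa_pairs"]

# Made-up chance that fine-tuning on a self-edit of each style improves the
# model. Illustrative numbers only, not taken from the paper.
HIDDEN_QUALITY = {"verbatim": 0.1, "implications": 0.6, "qa_pairs": 0.4}


def sample_self_edit(policy, rng):
    """Inner loop, step 1: the policy proposes a self-edit (here, a style)."""
    return rng.choices(STYLES, weights=[policy[s] for s in STYLES])[0]


def finetune_and_evaluate(style, rng):
    """Inner loop, step 2: stand-in for LoRA fine-tuning plus downstream eval.

    Returns a binary reward: 1 if the adapted model improved, else 0.
    """
    return 1 if rng.random() < HIDDEN_QUALITY[style] else 0


def rest_em_round(policy, contexts, samples_per_context, rng):
    """Outer loop: sample, keep rewarded self-edits, refit the policy."""
    kept = {s: 0 for s in STYLES}
    for _ in range(contexts):
        for _ in range(samples_per_context):
            style = sample_self_edit(policy, rng)
            if finetune_and_evaluate(style, rng) == 1:
                kept[style] += 1  # filter step: only rewarded samples survive
    total = sum(kept.values())
    if total == 0:
        return dict(policy)  # nothing was rewarded; leave the policy alone
    # Refit step: supervised update toward the kept samples, with add-one
    # smoothing so no style's weight collapses to exactly zero.
    return {s: (kept[s] + 1) / (total + len(STYLES)) for s in STYLES}


def train(rounds=2, contexts=50, samples_per_context=5, seed=0):
    """Run a few outer rounds from a uniform policy (sizes are arbitrary)."""
    rng = random.Random(seed)
    policy = {s: 1 / len(STYLES) for s in STYLES}
    for _ in range(rounds):
        policy = rest_em_round(policy, contexts, samples_per_context, rng)
    return policy


if __name__ == "__main__":
    print(train())
```

Run it and the policy drifts toward whichever style gets rewarded most often. Notice there's no gradient flowing through the reward: the outer loop only ever does "filter, then supervised fine-tune," which is presumably part of why it behaves better than PPO-style updates here.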

The numbers

They tested this on two tasks. The first: teaching a Qwen2.5-7B model to answer questions about SQuAD passages without having the passage in context. Just memorize the facts, basically.

Base model, no adaptation: 32.7% accuracy. Fine-tuning on the raw passage: 33.5%. So direct fine-tuning does almost nothing. Interesting.

Adding synthetic "implications" generated by the base model bumps it to 39.7%. Using GPT-4.1 to generate those implications gets you 46.3%. But SEAL, after two rounds of RL, hits 47.0%. In other words, a 7B model writing its own training data edges out training data written by GPT-4.1. That's the headline number they're pushing, and it's legitimately surprising.
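To put those figures side by side, here's a quick bit of arithmetic on the numbers quoted above. Nothing here is new data; the dictionary keys are my own labels.

```python
# Accuracy figures as quoted in the text above (percent).
results = {
    "base model": 32.7,
    "raw passage fine-tune": 33.5,
    "base-model implications": 39.7,
    "GPT-4.1 implications": 46.3,
    "SEAL": 47.0,
}


def gains_over_base(scores, baseline="base model"):
    """Return each method's gain over the baseline, in percentage points."""
    base = scores[baseline]
    return {
        name: round(acc - base, 1)
        for name, acc in scores.items()
        if name != baseline
    }


if __name__ == "__main__":
    for name, gain in gains_over_base(results).items():
        print(f"{name}: +{gain} points")
```

The gains over the base model come out to 0.8, 7.0, 13.6, and 14.3 points. So SEAL's margin over the GPT-4.1 data is 0.7 points: a win, but a narrow one.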

The second test was on a simplified subset of ARC-AGI, the abstract reasoning benchmark, this time with a much smaller Llama-3.2-1B-Instruct model. Here SEAL achieved a 72.5% success rate, compared to 20% for test-time training without the RL loop and 0% for plain in-context learning. The "Oracle" baseline with perfectly tuned settings hit 100%, so there's room to grow.

What they're not saying

The paper is honest about computational cost but buries it a bit. Each self-edit evaluation takes 30-45 seconds. They ran 750 iterations across two rounds. That's roughly 6 hours on two H100s just for the RL training. Per task. The actual fine-tuning is fast because they use LoRA, but the outer loop is brutal.
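You can sanity-check that with a back-of-the-envelope calculation. This assumes the evaluations run one after another, which is my simplification; the `parallel_workers` knob is hypothetical and only there to show how the estimate moves if some of the work overlaps.

```python
def outer_loop_hours(evaluations, seconds_per_eval, parallel_workers=1):
    """Estimate wall-clock hours for the outer loop.

    Assumes every evaluation takes the same time and that work splits evenly
    across workers. Both are simplifications.
    """
    return evaluations * seconds_per_eval / parallel_workers / 3600


if __name__ == "__main__":
    low = outer_loop_hours(750, 30)   # fastest quoted evaluation time
    high = outer_loop_hours(750, 45)  # slowest quoted evaluation time
    print(f"sequential: {low:.2f} to {high:.2f} hours")
```

Run strictly in sequence, 750 evaluations at 30 to 45 seconds each works out to between 6.25 and 9.375 hours, so "roughly 6 hours" sits at the optimistic end unless some of the evaluations overlap.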

And there's catastrophic forgetting. They admit this openly: as you apply more self-edits, performance on earlier tasks degrades. They tried reward shaping to mitigate it. Didn't fully work. The paper's future work section waves toward "replay, constrained updates, or representational superposition" as potential fixes.

The real question

Whether this is practically useful remains unclear. The scenario they're testing, memorizing facts from passages without retrieval, isn't how most production systems work. You'd just use RAG. But as a research direction toward models that can actually learn post-deployment, the results are suggestive.

The vision they sketch at the end is ambitious: models that decide mid-inference whether they need a self-edit, models that distill their chain-of-thought into permanent weights. We're not there. But a 7B model writing better training data for itself than GPT-4.1 writes for it is the kind of result that gets people's attention.

Updated code dropped in October. Expect more papers building on this by spring.