Shanghai Artificial Intelligence Laboratory released the Science Context Protocol (SCP), an open-source standard designed to connect AI agents with scientific instruments, databases, and computational tools across institutional boundaries. The technical paper, published December 30, 2025, positions SCP as a scientific extension of Anthropic's Model Context Protocol (MCP).

Where MCP falls short

MCP became the de facto standard for connecting AI models to external data sources after Anthropic open-sourced it in November 2024. The protocol works well for general tool interactions, but the Shanghai researchers argue it lacks critical features for scientific workflows: structured representation of complete experiment protocols, support for high-throughput experiments with many parallel runs, and coordination of multiple specialized AI agents.

The comparison is stark. MCP handles tool invocations as stateless, context-agnostic operations. Running a batch of 100 experiments requires external scheduling logic. SCP adds what the researchers call "a higher-level grammar for scientific experimentation," which sounds like marketing-speak until you see the GitHub repo and realize they've actually built the orchestration layer.
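To make the "external scheduling logic" point concrete, here is a minimal Python sketch of what a caller has to write when each tool invocation is stateless and unaware of the others. The `run_experiment` function is a hypothetical stub standing in for a single tool call; it is not part of MCP or SCP, and a real client would issue a network request at that point.

```python
from concurrent.futures import ThreadPoolExecutor


def run_experiment(params: dict) -> dict:
    """Hypothetical stand-in for one stateless tool invocation."""
    # A real client would send a tool-call request here; this stub just echoes.
    return {"run_id": params["run_id"], "status": "done"}


def run_batch(param_sets: list, max_workers: int = 8) -> list:
    """Caller-side scheduling: fan out independent calls, keep input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_experiment, param_sets))


batch = [{"run_id": i} for i in range(100)]
results = run_batch(batch)
print(len(results), results[0])  # 100 {'run_id': 0, 'status': 'done'}
```

Everything beyond the single call (batching, ordering, concurrency limits, and in practice retries) lives in the caller's code. That is the layer SCP claims to move into the protocol.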

Hub-and-spoke, not peer-to-peer

SCP replaces MCP's peer-to-peer communication with a centralized hub architecture. The SCP Hub acts as the "brain," serving as a global registry for tools, datasets, agents, and instruments while handling intent parsing, workflow generation, and task scheduling. Distributed SCP Servers sit at edge nodes, interfacing with local resources.
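The hub-and-spoke idea can be sketched in a few lines of Python. This is an illustration of the pattern only: the `Hub` and `EdgeServer` classes and their methods are hypothetical names chosen for this example, not the SCP API, and the sketch leaves out intent parsing, workflow generation, and scheduling.

```python
from dataclasses import dataclass, field


@dataclass
class EdgeServer:
    """Hypothetical edge node exposing local resources as named tools."""
    name: str
    tools: dict = field(default_factory=dict)  # tool name -> callable

    def call(self, tool: str, **kwargs):
        return self.tools[tool](**kwargs)


class Hub:
    """Hypothetical central registry that routes requests to edge servers."""

    def __init__(self):
        self._registry = {}  # tool name -> EdgeServer that provides it

    def register(self, server: EdgeServer) -> None:
        for tool in server.tools:
            self._registry[tool] = server

    def dispatch(self, tool: str, **kwargs):
        if tool not in self._registry:
            raise KeyError(f"no server registered for tool {tool!r}")
        return self._registry[tool].call(tool, **kwargs)


hub = Hub()
hub.register(EdgeServer("compute-node", {"add": lambda a, b: a + b}))
hub.register(EdgeServer("lab-node", {"echo_step": lambda step: f"queued: {step}"}))

print(hub.dispatch("add", a=2, b=3))              # 5
print(hub.dispatch("echo_step", step="pipette"))  # queued: pipette
```

The agent only ever talks to the hub; which edge server owns a given tool is a lookup in the central registry.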

The system generates multiple executable plans for a given research goal and presents the most promising options with rationales covering dependency structure, expected duration, experimental risk, and cost estimates. Whether this automated planning actually works in messy real-world lab environments remains an open question.
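As a rough illustration of ranking candidate plans on the criteria the paper lists, the Python sketch below scores each plan on duration, risk, and cost and keeps the best few. The weighted-sum scoring, the weights, and the sample plans are all assumptions made for this example; the article does not describe how SCP actually ranks plans.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    name: str
    duration_hours: float
    risk: float  # 0 (safe) to 1 (risky), illustrative scale
    cost: float  # arbitrary units


def score(plan: Plan, w_duration=1.0, w_risk=10.0, w_cost=0.1) -> float:
    """Weighted sum, lower is better. The weights are arbitrary assumptions."""
    return (w_duration * plan.duration_hours
            + w_risk * plan.risk
            + w_cost * plan.cost)


def rank(plans, top_k=2):
    """Return the top_k plans with the lowest score."""
    return sorted(plans, key=score)[:top_k]


# Made-up candidates, for illustration only.
candidates = [
    Plan("simulate-first", duration_hours=4.0, risk=0.1, cost=20.0),
    Plan("direct-wet-lab", duration_hours=2.0, risk=0.6, cost=80.0),
    Plan("hybrid", duration_hours=3.0, risk=0.3, cost=40.0),
]

for plan in rank(candidates):
    print(plan.name, round(score(plan), 2))
```

With these weights the slower but safer "simulate-first" plan ranks ahead of the faster wet-lab run. Change the weights and the order changes, which is why the rationales attached to each plan matter.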

Selected workflows get stored as structured JSON that serves as a contract between all participants. During execution, the hub monitors progress, validates results, and can trigger fallback strategies when anomalies occur, which matters most for multi-stage workflows combining simulations with physical experiments.
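A structured workflow of this kind might look something like the following Python sketch, which loads a JSON document, checks its dependency structure, and applies a declared fallback when a step reports an anomaly. The field names (`workflow_id`, `steps`, `depends_on`, `fallback`) are invented for illustration and are not taken from the SCP schema.

```python
import json

# Hypothetical workflow document; the schema is an assumption for this sketch.
workflow_json = """
{
  "workflow_id": "example-001",
  "steps": [
    {"id": "simulate", "depends_on": [], "fallback": null},
    {"id": "synthesize", "depends_on": ["simulate"], "fallback": "retry_synthesis"},
    {"id": "measure", "depends_on": ["synthesize"], "fallback": null}
  ]
}
"""


def validate(workflow: dict) -> None:
    """Check that every dependency names a step that appears earlier."""
    seen = set()
    for step in workflow["steps"]:
        missing = [d for d in step["depends_on"] if d not in seen]
        if missing:
            raise ValueError(f"step {step['id']!r} depends on unknown steps {missing}")
        seen.add(step["id"])


def execute(workflow: dict, run_step) -> list:
    """Run steps in order; on failure use the declared fallback, else halt."""
    log = []
    for step in workflow["steps"]:
        if run_step(step["id"]):
            log.append(f"{step['id']}: ok")
        elif step["fallback"]:
            log.append(f"{step['id']}: anomaly, fallback {step['fallback']}")
        else:
            log.append(f"{step['id']}: failed, halting")
            break
    return log


workflow = json.loads(workflow_json)
validate(workflow)
# Simulate an anomaly in the "synthesize" step.
log = execute(workflow, run_step=lambda step_id: step_id != "synthesize")
print("\n".join(log))
```

Because the document is plain JSON, every participant can validate the same contract before anything runs, and the fallback for a failing step is declared up front rather than improvised at run time.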

1,600 tools and counting

The team built their Intern-Discovery platform on SCP, offering over 1,600 interoperable tools. Biology dominates at 45.9%, followed by physics at 21.1% and chemistry at 11.6%. The remainder, roughly 21%, covers mechanics and materials science, mathematics, and computer science.

By function, computational tools lead at 39.1%, followed by databases at 33.8%. Lab operations account for just 7.7%, suggesting that wet lab integration is more aspirational than comprehensive at this stage.

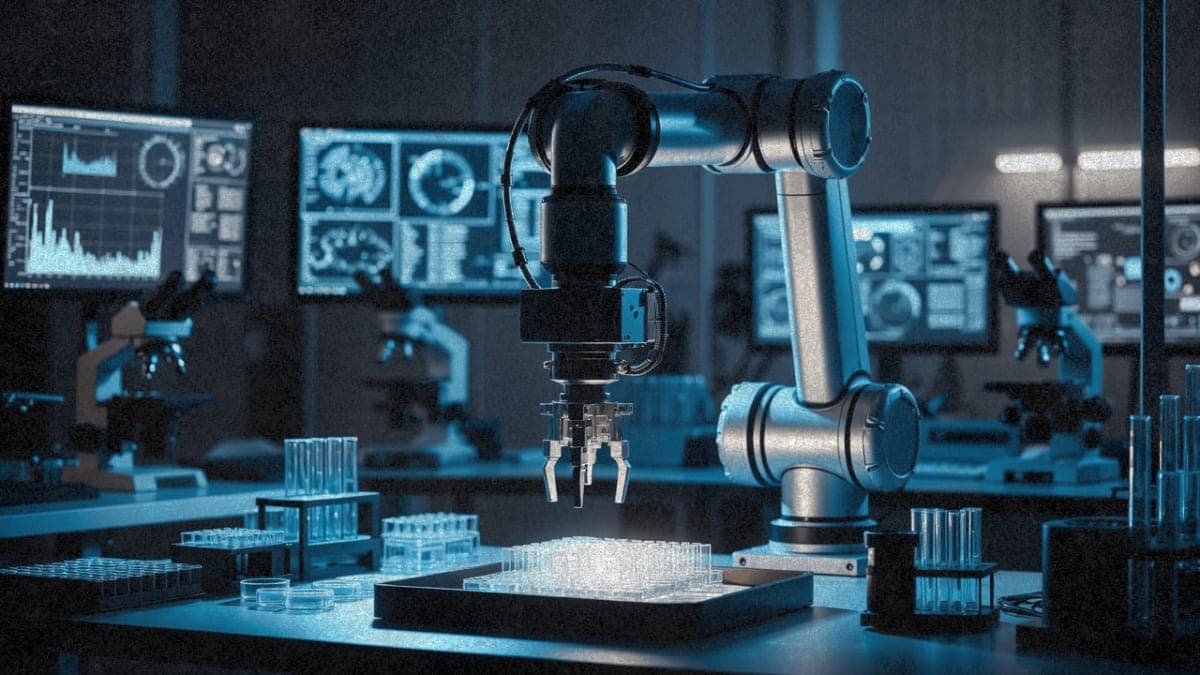

Tools range from protein structure prediction and molecular docking to automated pipetting instructions for lab robots. The protocol defines standardized device drivers for integrating instruments, though how widely equipment manufacturers have adopted them remains unclear.

The dry-wet integration promise

The case studies in the paper sound impressive. In one scenario, a scientist uploads a PDF containing a lab protocol. The system extracts the experimental steps, converts them to a machine-readable format, and runs the experiment on a robotic platform with validation and error handling.

Another demo shows AI-controlled drug screening: starting with 50 molecules, the system calculates drug-likeness scores and toxicity values, filters by defined criteria, prepares a protein structure for docking, and identifies two promising candidates. The entire process runs as an orchestrated workflow where various SCP servers collaborate on database queries, calculations, and structural analyses.
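The filtering stage of a screen like this reduces to a simple threshold check once the scores exist. The Python sketch below shows that step only. The molecule names, scores, and cutoffs are made-up placeholders, not values from the paper, and in a real pipeline the scores would come from cheminformatics tools rather than being hard-coded.

```python
from dataclasses import dataclass


@dataclass
class Molecule:
    name: str
    drug_likeness: float  # higher is better, placeholder scale 0 to 1
    toxicity: float       # lower is better, placeholder scale 0 to 1


def screen(molecules, min_drug_likeness=0.6, max_toxicity=0.3):
    """Keep molecules that pass both cutoffs (the thresholds are assumptions)."""
    return [
        m for m in molecules
        if m.drug_likeness >= min_drug_likeness and m.toxicity <= max_toxicity
    ]


# Made-up library for illustration only.
library = [
    Molecule("mol-A", drug_likeness=0.82, toxicity=0.10),
    Molecule("mol-B", drug_likeness=0.40, toxicity=0.05),
    Molecule("mol-C", drug_likeness=0.75, toxicity=0.55),
    Molecule("mol-D", drug_likeness=0.66, toxicity=0.20),
]

hits = screen(library)
print([m.name for m in hits])  # ['mol-A', 'mol-D']
```

In the workflow the article describes, the survivors of this stage would then be handed to the docking step, which this sketch does not attempt to model.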

The fluorescent protein engineering case study demonstrates what the researchers call "dry-wet integration," where computational design, automated experimentation, and iterative optimization happen within a single, reproducible pipeline. SCP provides the orchestration layer connecting these steps.

What's missing

The paper leaves several obvious concerns underexplored. Security and access control get mentioned, but the details are thin. Fine-grained authentication and authorization are promised, yet it isn't clear how they would work across institutional boundaries with varying data governance requirements.

The tool ecosystem skews heavily toward computational resources. Physical lab integration, the headline feature, represents a small fraction of current capabilities. And the case studies demonstrate potential rather than proven deployments.

The protocol is released under the Apache License 2.0. For researchers interested in testing, the team points to the SCP Square on their Intern-Discovery platform, where they host a ready-to-use SCP Hub with a public registration interface.