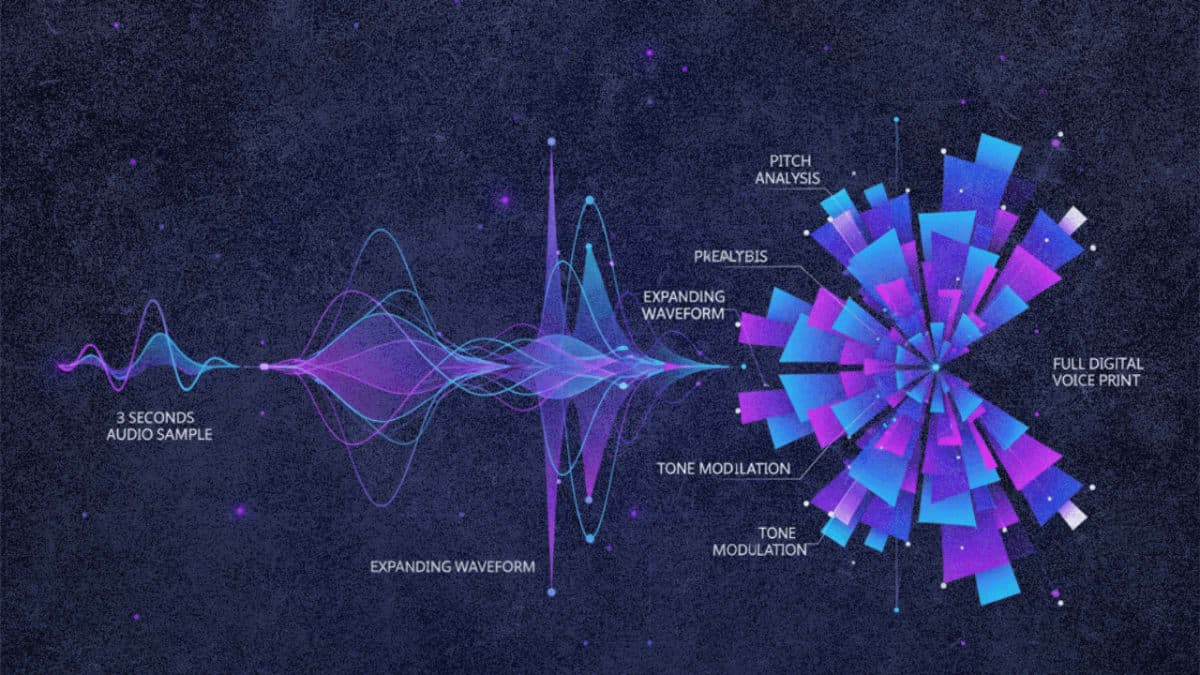

Alibaba's Qwen team dropped two new text-to-speech models today that take two distinct approaches to voice generation. Qwen3-TTS-VD-Flash (VoiceDesign) lets users describe a voice in plain language, and the model generates it from scratch. Qwen3-TTS-VC-Flash (VoiceClone) takes the opposite route: feed it three seconds of a speaker's voice and it reproduces that voice across ten languages.

The VoiceDesign model accepts prompts like "male, middle-aged, booming baritone with rapid-fire delivery." Qwen claims it outperforms OpenAI's gpt-4o-mini-tts on role-play benchmarks, though the company hasn't released a detailed methodology. The VoiceClone model, according to Qwen's own testing, achieves lower word error rates than ElevenLabs and MiniMax in multilingual evaluations. Independent verification is pending.
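For readers unfamiliar with the metric, word error rate (WER) is conventionally the number of word substitutions, deletions, and insertions needed to turn a transcript into the reference text, divided by the number of words in the reference. In TTS evaluations, the synthesized audio is typically transcribed by a speech recognizer and compared with the input text. The sketch below shows that standard calculation only; Qwen has not published its evaluation pipeline, so the function name `word_error_rate` and the simple lowercase, whitespace-based tokenization are assumptions made for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Standard WER: word-level edit distance divided by reference length.

    Illustrative only. Tokenization here is a plain lowercase whitespace
    split, which is an assumption and not Qwen's published method.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so
    # far (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # i deletions turn i reference words into an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(
                min(
                    prev[j] + 1,                      # deletion
                    curr[j - 1] + 1,                  # insertion
                    prev[j - 1] + substitution_cost,  # match or substitution
                )
            )
        prev = curr

    return prev[-1] / len(ref)


if __name__ == "__main__":
    # One dropped word out of four reference words.
    print(word_error_rate("the quick brown fox", "the quick fox"))  # 0.25
```

A lower score means the transcript of the generated speech is closer to the intended text. Scores from different vendors are only comparable when the same recognizer, test sentences, and text normalization are used, which is why the missing methodology matters.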

Both models are available now through Alibaba Cloud's API, with free demos on Hugging Face. This expands Qwen's existing TTS lineup, which already includes the Qwen3-TTS-Flash model with 49 preset voices. That model launched in late November and supports 10 languages plus 9 Chinese dialects.

The release lands as competition intensifies in commercial voice AI. ElevenLabs, OpenAI, and Google all offer voice cloning or customization, but few match Qwen's claimed 3-second sample requirement. The models can also handle animal sounds and extract voices from noisy recordings, per Alibaba.

The Bottom Line: Alibaba now offers voice creation from text descriptions and voice cloning from 3-second samples, both via the same API.

QUICK FACTS

- Voice cloning requires 3 seconds of source audio

- VoiceClone supports 10 languages (Chinese, English, Japanese, Spanish, and others)

- VoiceDesign creates voices from natural language descriptions

- Both available through Alibaba Cloud API

- Word error rate claims are company-reported, not independently verified