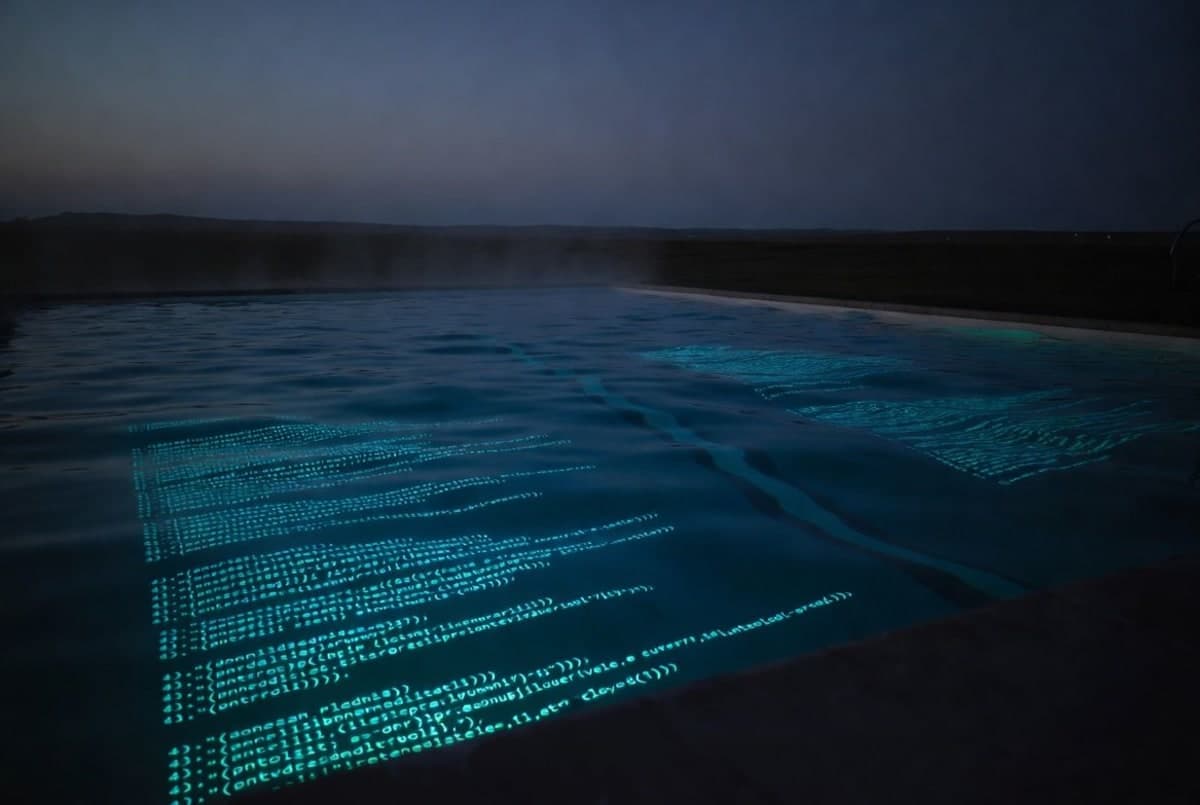

Poolside, the well-funded coding-AI startup that has spent most of its existence selling to defense and government buyers, opened public access to its Laguna model family this month. The release covers two models: Laguna M.1, a 225-billion-parameter Mixture-of-Experts flagship with 23B active parameters, and Laguna XS.2, a smaller 33B/3B open-weight sibling under Apache 2.0. Both showed up on OpenRouter and Hugging Face the same day.

Founded in April 2023 by ex-GitHub CTO Jason Warner, who oversaw the Copilot launch, and source{d} founder Eiso Kant, Poolside has raised north of $626 million, hitting a $3 billion valuation in late 2024 before Nvidia announced up to $1 billion more around a year later. Until now, almost none of that work has been visible outside customer deployments at places like RTX Corporation.

The smaller model is actually the interesting one

Look, M.1 is a fine model. Poolside's technical post says it scored 72.5% on SWE-bench Verified, 46.9% on SWE-bench Pro, and 40.7% on Terminal-Bench 2.0. Solid numbers. But the frontier on SWE-bench Verified currently sits closer to 88% (GPT-5.5) and 87% (Claude Opus 4.7), with Claude Sonnet 4.6 leading the dense-model category at 79.6%. M.1 finished pre-training at the end of last year, and frankly, you can tell.

XS.2 is where the story gets interesting. It's a 33B-total / 3B-active MoE that hits 68.2% on SWE-bench Verified and 44.5% on SWE-bench Pro. For a model that runs on a Mac with 36GB of RAM via Ollama, those numbers are genuinely impressive. On SWE-bench Pro it edges out Claude Haiku 4.5 (39.5%) and the dense Gemma 4 31B (35.7%), which activates roughly ten times as many parameters per token. It trails M.1 by only 2.4 points on SWE-bench Pro and 4.3 points on SWE-bench Verified, despite being roughly a seventh the size on paper.
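To make the "runs on a 36GB Mac" claim concrete, here is a rough back-of-envelope sketch of weight memory at different precisions. The parameter counts come from the figures above; the bit widths are illustrative assumptions, not the quantization Ollama actually ships, and the estimate ignores KV cache, activations, and runtime overhead. It also assumes all experts stay resident in memory, which is the usual case for MoE models even though only about 3B parameters are active per token.

```python
def weight_memory_gb(total_params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = total_params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Parameter counts as reported in the article.
XS2_TOTAL_B = 33.0
M1_TOTAL_B = 225.0

# Bit widths are assumptions chosen for illustration only.
for bits in (16, 8, 4):
    print(f"XS.2 at {bits}-bit: ~{weight_memory_gb(XS2_TOTAL_B, bits):.1f} GB of weights")

# Total-parameter ratio between the two models.
size_ratio = XS2_TOTAL_B / M1_TOTAL_B
print(f"XS.2 is about {size_ratio:.0%} of M.1's total parameter count")
```

Under these assumptions, full 16-bit weights would not fit in 36GB, so a local run on that hardware presumably relies on a quantized build.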

The Apache 2.0 license is the other thing. Weights are on Hugging Face, with day-one support for vLLM, Transformers, and NVIDIA's TRT-LLM. There's an NVFP4 variant ready for Blackwell. Poolside is also distributing through OpenRouter and Ollama. They're handing the keys over.

About those benchmarks

One thread worth pulling on: Poolside ran all of this on their own infrastructure using the Laude Institute's Harbor Framework with their own agent harness. They averaged three to seven runs depending on the benchmark, with sandboxed execution at 8 GB RAM / 2 CPUs (Terminal-Bench got 48 GB / 32 CPUs). They also "patched" some base task images and verifiers to deal with third-party dependency rate limits. That's defensible. It's also not nothing.

And the competitor numbers? Poolside grabbed the highest published scores for everyone else, which doesn't always reflect like-for-like agent harness conditions. The footnote acknowledges this. So when XS.2 "beats" Haiku 4.5 by five points on SWE-bench Pro, that's an interesting data point, not a settled verdict. I'd want independent third-party evaluations before getting too excited about specific rankings.
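One rough way to see why a five-point gap is not a settled verdict is to compare it with the sampling noise of a pass rate. The sketch below treats each score as an independent binomial estimate over `n_tasks` problems. That is a simplification: it ignores that both models face the same tasks and that the harnesses differ, which is a systematic effect no error bar captures. The task counts are hypothetical placeholders, not the actual size of SWE-bench Pro.

```python
import math


def pass_rate_stderr(pass_rate: float, n_tasks: int) -> float:
    """Binomial standard error of a pass rate measured over n_tasks problems."""
    return math.sqrt(pass_rate * (1.0 - pass_rate) / n_tasks)


def gap_in_stderrs(rate_a: float, rate_b: float, n_tasks: int) -> float:
    """Gap between two pass rates, in units of the gap's standard error.

    Assumes the two scores are independent samples (a simplification).
    """
    se_gap = math.sqrt(
        pass_rate_stderr(rate_a, n_tasks) ** 2
        + pass_rate_stderr(rate_b, n_tasks) ** 2
    )
    return (rate_a - rate_b) / se_gap


# Scores as reported in the article.
XS2_PRO = 0.445
HAIKU_PRO = 0.395

# Hypothetical task counts, for illustration only.
for n_tasks in (200, 500, 1000):
    z = gap_in_stderrs(XS2_PRO, HAIKU_PRO, n_tasks)
    print(f"n_tasks={n_tasks}: gap is {z:.2f} standard errors")
```

The smaller the task set, the fewer standard errors a five-point gap spans. Averaging several runs reduces run-to-run noise but does nothing about harness mismatch.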

Why now?

Poolside has been quiet for almost three years. They built a company valued at $12 billion largely on the promise of government and defense contracts, on-prem deployments, and air-gapped environments where Copilot cannot go. The investor pitch was always that regulated industries need a frontier-tier code model that never leaves the customer's perimeter.

Poolside's launch blog frames the open release as a contribution to the open-weight ecosystem, with the explicit line that "the West needs strong open-weight models." That is a fairly transparent nod to the competitive pressure from DeepSeek, Qwen, and the rest of the Chinese open-weight wave. The defense and public-sector work, the company says, continues alongside.

Whether the roughly 60 people in Poolside's Applied Research org can actually keep up with the open-weight competition is the real question. Both models are the output of that group, built with a custom training stack the company calls Titan, along with a Muon-optimizer implementation that reportedly cut training steps by 15% versus AdamW and an AutoMixer system that trained around 60 proxy models to learn the data mix.

What developers get

Both models are free on the Poolside API and OpenRouter for a limited time, with no token caps spelled out. M.1 weights are not openly available, but Poolside says it will share them with researchers and institutions on request. XS.2 weights are public, full stop.

The release also includes two product previews: pool, a terminal-based coding agent built as an Agent Client Protocol server (the same harness Poolside uses internally for RL training), and Shimmer, a cloud-based VM sandbox for iterating on apps. pool integrates with Zed and JetBrains automatically.

For a small team building locally and wanting an agent that does not phone home, XS.2 is probably the most interesting open release of the past few weeks. Whether M.1 finds an audience outside Poolside's existing defense customers is the harder question. There are a lot of 70%-on-SWE-bench-Verified models out there now, and most of them have better PR.

Poolside says a technical report is coming. Until then, we have a blog post, a model card, and benchmarks Poolside ran themselves. Make of that what you will.