SEO METADATA

Meta Title: Microsoft Unveils Rho-alpha, Its First AI Model for Robots

Meta Description: Microsoft Research announces Rho-alpha, a vision-language-action model that adds tactile sensing to control dual-arm robots via natural language commands.

URL Slug: microsoft-rho-alpha-robotics-model

Primary Keyword: Microsoft Rho-alpha

Secondary Keywords: physical AI, vision-language-action model, robotics AI, bimanual manipulation

Tags: ["Microsoft", "robotics", "Rho-alpha", "physical AI", "VLA model", "Phi", "tactile sensing"]

ARTICLE

Microsoft Unveils Rho-alpha, Its First Robotics AI Model

New VLA+ model adds tactile sensing to help robots handle physical objects

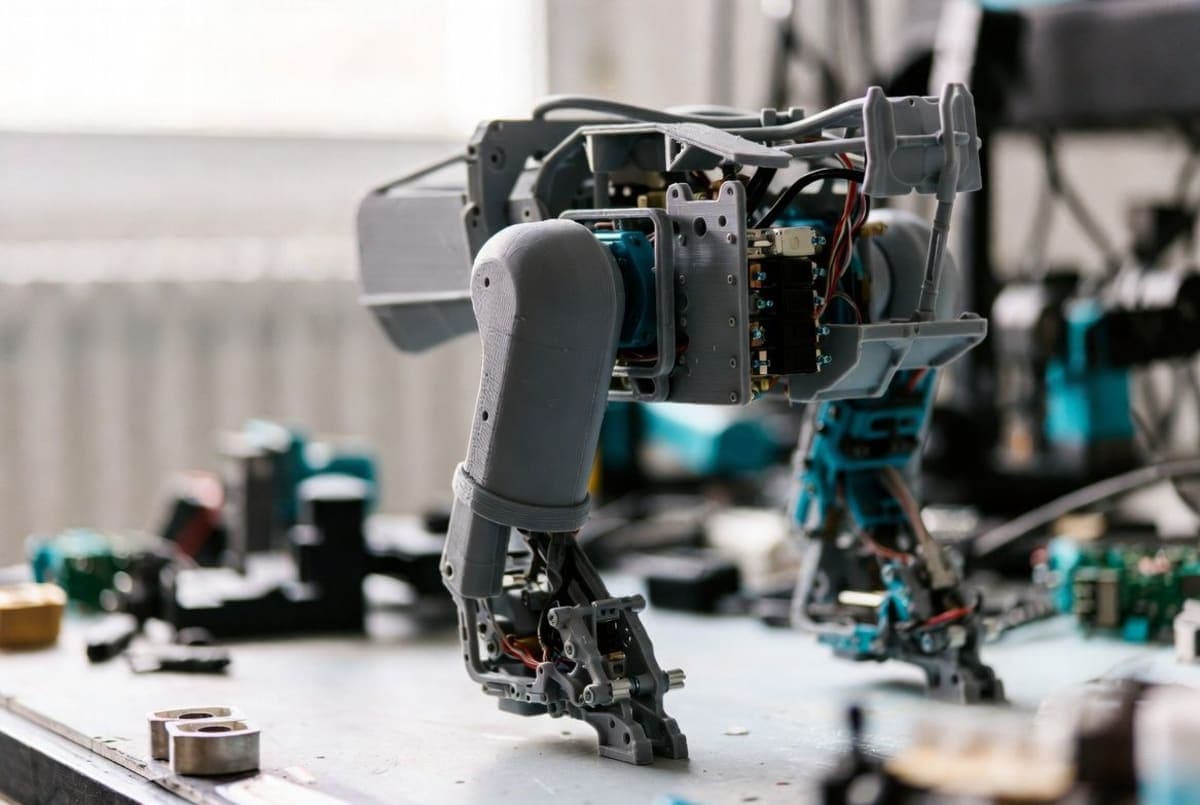

Microsoft Research is entering the robotics foundation model race. The company announced Rho-alpha this week, a vision-language-action model built on Microsoft's Phi family of small language models. It's designed to translate natural language commands into control signals for robots performing two-handed manipulation tasks.

The pitch: Rho-alpha extends the typical VLA design by incorporating tactile sensing, an approach Microsoft calls "VLA+". The company claims this lets robots adjust their grip and movements based on actual touch, not just visual cues. Support for force feedback is reportedly in development. "We aim to make physical systems more easily adaptable, viewing adaptability as a hallmark of intelligence," the announcement states, though no independent benchmarks are available yet.

Synthetic data plays a central role. Microsoft is using NVIDIA Isaac Sim on Azure to generate simulated training trajectories that supplement physical demonstration datasets. The model also learns from human corrections during operation, which operators supply through 3D mouse teleoperation. Current testing involves dual-arm setups and humanoid robots. Interested organizations can apply for the early access program; broader availability through Microsoft Foundry is planned, but no date has been given.

The Bottom Line: Microsoft joins Google, OpenAI, and others racing to build foundation models for robotics, betting that multimodal perception and continuous learning can move robots beyond scripted industrial tasks.

QUICK FACTS

- Based on Microsoft's Phi vision-language model series

- Targets bimanual (two-handed) manipulation tasks

- Adds tactile sensing beyond standard VLA perception

- Force feedback support in development

- Available now through a research early access program, by application

- Technical paper promised "in the coming months"