Microsoft's AI safety team has released a technical framework for identifying AI-generated and manipulated media, evaluating 60 combinations of verification methods and recommending standards for AI companies and social media platforms. The research report, titled "Media Integrity and Authentication: Status, Directions, and Futures," was shared with MIT Technology Review ahead of publication.

The results aren't exactly comforting. Of those 60 combinations, only 20, or one in three, achieve what the report calls "high-confidence authentication." The rest deliver unreliable results or no useful conclusions at all.

Three methods, each broken on its own

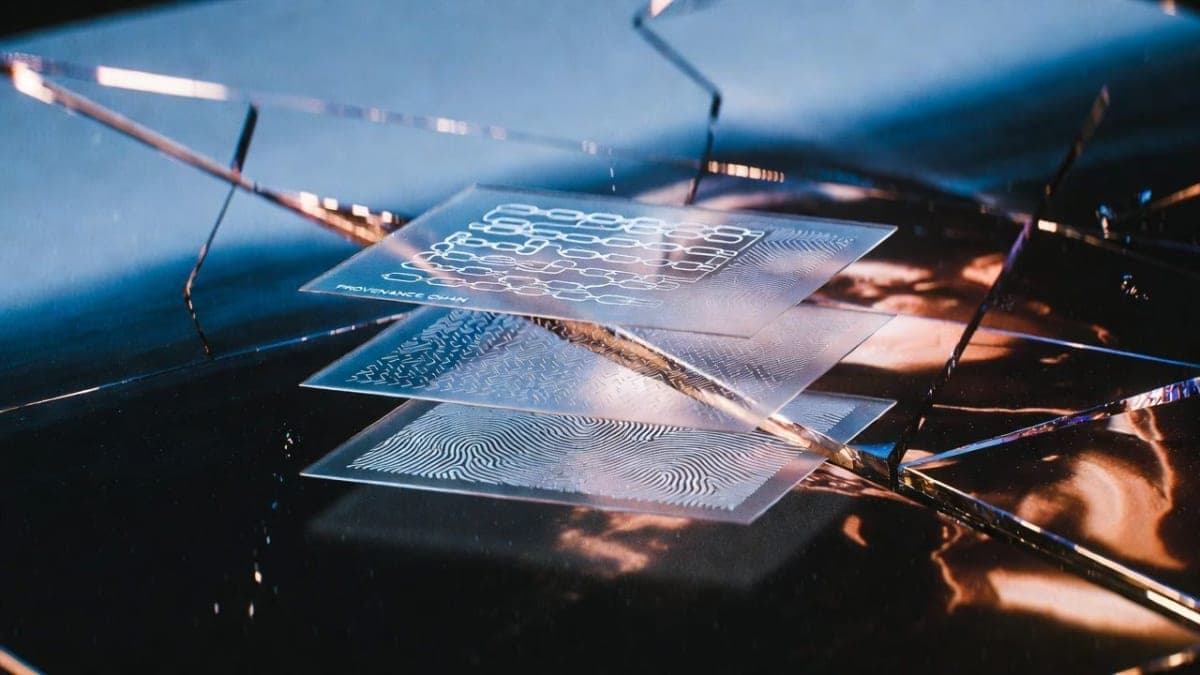

The report, produced under Microsoft's LASER program for long-term AI safety and led by Chief Scientific Officer Eric Horvitz, evaluates three core verification technologies: cryptographically secured provenance metadata (built on the open C2PA standard Microsoft co-founded in 2021), invisible watermarks, and digital fingerprints based on soft-hash techniques.

Think of it like authenticating a Rembrandt, as Horvitz put it. You track the painting's ownership history (provenance), add a mark invisible to the naked eye but readable by machines (watermark), and scan the brushwork to create a mathematical signature (fingerprint). Layered together, they're supposed to help a skeptical viewer verify the original.
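To make the fingerprint idea concrete, here is a minimal sketch of one well-known soft-hash approach, an "average hash," in Python. The report does not say which soft-hash algorithm it evaluated, so treat this as an illustrative stand-in rather than the report's method. The tiny 4x4 grayscale grids and the function names are hypothetical.

```python
def average_hash(pixels):
    """Return a bit pattern: 1 where a pixel is brighter than the image mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a, b):
    """Count the bits that differ between two hashes."""
    return bin(a ^ b).count("1")


# Hypothetical 4x4 grayscale "image": dark on the left, bright on the right.
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [18, 28, 208, 218],
]

# A lightly edited copy (slightly brighter) keeps the same fingerprint.
brightened = [[min(255, v + 5) for v in row] for row in original]

# A mirrored copy flips every bit of the fingerprint.
mirrored = [list(reversed(row)) for row in original]

# Different pixel values, same coarse pattern: the hashes collide.
collision = [[0, 0, 255, 255] for _ in range(4)]

print(hamming_distance(average_hash(original), average_hash(brightened)))  # 0
print(hamming_distance(average_hash(original), average_hash(mirrored)))    # 16
print(hamming_distance(average_hash(original), average_hash(collision)))   # 0
```

A small distance suggests two files are the same picture after minor edits. The last line shows the catch: a hash this coarse can also match content that is not the same file at all.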

None of the three works reliably alone, though. Provenance metadata can be stripped with a screenshot. Watermarks are probabilistic, meaning detectors can both raise false alarms and miss forgeries. Fingerprints suffer from hash collisions and steep storage costs. The report also flags something lawmakers keep missing: even validated provenance data doesn't prove content is true. It only proves the content hasn't been altered since signing.
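The gap between "unaltered" and "true" is easy to see in a toy version of signed provenance. The Python sketch below is a simplification under stated assumptions: it uses a shared-secret HMAC from the standard library, whereas C2PA relies on certificate-based signatures, and the key, function names, and manifest layout are all hypothetical.

```python
import hashlib
import hmac
import json

# Assumption: a shared demo secret stands in for a signer's private key.
SIGNING_KEY = b"hypothetical-demo-key"


def sign_manifest(content: bytes, claims: dict) -> dict:
    """Bind the claims to the exact bytes of the content and sign the result."""
    payload = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "claims": claims,
    }
    serialized = json.dumps(payload, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, serialized, hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def verify_manifest(content: bytes, manifest) -> str:
    """Report what the manifest can and cannot tell us about the content."""
    if manifest is None:
        return "no provenance"
    serialized = json.dumps(manifest["payload"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, serialized, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return "invalid signature"
    if hashlib.sha256(content).hexdigest() != manifest["payload"]["content_sha256"]:
        return "content altered"
    return "unaltered since signing"


image = b"hypothetical image bytes"
# The signer can attach any claim, including a false one.
manifest = sign_manifest(image, {"caption": "The moon is made of cheese"})

print(verify_manifest(image, manifest))                  # unaltered since signing
print(verify_manifest(image + b"!", manifest))           # content altered
# A screenshot produces new bytes and carries no manifest at all.
print(verify_manifest(b"screenshot bytes", None))        # no provenance
```

The first check passes even though the caption is false, because verification only covers the bytes and the claims as signed. The last call mirrors the screenshot problem: once the manifest is gone, there is nothing left to verify.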

The awkward commitment question

"You might call this self-regulation," Horvitz told MIT Technology Review, though he was candid that the work is also about positioning. "We're also trying to be a selected, desired provider to people who want to know what's going on in the world." For a company that runs Copilot, Azure, and LinkedIn and holds a major stake in OpenAI, publishing a guide to AI content verification is, at minimum, a good look.

But when asked whether Microsoft would actually implement its own recommendations across those platforms, Horvitz sidestepped. "Product groups and leaders across the company were involved in this study to inform product road maps and infrastructure, and our engineering teams are taking action on the report's findings," he said in a prepared statement. That's corporate for "no promises."

This matters because Microsoft sits at the center of an enormous AI content pipeline. If the company that wrote the blueprint won't commit to following it, the odds of Meta or X voluntarily signing up seem slim.

What the forensics expert thinks

Hany Farid, a UC Berkeley professor who specializes in digital forensics and wasn't involved in the research, offered a measured endorsement. "I don't think it solves the problem, but I think it takes a nice big chunk out of it," he said. Sophisticated actors, including governments, could still bypass such tools, but the framework would make mass-scale deception harder to pull off.

Farid was less optimistic about adoption. "If the Mark Zuckerbergs and the Elon Musks of the world think that putting 'AI generated' labels on something will reduce engagement, then of course they're incentivized not to do it." An audit by Indicator last year found that only 30% of test posts on Instagram, LinkedIn, Pinterest, TikTok, and YouTube were correctly labeled as AI-generated. Platforms have promised labeling before. The follow-through has been poor.

Regulation looming, enforcement uncertain

California's AI Transparency Act takes effect in August and will require AI providers to embed visible and hidden disclosures in AI-generated content that are "permanent or extraordinarily difficult to remove." The EU AI Act mandates machine-readable AI labeling with penalties up to 3% of global revenue. Similar rules are forming in China, India, and South Korea.

Microsoft's own report warns that some of these requirements are technically impossible to meet right now. Visible watermarks can be removed by amateurs. Invisible ones can be stripped by skilled attackers. Rushing poorly functioning systems to market could undermine public trust in authentication altogether, which is the researchers' deeper worry: that a bad rollout poisons the well for any future verification effort.

Enforcement in the US faces another obstacle. President Trump's executive order from late last year aims to curtail state AI regulations deemed "burdensome" to the industry, and the administration, acting through DOGE, canceled grants related to misinformation research. Meanwhile, MIT Technology Review reported that the Department of Homeland Security itself uses Google and Adobe AI to generate video content it shares publicly.

The report also raises a concern that gets less attention: so-called reversal attacks, where someone takes a real image and modifies a few pixels with AI to make platforms flag it as synthetic. If verification tools are sloppy, authentic content gets discredited. It's a vulnerability that could be weaponized faster than the tools can be fixed.

California's law will be the first major US test. Whether it survives the current administration's posture toward AI regulation is another question entirely.