Microsoft's AI team released what it calls the industry's largest chatbot usage study on December 10, analyzing 37.5 million de-identified Copilot conversations from January through September 2025. The headline finding: health queries dominate mobile usage at every hour of the day, outpacing technology, culture, money, and entertainment combined.

The numbers that should make you pause

Health and fitness paired with information-seeking was the single most common topic-intent combination for mobile Copilot users, holding the top spot across every hour of the nine-month window. That's not a typo. Whether it's 3 AM or 3 PM, people reaching for their phones to talk to Copilot are most often asking about their health.

Microsoft frames this as evidence that Copilot has become "a vital companion for life's big and small moments." The company's researchers wrote that users have "tacitly agreed to weave AI into the fabric of their daily existence."

The study excluded enterprise and educational accounts, meaning this is purely consumer behavior. Microsoft also claims it doesn't analyze raw messages, only extracted "summaries" used to classify topic and intent. How accurate that classification pipeline is remains unclear from the published methodology.

What the study actually measured

The research team at Microsoft AI, led by Bea Costa-Gomes and Seth Spielman, tracked shifts in conversation topics by device type, time of day, and month. A few patterns emerged that Microsoft chose to highlight:

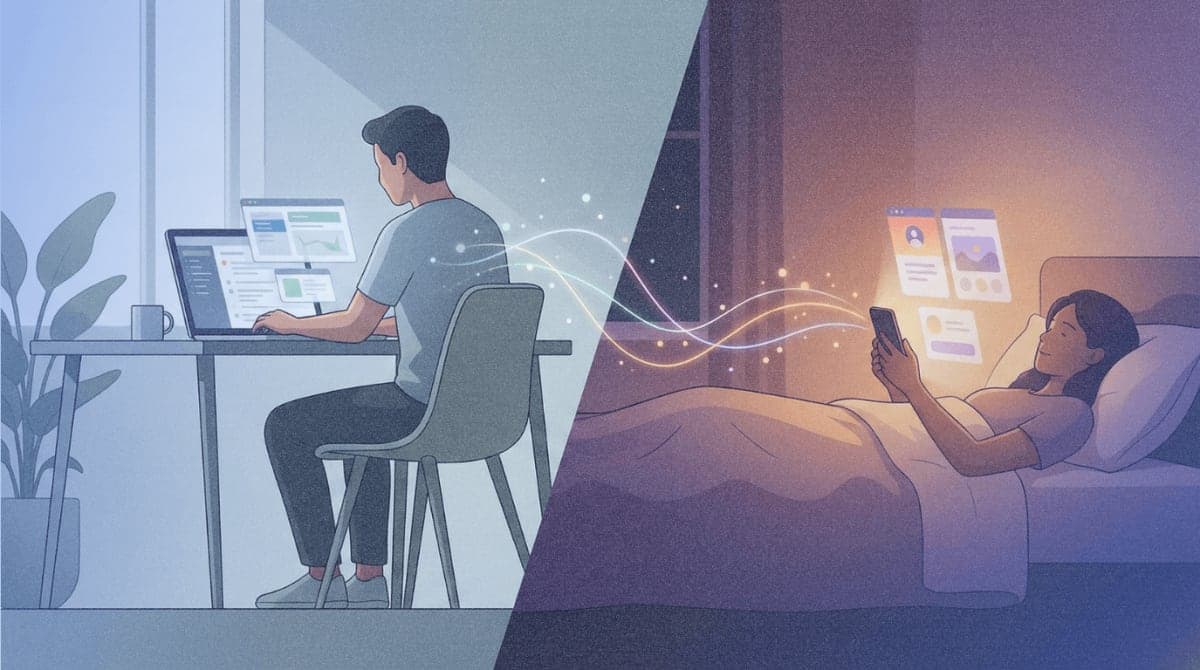

Desktop usage centers on work and technical questions during business hours. Programming queries spike on weekdays; gaming conversations rise on weekends. Philosophical and religious questions increase during late-night hours, peaking around 2 AM.

February brought a surge in relationship conversations: personal growth queries built up in the days before Valentine's Day, and relationship topics peaked on the 14th. Microsoft's blog post characterized this as Copilot helping users "navigate a significant date in their calendar year," which is a generous interpretation of what might simply be lonely people talking to a chatbot on Valentine's Day.

Between January and September, programming conversations declined while activity around culture and history grew, suggesting the user base has broadened beyond early technical adopters.

The health advice problem nobody wants to discuss

Microsoft's celebration of health dominance arrives at an awkward moment. Stanford researchers published findings in June 2025 showing that popular therapy chatbots, when tested with prompts suggesting suicidal ideation, could respond in dangerous ways. One bot, asked about tall bridges in New York City by a simulated user who had just mentioned losing their job, helpfully replied that the Brooklyn Bridge has towers over 85 meters tall.

A November 2025 report from Stanford Medicine's Brainstorm Lab and Common Sense Media tested ChatGPT (GPT-5), Claude, Gemini, and Meta AI on mental health scenarios. After thousands of interactions, researchers concluded these systems don't reliably respond safely to teenagers' mental health questions. The bots tend to act as "fawning listeners," optimizing for engagement rather than appropriate intervention.

The American Psychological Association met with the FTC in February 2025 over concerns that chatbots posing as therapists can endanger users. Two lawsuits against Character.AI involved teenage users who interacted with chatbots claiming to be licensed therapists; in one case, the teenager died by suicide.

Microsoft's report doesn't address any of this. The word "safety" doesn't appear in the published study or accompanying blog post.

Market context Microsoft would rather not mention

The Register noted in its coverage that Copilot trails badly in market share despite these engagement patterns. StatCounter data from July 2025 shows ChatGPT commanding around 80% of the AI chatbot market, with Copilot hovering between 3% and 5%.

Microsoft has positioned Copilot as the competitor that's "seeping into everyday life," but the numbers tell a different story. Even with deep integration across Windows, Office, and Edge, Copilot hasn't dented ChatGPT's dominance. The usage study reads partly as a marketing document, establishing Copilot as a companion that users trust with intimate questions, even if relatively few users are actually choosing it over alternatives.

The claim that this is the largest chatbot usage study to date may be technically accurate while missing the point. Microsoft has access to 37.5 million conversations because Copilot is embedded in products people already use, not necessarily because they actively chose it as their AI companion.

What's actually useful here

Buried in the promotional framing, the study does surface patterns that AI developers should consider. The device-time-topic correlations suggest users have different expectations depending on context. A desktop at 10 AM is for work. A phone at 2 AM is for something else entirely.

Microsoft's researchers recommend that "a desktop agent should optimize for information density and workflow execution, while a mobile agent might prioritize empathy, brevity, and personal guidance." That's reasonable product advice, assuming the underlying system can deliver appropriate guidance rather than dangerous validation.

The Valentine's Day findings and late-night philosophy sessions point toward something the AI industry has been reluctant to acknowledge directly: people are using these tools for emotional support, whether or not they're designed for it. Helen Toner, a former OpenAI board member, told Axios in October that AI companies originally avoided positioning chatbots as companions "because they know that can be so dicey."

Microsoft, with this report, appears to be leaning into exactly that positioning.