Chinese tech giant Meituan released LongCat-Image in early December, a 6 billion parameter image generation model that the company says outperforms many open-source competitors several times its size. The technical report dropped December 8, with weights available on Hugging Face and GitHub.

The model's main pitch: better data hygiene over brute-force scaling. Meituan's team filtered out AI-generated images during training and used a four-stage data pipeline spanning pre-training, mid-training, SFT, and reinforcement learning. A separate detection model penalizes the generator whenever it produces recognizable AI artifacts, pushing outputs toward textures realistic enough to fool the detector.

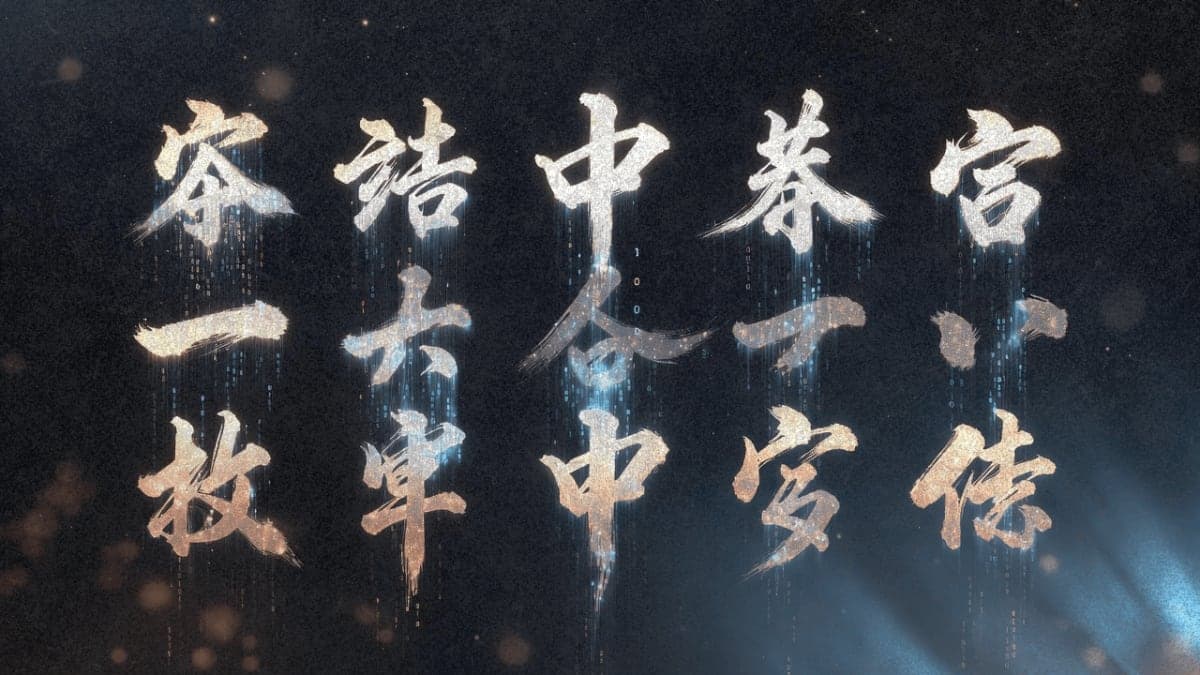

Text rendering is the headline feature. Most image models botch spelling because they treat words as abstract tokens. LongCat-Image uses a hybrid approach: Qwen2.5-VL-7B handles overall prompt comprehension, but text in quotation marks triggers a character-level tokenizer that builds words letter by letter. The result, according to Meituan's benchmarks, is 90.7% accuracy on their ChineseWord test and coverage of all 8,105 standard Chinese characters.

On T2I-CoreBench, which evaluates composition and reasoning, LongCat-Image ranked second among open-source models as of December 9, trailing only the 32B-parameter Flux2.dev. The editing variant, LongCat-Image-Edit, claims open-source SOTA on GEdit-Bench with scores of 7.60 (Chinese) and 7.64 (English). These are self-reported numbers.

Meituan is releasing everything: model weights, mid-training checkpoints, and the full training toolchain under Apache 2.0. The stated goal is building an open ecosystem rather than competing on API revenue.

The Bottom Line: LongCat-Image runs on roughly 17GB VRAM, making it accessible to researchers without enterprise hardware, though independent benchmark verification is still pending.

QUICK FACTS

- Model size: 6B parameters (vs. 20B+ for typical competitors)

- Chinese text accuracy: 90.7% on ChineseWord benchmark (company-reported)

- T2I-CoreBench rank: 2nd among open-source models as of December 9, 2025

- VRAM requirement: ~17GB with CPU offload

- License: Apache 2.0

- Release date: December 5, 2025 (weights); December 8, 2025 (technical report)