Higgsfield AI has rolled out Cinema Studio, a production environment that treats AI video generation more like a film set than a text prompt box. The San Francisco-based company, which raised $50 million in Series A funding earlier this year, is pitching it as a tool for creators who want repeatable, predictable results rather than generation roulette.

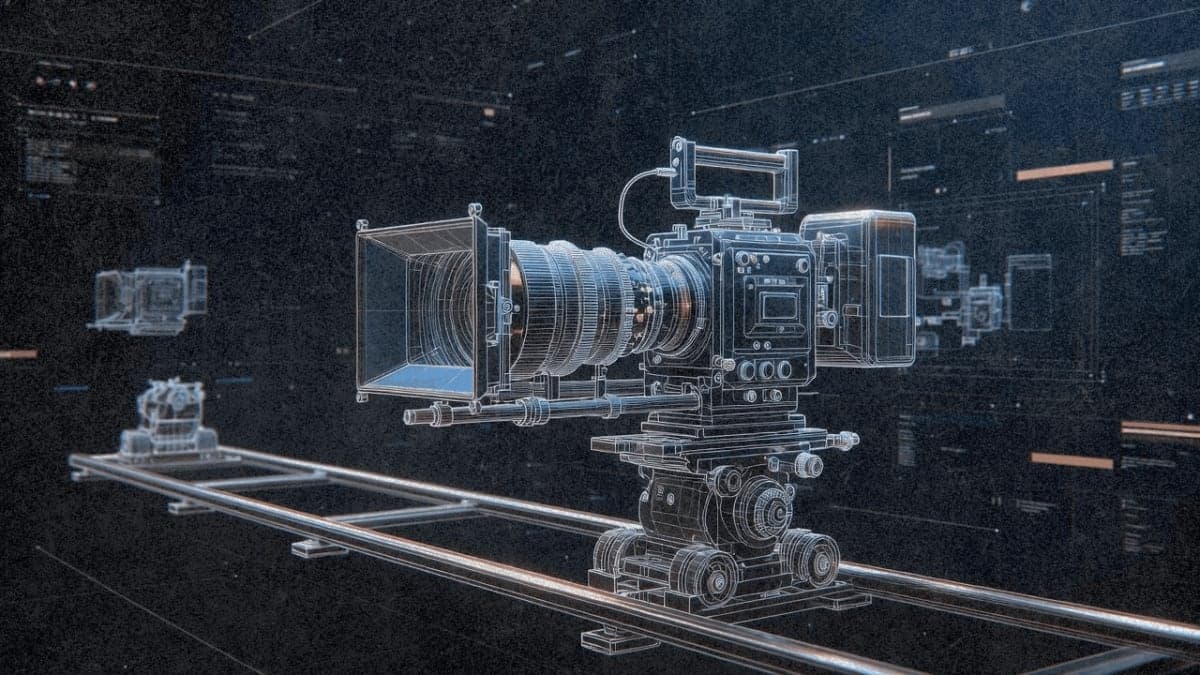

The core feature is what Higgsfield calls "optical physics" simulation. Users select virtual camera bodies that emulate sensors from manufacturers like ARRI and RED, then pair them with lens types including anamorphic and macro glass. The company claims this produces consistent visual characteristics across generations, though independent testing hasn't verified that claim. Output defaults to a 21:9 CinemaScope-style aspect ratio, with upscaling available to 4K and 8K.

Camera motion gets stacked in layers. The system allows up to three simultaneous movements per shot, and users can set start and end keyframes to control how clips animate. A "Reference Anchor" feature locks character appearance across shots by using an approved still image as the generation seed.
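
To make the layering rule concrete, here is a minimal sketch of how a shot with stacked camera moves might be modeled. Higgsfield has not published a schema for this, so every name below (`Shot`, `add_movement`, the movement labels, the file names) is a hypothetical illustration. Only the three-movement cap, the start and end keyframes, and the reference still come from the description above.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Cap on simultaneous camera movements per shot, as reported for Cinema Studio.
MAX_MOVEMENTS = 3


@dataclass
class Shot:
    """Hypothetical model of one shot; not Higgsfield's actual data format."""
    description: str
    start_keyframe: Optional[str] = None    # image the clip animates from
    end_keyframe: Optional[str] = None      # image the clip animates to
    reference_anchor: Optional[str] = None  # approved still that locks character look
    movements: List[str] = field(default_factory=list)

    def add_movement(self, name: str) -> None:
        """Stack one more camera movement, refusing to exceed the cap."""
        if len(self.movements) >= MAX_MOVEMENTS:
            raise ValueError(
                f"a shot allows at most {MAX_MOVEMENTS} simultaneous movements"
            )
        self.movements.append(name)


# Example: three stacked moves on a single shot (labels are made up).
shot = Shot(
    description="Detective enters a rain-soaked alley",
    start_keyframe="alley_wide.png",
    end_keyframe="alley_close.png",
    reference_anchor="detective_hero_frame.png",
)
for move in ("dolly_in", "pan_left", "tilt_up"):
    shot.add_movement(move)
```

Adding a fourth movement to `shot` would raise a `ValueError` in this sketch, which mirrors the reported limit rather than any documented product behavior.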

The workflow mirrors traditional pre-production: write a scene description, configure virtual gear, generate preview stills, select a hero frame, then animate. Higgsfield positions this as a move away from what its website calls "random interpretation of prompts." Whether that determinism holds in practice remains to be seen. The feature is live now at higgsfield.ai/cinema-studio.

The Bottom Line: Higgsfield is betting that filmmakers want camera menus, not just text boxes, and Cinema Studio is its answer.

QUICK FACTS

- Camera emulation includes ARRI Alexa 35, RED, and Panavision sensor profiles (company-reported)

- Default output: 21:9 aspect ratio with upscaling to 4K/8K

- Up to 3 simultaneous camera movements per shot

- 8-step workflow from script to final video

- Reference Anchor system for character consistency across shots