A mathematician gave an unreleased AI model a problem he'd been grinding on with his graduate student. Five minutes later, it spit out an explicit formula that improved on his own published result. Not marginally, either: the new bound beats his by a factor on the order of the square root of a logarithm, which grows without bound as the sets involved shrink.

Paata Ivanisvili, a professor at UC Irvine who specializes in harmonic analysis and probability, shared the result on X yesterday. He'd been given early access to Grok 4.20, xAI's internal beta, which Elon Musk has said on X is coming in a few weeks. The problem involves something called Bellman functions, which, okay, requires some explaining.

What the AI actually found

The technical setup: Ivanisvili and his student Natanael Alpay had been working on lower bounds for dyadic square functions applied to indicator functions of sets. In their February paper, they proved a bound involving the Gaussian isoperimetric profile I(p) = φ(Φ⁻¹(p)). Here φ and Φ are the standard normal density and distribution function, and p plays the role of the set's measure. As p approaches zero, I(p) behaves like p√(2 log(1/p)), that is, like p√(log(1/p)) up to a constant.
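The asymptotics of I(p) are easy to check numerically. The Python sketch below uses the standard definition I(p) = φ(Φ⁻¹(p)) and the standard library's `statistics.NormalDist`. The helper name `gaussian_isoperimetric_profile` is ours, and nothing here comes from the paper.

```python
import math
from statistics import NormalDist

STANDARD_NORMAL = NormalDist()  # mean 0, standard deviation 1


def gaussian_isoperimetric_profile(p: float) -> float:
    """I(p) = phi(Phi^{-1}(p)) for 0 < p < 1 (hypothetical helper name)."""
    return STANDARD_NORMAL.pdf(STANDARD_NORMAL.inv_cdf(p))


# Compare I(p) with its leading-order asymptotic p * sqrt(2 * log(1/p)).
for p in (1e-2, 1e-3, 1e-6, 1e-10):
    ratio = gaussian_isoperimetric_profile(p) / (p * math.sqrt(2 * math.log(1 / p)))
    print(f"p = {p:.0e}: I(p) / (p*sqrt(2*log(1/p))) = {ratio:.3f}")
```

The printed ratio stays below 1 and climbs toward it only slowly, because Φ⁻¹(p) carries lower-order log log(1/p) corrections.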

Grok 4.20 produced something different. It gave an explicit formula: U(p,q) = E√(q² + τ), where τ is the time a Brownian motion started at p takes to exit the interval (0,1). In the boundary case q = 0, this reduces to U(p,0) = E√τ, which behaves like p log(1/p) as p approaches zero.
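Readers who want to see the p log(1/p) behavior numerically can use the rough Python sketch below. It assumes τ is the exit time of a *standard* Brownian motion (variance t at time t), which the thread summary doesn't spell out. Under that assumption, P(τ > t) has a sine-series expansion on (0,1). Integrating E√τ = ∫₀^∞ P(τ > s²) ds term by term then gives E√τ = (4/(π√(2π))) Σ over odd n of sin(nπp)/n². This is our own derivation for illustration, not code or a formula from Ivanisvili or xAI. The helper name `expected_sqrt_exit_time` is hypothetical.

```python
import math


def expected_sqrt_exit_time(p: float, n_terms: int = 100_000) -> float:
    """E[sqrt(tau)], tau = exit time from (0, 1) of standard Brownian motion started at p.

    Assumption: standard Brownian motion, so that
        P(tau > t) = sum over odd n of 4/(n*pi) * sin(n*pi*p) * exp(-n^2 * pi^2 * t / 2).
    Integrating E[sqrt(tau)] = int_0^inf P(tau > s^2) ds term by term gives
        E[sqrt(tau)] = 4 / (pi * sqrt(2*pi)) * sum over odd n of sin(n*pi*p) / n^2.
    """
    total = 0.0
    for k in range(n_terms):
        n = 2 * k + 1  # only odd sine modes appear in the expansion of the constant 1
        total += math.sin(n * math.pi * p) / (n * n)
    return 4.0 / (math.pi * math.sqrt(2.0 * math.pi)) * total


for p in (1e-2, 1e-3, 1e-4):
    u = expected_sqrt_exit_time(p)  # U(p, 0) under the standard-BM assumption
    print(
        f"p = {p:.0e}: U/(p*log(1/p)) = {u / (p * math.log(1 / p)):.3f}, "
        f"U/(p*sqrt(log(1/p))) = {u / (p * math.sqrt(math.log(1 / p))):.3f}"
    )
```

The first ratio drifts slowly toward a constant (heuristically √(2/π) ≈ 0.80). The second keeps growing like √(log(1/p)), which is the extra factor over the earlier √log-type bound.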

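So the logarithmic factor improves from √(log(1/p)) to log(1/p). The new bound is larger than the old one by a factor of √(log(1/p)), which grows without bound as p shrinks. The bound is also sharp, meaning its order of growth can't be improved.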

The classical lower bound comes from Burkholder, Davis, and Gundy's work on martingale inequalities: |A|(1-|A|), where |A| is the measure of the set A. Ivanisvili and Alpay improved that to |A|(1-|A|)√(log(1/(|A|(1-|A|)))). Grok pushed it further, to the sharp order |A|(1-|A|)log(1/(|A|(1-|A|))).
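All three bounds hold only up to constants, so only their shapes can be compared. The short Python sketch below sets every constant to 1, an assumption made purely for illustration. It shows how much each step gains as |A| shrinks. The function name `bound_shapes` is hypothetical.

```python
import math


def bound_shapes(measure: float) -> tuple[float, float, float]:
    """Shapes of the three lower bounds for a set A with |A| = measure.

    All multiplicative constants are set to 1 (illustration-only assumption).
    """
    x = measure * (1 - measure)  # |A|(1-|A|)
    log_term = math.log(1 / x)
    classical = x  # Burkholder-Davis-Gundy shape
    ivanisvili_alpay = x * math.sqrt(log_term)
    grok = x * log_term
    return classical, ivanisvili_alpay, grok


for measure in (0.1, 1e-3, 1e-6, 1e-9):
    classical, ia, grok = bound_shapes(measure)
    print(f"|A| = {measure:.0e}: IA/classical = {ia / classical:.2f}, Grok/IA = {grok / ia:.2f}")
```

The two printed columns agree. Each step gains the same factor √(log(1/(|A|(1-|A|)))), so Grok's bound sits a full logarithm above the classical one.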

Why Bellman functions are useful here

Bellman functions come from stochastic optimal control theory. You start with an optimization problem over an infinite-dimensional space of possible functions, and Bellman's trick reduces it to solving a nonlinear PDE in just a few variables. Alexander Volberg at Michigan State, who was Ivanisvili's PhD advisor, literally co-wrote the book on this for harmonic analysis applications, with Vasily Vasyunin.

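The connection to probability is that a function's averages over finer and finer dyadic intervals form a martingale, so questions about functions become questions about martingales. The Bellman function then records the extremal value of the quantity being estimated as a function of a few simple parameters, and its shape points toward the extremizers. The method has been especially productive for estimates of singular integrals, because probabilistic structures lurk underneath many classical operators.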

Finding the right Bellman function for a given problem is hard. You're essentially guessing the solution to a nonlinear PDE that captures the extremal behavior. The fact that Grok produced an explicit formula involving Brownian motion exit times suggests it found a natural probabilistic interpretation that wasn't obvious from the analytic formulation.

The Takagi connection is strange

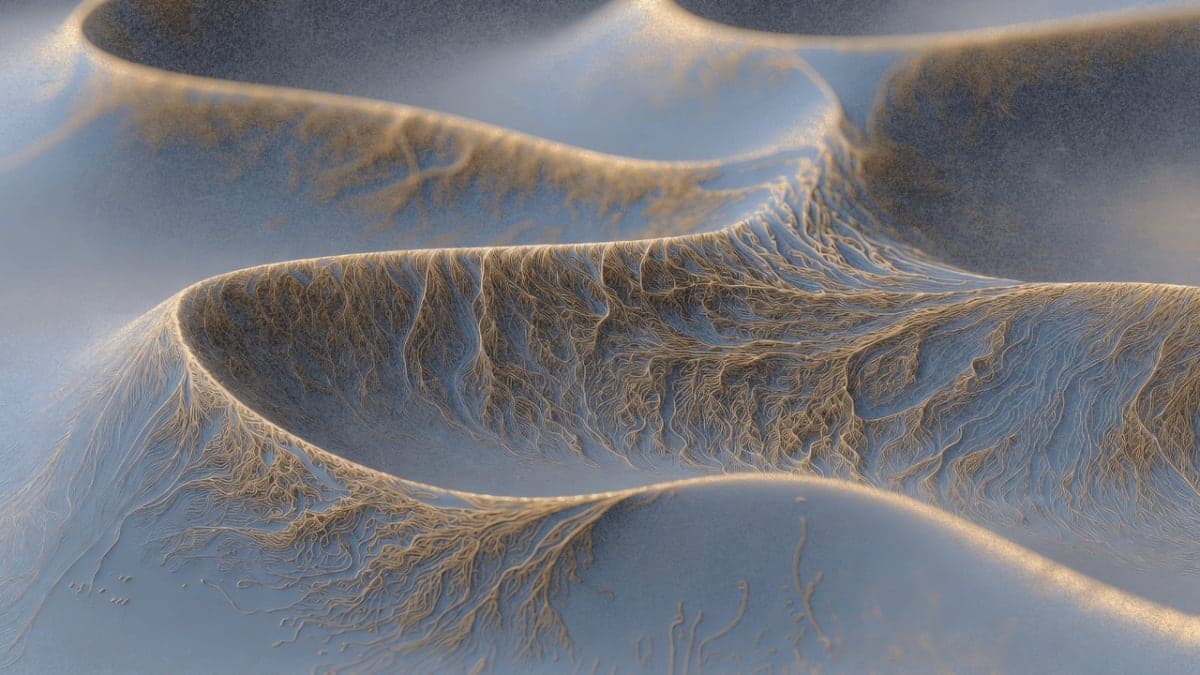

Ivanisvili had previously posted about how the sharp lower bound for a related problem, the S₁ square function, turns out to coincide with the Takagi function. This is a fractal curve from 1903, continuous everywhere but differentiable nowhere. Its graph looks like a jagged mountain range.
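For readers who haven't met it, the Takagi function is T(x) = Σ_{n≥0} dist(2ⁿx, ℤ)/2ⁿ, where dist(y, ℤ) is the distance from y to the nearest integer. Below is a minimal Python sketch that truncates the sum at 53 terms. That cutoff is our choice, matching double precision.

```python
def takagi(x: float, terms: int = 53) -> float:
    """Truncated Takagi function: sum over n < terms of dist(2^n * x, Z) / 2^n."""
    total = 0.0
    scale = 1.0  # 2^n as a float; multiplying by a power of two is exact
    for _ in range(terms):
        y = x * scale
        total += abs(y - round(y)) / scale  # distance from y to the nearest integer
        scale *= 2.0
    return total


print(takagi(0.25), takagi(0.5), takagi(1 / 3))
```

Its maximum value, 2/3, is attained at x = 1/3 (among other points), and T(1 - x) = T(x).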

What surprised him was learning that the Takagi function has a connection to the Riemann hypothesis. There's a 2000 result by Kanemitsu and Yoshimoto showing that the Riemann hypothesis is equivalent to a statement about how the Takagi function behaves on Farey fractions.

The new Grok result gives a different function, smooth rather than fractal, in the same family of isoperimetric-type profiles. The Gaussian isoperimetric profile it's related to has its own deep story in probability and geometry.

Does it matter?

Ivanisvili is appropriately modest. The result won't change anything tomorrow. It's a step toward understanding how small the quadratic variation of Boolean functions can be, specifically when you test square functions against indicator functions of sets.

The dyadic square function isn't bounded on L¹; that's known. The question here was more about precision: how exactly does the blowup behave on the simplest possible test cases, indicator functions of sets?
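The "simplest test case" can be made concrete with the Python sketch below. It assumes the usual dyadic square function S f = (Σ_{n≥1} (f_n − f_{n−1})²)^{1/2}, where f_n is the average of f over the level-n dyadic interval containing x. The exact normalization in Ivanisvili and Alpay's paper may differ. The sketch computes ∫ S(1_A) exactly for the dyadic interval A = [0, 2⁻ᵏ). It then compares the result with x log(1/x) and with the earlier shape x√(log(1/x)), where x = |A|(1-|A|). The function names are hypothetical.

```python
import math


def dyadic_square_function_l1(indicator: list[int]) -> float:
    """Integral over [0, 1) of S(1_A), with 1_A given by 0/1 values on 2**N equal cells.

    Assumed definition (normalizations vary):
        S f(x)^2 = sum_{n=1}^{N} (f_n(x) - f_{n-1}(x))^2,
    where f_n(x) is the average of f over the level-n dyadic interval containing x.
    """
    size = len(indicator)
    levels = size.bit_length() - 1
    if size != 1 << levels:
        raise ValueError("number of cells must be a power of two")
    squares = [0.0] * size
    prev = [sum(indicator) / size] * size  # f_0 is the global average |A|
    for n in range(1, levels + 1):
        block = size >> n  # fine cells per level-n dyadic interval
        cur: list[float] = []
        for start in range(0, size, block):
            avg = sum(indicator[start:start + block]) / block
            cur.extend([avg] * block)
        for i in range(size):
            squares[i] += (cur[i] - prev[i]) ** 2  # squared martingale difference
        prev = cur
    return sum(math.sqrt(s) for s in squares) / size


def interval_indicator(k: int, levels: int) -> list[int]:
    """0/1 values of 1_A for the dyadic interval A = [0, 2**-k) on 2**levels cells."""
    size = 1 << levels
    return [1 if i < size >> k else 0 for i in range(size)]


LEVELS = 12  # grid resolution; results are exact for any LEVELS >= k

for k in (2, 4, 8, 12):
    measure = 2.0 ** -k
    x = measure * (1 - measure)
    s_l1 = dyadic_square_function_l1(interval_indicator(k, LEVELS))
    print(
        f"|A| = 2^-{k}: ratio to x*log(1/x) = {s_l1 / (x * math.log(1 / x)):.3f}, "
        f"ratio to x*sqrt(log(1/x)) = {s_l1 / (x * math.sqrt(math.log(1 / x))):.3f}"
    )
```

For these intervals the first ratio stays bounded while the second keeps growing, which is consistent with log(1/x) being the right order. This illustrates one family of sets; it does not prove either bound.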

But the meta-point is more interesting. A mathematician handed an AI a problem from his own research area, and the AI produced a result that improves on published work. In five minutes. That's not supposed to happen yet.

Is the result verified?

This is where I'd normally be skeptical. AI models routinely produce math that looks right but is subtly wrong. But Ivanisvili is a serious researcher, a recent Simons Fellow who works exactly in this area of Bellman functions and harmonic analysis. He's not posting this casually.

Whether the result has been fully verified, with all edge cases checked and a complete proof written out, isn't clear from the thread. The formula itself has a clean probabilistic interpretation, which is a good sign. Exit times of Brownian motion are well-studied objects with known distributions.

I couldn't independently check the asymptotics. Someone with more expertise in martingale theory should weigh in.

Grok 4.20 context

The model isn't publicly available. Grok 4.1 launched in November 2025 and currently sits at 1483 Elo on LMArena's thinking mode leaderboard. Grok 4.20 was reportedly tested in the "Alpha Arena" trading competition in late 2025, where it won with 12.11% aggregate returns against other AI models.

Musk said Grok 4.20 is 3-4 weeks out. Grok 5, rumored to have 6 trillion parameters, is supposedly slated for Q1 2026.

The timing of Ivanisvili's post, right as xAI is building hype for the next release, is probably not coincidental. That doesn't make the mathematical result less interesting.