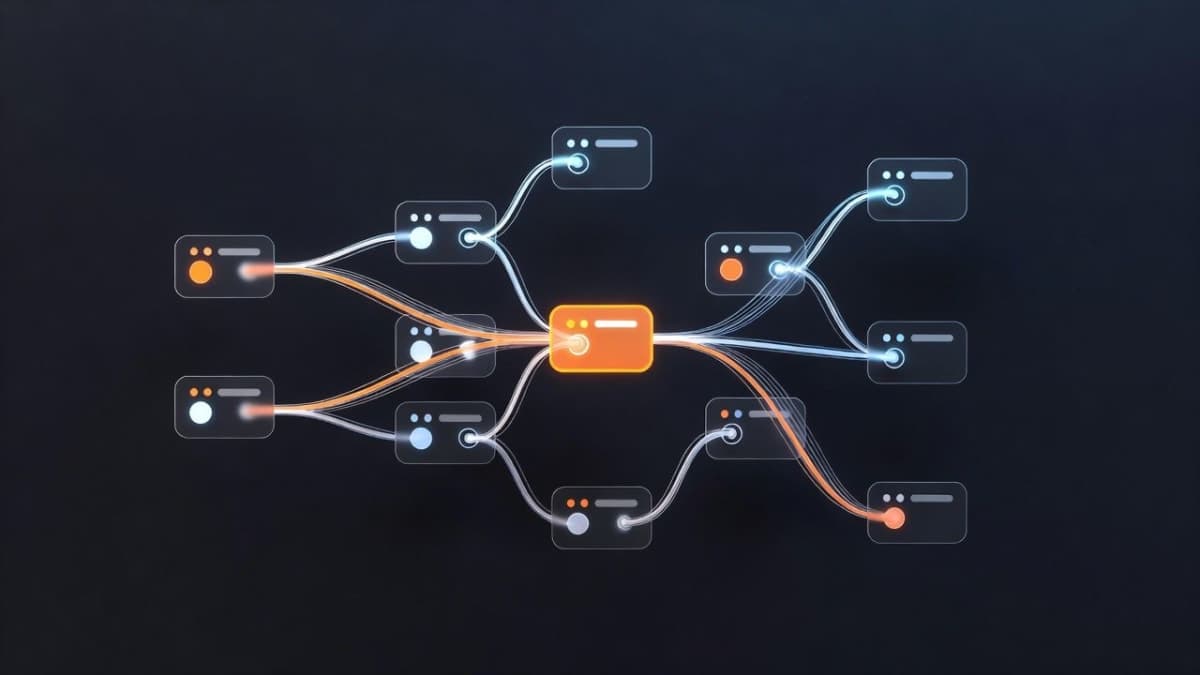

The Gradio team at Hugging Face released Daggr, an open-source Python library for chaining ML models, Gradio apps, and custom functions into visual workflows. You write the logic in Python, and Daggr builds the graph.

The pitch is hot debugging. When step 7 of a 10-step pipeline breaks, you can inspect that node's output, tweak inputs, and rerun just that piece without restarting from scratch. The team positions this as a middle ground between fragile scripts and heavyweight orchestration platforms like Airflow. "Daggr is built for interactive AI/ML workflows with real-time visual feedback," the blog post explains, "making it ideal for prototyping, demos, and workflows where you want to inspect intermediate outputs."
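To make the idea concrete, here is a minimal, library-agnostic sketch of rerunning a single step from cached upstream output. It does not use Daggr's API: the `Pipeline` class, its `rerun_from` and `replace` methods, and the step names are all hypothetical, invented for illustration only.

```python
from typing import Any, Callable

Step = tuple[str, Callable[[Any], Any]]


class Pipeline:
    """Toy linear pipeline that caches every step's output (not Daggr's API)."""

    def __init__(self, steps: list[Step]) -> None:
        self.steps = list(steps)
        self.outputs: dict[str, Any] = {}  # last output of each step
        self.calls = {name: 0 for name, _ in self.steps}  # run counter per step

    def _names(self) -> list[str]:
        return [name for name, _ in self.steps]

    def _execute(self, value: Any, first: int) -> Any:
        # Run steps from index `first` onward, caching each result.
        for name, fn in self.steps[first:]:
            value = fn(value)
            self.outputs[name] = value
            self.calls[name] += 1
        return value

    def run(self, value: Any) -> Any:
        """Run the whole pipeline from the first step."""
        return self._execute(value, 0)

    def replace(self, name: str, fn: Callable[[Any], Any]) -> None:
        """Swap in a fixed implementation for one step."""
        self.steps[self._names().index(name)] = (name, fn)

    def rerun_from(self, name: str) -> Any:
        """Rerun `name` and everything after it, reusing the cached upstream output."""
        first = self._names().index(name)
        if first == 0:
            raise ValueError("the first step has no cached input; call run() instead")
        return self._execute(self.outputs[self._names()[first - 1]], first)


pipeline = Pipeline([
    ("clean", str.strip),
    ("shout", str.upper),
    ("punctuate", lambda text: text + "?"),  # deliberate bug in the last step
])
first_result = pipeline.run("  hello  ")

# Fix only the broken step, then rerun it without touching the earlier steps.
pipeline.replace("punctuate", lambda text: text + "!")
fixed_result = pipeline.rerun_from("punctuate")
```

After the fix, `clean` and `shout` have each run once while `punctuate` has run twice, which is the saving the debugging pitch describes. In a real pipeline the skipped steps would be expensive model calls rather than string operations.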

The library supports three node types: GradioNode for calling Spaces APIs (with optional local cloning), FnNode for custom Python functions, and InferenceNode for Hugging Face Inference Providers. State persists across sessions. Workflows deploy to Hugging Face Spaces by adding daggr to requirements.txt.

ComfyUI comparisons are inevitable, and the team addresses them directly. Where ComfyUI is a visual editor for dragging and connecting nodes, Daggr takes a code-first approach. The visual canvas is generated automatically from Python, which means version control and standard dev tools work normally.

The project is in beta. APIs may change between versions and data loss is possible during updates.

The Bottom Line: Daggr offers a code-first alternative to visual workflow editors, with built-in debugging that lets you fix a broken pipeline step without rerunning everything.

QUICK FACTS

- Released: January 29, 2026
- License: MIT
- Requires: Python 3.10+
- Current version: 0.4.3 (per blog examples)
- Status: Beta (APIs may change)