Google shipped T5Gemma 2 on December 18, 2025, upgrading its encoder-decoder model family with image understanding and context windows of up to 128K tokens. The models build on Gemma 3 rather than Gemma 2, which powered the original T5Gemma release earlier this year.

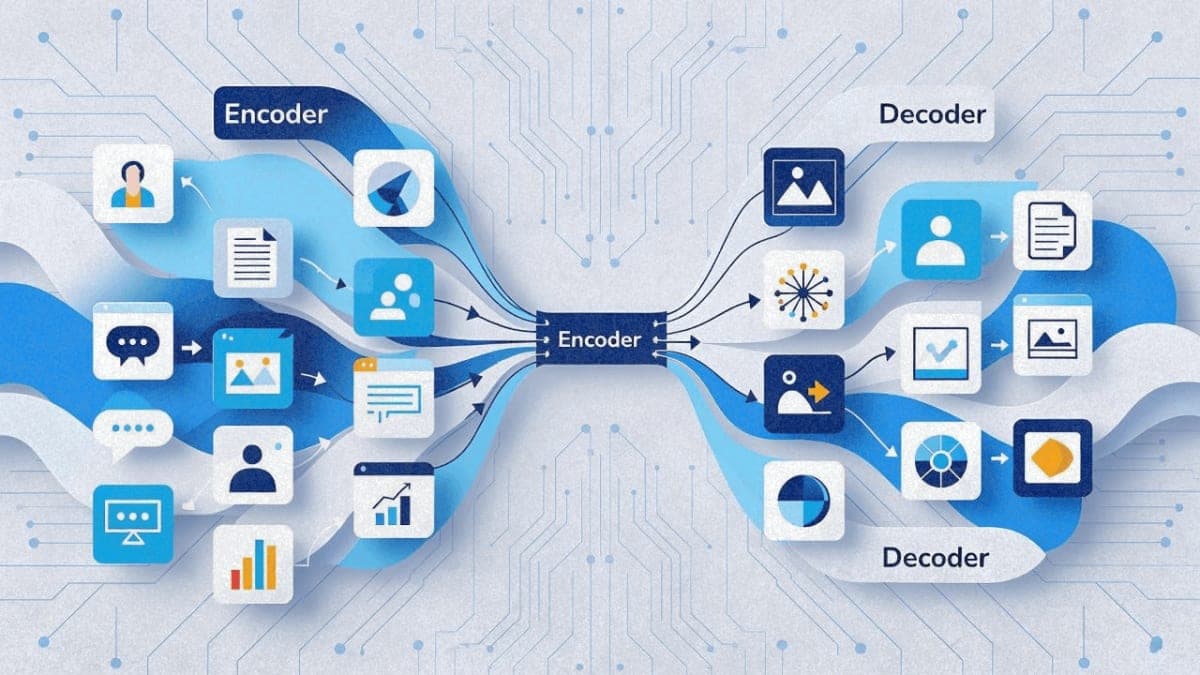

The engineering changes target memory efficiency. Google tied word embeddings between the encoder and decoder, and merged the decoder's previously separate self-attention and cross-attention mechanisms into a single attention layer. Three sizes are available: 270M-270M (roughly 370M parameters total), 1B-1B (about 1.7B), and 4B-4B (around 7B). Those totals exclude the vision encoder, which adds parameters on top.
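A rough way to see why tied embeddings matter at the small end of the range is to count parameters with and without a shared table. The sketch below is illustrative arithmetic only: the vocabulary size, hidden width, and per-stack block counts are assumptions chosen to resemble a 270M-class model, not figures from Google's announcement, and the helper names are hypothetical.

```python
# Illustrative arithmetic: how sharing one embedding table between the
# encoder and decoder changes the total parameter count.
# All three constants are ASSUMPTIONS for illustration, not published figures.
VOCAB_SIZE = 262_144         # assumed vocabulary size
HIDDEN_SIZE = 640            # assumed embedding width
BLOCK_PARAMS = 100_000_000   # assumed non-embedding parameters per stack


def embedding_params(vocab_size: int, hidden_size: int) -> int:
    """Parameters in a single token-embedding table."""
    return vocab_size * hidden_size


def total_params(embed: int, encoder_blocks: int, decoder_blocks: int, tied: bool) -> int:
    """Total parameters with one shared table (tied) or one table per stack."""
    tables = 1 if tied else 2
    return tables * embed + encoder_blocks + decoder_blocks


embed = embedding_params(VOCAB_SIZE, HIDDEN_SIZE)
tied_total = total_params(embed, BLOCK_PARAMS, BLOCK_PARAMS, tied=True)
untied_total = total_params(embed, BLOCK_PARAMS, BLOCK_PARAMS, tied=False)

print(f"one embedding table: {embed / 1e6:.0f}M")         # 168M
print(f"tied total:          {tied_total / 1e6:.0f}M")    # 368M
print(f"untied total:        {untied_total / 1e6:.0f}M")  # 536M
```

Under these assumed numbers, the tied total lands close to the roughly 370M quoted for the 270M-270M pair, while separate tables would push it past 530M. The saving shrinks in relative terms for larger models, where transformer blocks dominate the count.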

On benchmarks, Google claims T5Gemma 2 beats base Gemma 3 on multimodal tasks, long-context processing, and coding. These are internal evaluations, not independent tests. The company attributes the long-context gains to separating input processing into a dedicated encoder.

The pretrained checkpoints are available now on Hugging Face, Kaggle, and Vertex AI. Google is not releasing instruction-tuned versions, so developers will need to fine-tune the models for specific applications.

The Bottom Line: T5Gemma 2 brings vision and 128K context to the encoder-decoder architecture, but it remains a research release that requires post-training before deployment.

QUICK FACTS

- Model sizes: 270M-270M, 1B-1B, 4B-4B (encoder-decoder pairs)
- Context window: up to 128K tokens
- Language support: 140+ languages (company-reported)
- Availability: Hugging Face, Kaggle, Vertex AI, Colab
- Release date: December 18, 2025
- Status: Pretrained checkpoints only, no instruction-tuned versions