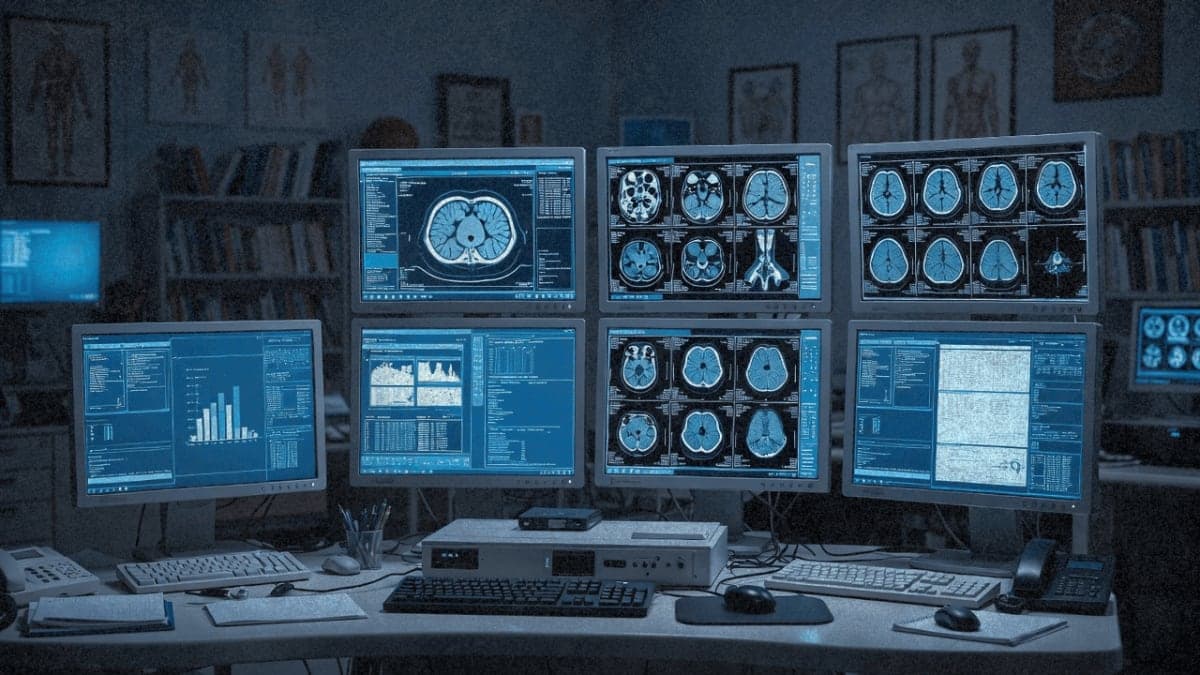

Google Research dropped MedGemma 1.5 on January 13th, and the headline claim is this: it's the first open multimodal model that can interpret 3D medical imaging. CT scans, MRIs, whole-slide histopathology. Feed it multiple slices and it'll tell you what it sees.

That's new. The original MedGemma, released at Google I/O back in May 2025, could handle 2D stuff fine. Chest X-rays, dermatology photos, fundus images. But a full CT volume? No.

So how much better is it, actually?

Google's internal benchmarks show an absolute accuracy improvement of 3 percentage points on CT disease classification (61% vs 58%) and 14 points on MRI findings (65% vs 51%). Not huge, but the baseline wasn't zero. On histopathology, the jump is more dramatic: ROUGE-L scores went from 0.02 to 0.49, matching a task-specific model called PolyPath.

The chest X-ray improvements are easier to parse. Anatomical localization improved by 35% intersection-over-union on the Chest ImaGenome benchmark. Longitudinal imaging, meaning comparing current scans to historical ones, improved 5% on MS-CXR-T.
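For anyone rusty on the metric: intersection-over-union scores a predicted region against the ground-truth region as overlap area divided by combined area, so 1.0 is a perfect match and 0.0 is a complete miss. Here's a minimal Python sketch for axis-aligned bounding boxes. The `Box` type and the coordinates are made up for illustration, and this is not the Chest ImaGenome evaluation code.

```python
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned bounding box in pixel coordinates (hypothetical type)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def area(box: Box) -> float:
    """Area of a box; degenerate boxes count as zero."""
    return max(0.0, box.x_max - box.x_min) * max(0.0, box.y_max - box.y_min)


def iou(pred: Box, truth: Box) -> float:
    """Intersection-over-union of two boxes, in the range [0, 1]."""
    # Width and height of the overlap rectangle (zero if the boxes are disjoint).
    overlap_w = max(0.0, min(pred.x_max, truth.x_max) - max(pred.x_min, truth.x_min))
    overlap_h = max(0.0, min(pred.y_max, truth.y_max) - max(pred.y_min, truth.y_min))
    intersection = overlap_w * overlap_h
    union = area(pred) + area(truth) - intersection
    return intersection / union if union > 0 else 0.0


# Illustrative boxes only: a prediction shifted diagonally off the true region.
predicted = Box(1, 1, 3, 3)
ground_truth = Box(0, 0, 2, 2)
print(f"IoU = {iou(predicted, ground_truth):.3f}")  # overlap 1, union 7
```

Note how punishing the metric is: two equally sized boxes that overlap by a quarter of their area each score only about 0.14.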

But here's where I get skeptical. These are all internal benchmarks or academic datasets. The model card itself says evaluations included "primarily English language prompts." And the 61% accuracy on CT classification? That's across findings, averaged. Which findings? Conditions? Severity? They don't break it down.

The speech thing

Alongside MedGemma 1.5, Google released MedASR, a speech-to-text model fine-tuned for medical dictation. The pitch: doctors speak in jargon, general ASR models choke on it, MedASR doesn't.

The numbers here are actually impressive. Compared to Whisper large-v3, MedASR shows 58% fewer errors on chest X-ray dictations (5.2% vs 12.5% word error rate) and 82% fewer errors on a diverse medical dictation benchmark (5.2% vs 28.2% WER).

That second number is the one that matters. 28.2% WER means more than one error for every four words spoken. For medical transcription, that's dangerous. Getting it down to 5.2% puts it in usable territory.
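If you want to sanity-check those figures, word error rate is the word-level edit distance (substitutions, deletions, insertions) divided by the number of words in the reference transcript, and "X% fewer errors" is the relative drop between two WERs. The sketch below is a plain Python version of both. The dictation sentence is invented for illustration, the lowercase-and-split normalization is an assumption, and none of this is Google's evaluation pipeline.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = word-level edit distance / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # Classic dynamic-programming edit distance, keeping one row at a time.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


def relative_error_reduction(baseline_wer: float, new_wer: float) -> float:
    """Fraction of the baseline's errors that the new model eliminates."""
    return (baseline_wer - new_wer) / baseline_wer


# Invented example: one jargon word split in two costs a substitution plus an insertion.
wer = word_error_rate(
    "no acute cardiopulmonary process",
    "no acute cardio pulmonary process",
)
print(f"example WER: {wer:.2f}")  # 2 edits / 4 reference words = 0.50

# The WER pairs quoted in the article (Whisper large-v3 vs MedASR).
print(f"chest X-ray dictation: {relative_error_reduction(12.5, 5.2):.0%} fewer errors")
print(f"diverse dictation:     {relative_error_reduction(28.2, 5.2):.0%} fewer errors")
```

Plugging in the quoted WERs gives 58% and 82%, so the relative-reduction claims are at least internally consistent with the raw numbers.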

The model pairs with MedGemma, so you can dictate prompts instead of typing them. Whether anyone actually wants to talk to their radiology AI is another question.

They're dangling $100,000

Google launched the MedGemma Impact Challenge on Kaggle, a hackathon with $100k in prizes. Standard developer evangelism, but it suggests they want adoption numbers to show for this.

Real deployments, apparently

Two case studies worth noting. Malaysia's Ministry of Health is using a MedGemma-powered tool called askCPG from Qmed Asia to navigate 150+ clinical practice guidelines. And Taiwan's National Health Insurance Administration used MedGemma to analyze 30,000 pathology reports for lung cancer surgical decisions.

The Taiwan deployment is the more interesting one. That's policy-level analysis, not clinical point-of-care. Using an AI model to inform surgical guidelines is a different risk profile than using it to diagnose individual patients.

What's still missing

The 1.5 release is only the 4B model. If you want more parameters, you're stuck on MedGemma 1 27B for text-heavy applications. No multimodal 27B with the new capabilities yet.

Multi-turn conversations still aren't supported. Multi-image inputs beyond the longitudinal chest X-ray use case remain unvalidated. And Google keeps repeating that outputs "require independent verification and clinical correlation." Translation: don't sue us.

The model ran into a bug last July where a missing end-of-image token was quietly degrading multimodal performance. They fixed it. Makes you wonder what else might be lurking.

The real test

MedGemma has millions of downloads and hundreds of community variants on Hugging Face. Academic papers cite it favorably against comparable models. But the gap between "performs well on benchmarks" and "changes clinical outcomes" remains wide.

The 4B size is small enough to run offline, which matters for privacy-sensitive healthcare environments. And the open weights mean institutions can fine-tune on their own data without sending PHI through Google's cloud.

Whether radiologists want AI reading their CT scans at 61% accuracy is a different conversation.