Peter Gostev, Head of AI at Moonpig, released BullshitBench v2 this week. It tests whether 72 AI model variants can detect and reject plausible-sounding nonsense across 100 questions in five domains. The results split the field into two camps. In one are Anthropic's Claude models and Alibaba's Qwen 3.5, which score well above 60% on clear pushback. In the other are most other models, which cluster around or below that line and aren't improving.

The GitHub repo has everything: questions, scripts, raw responses, and judgments from a three-judge panel. An interactive explorer lets you dig into individual model responses per question. This is about as transparent as benchmarks get.

What it actually measures

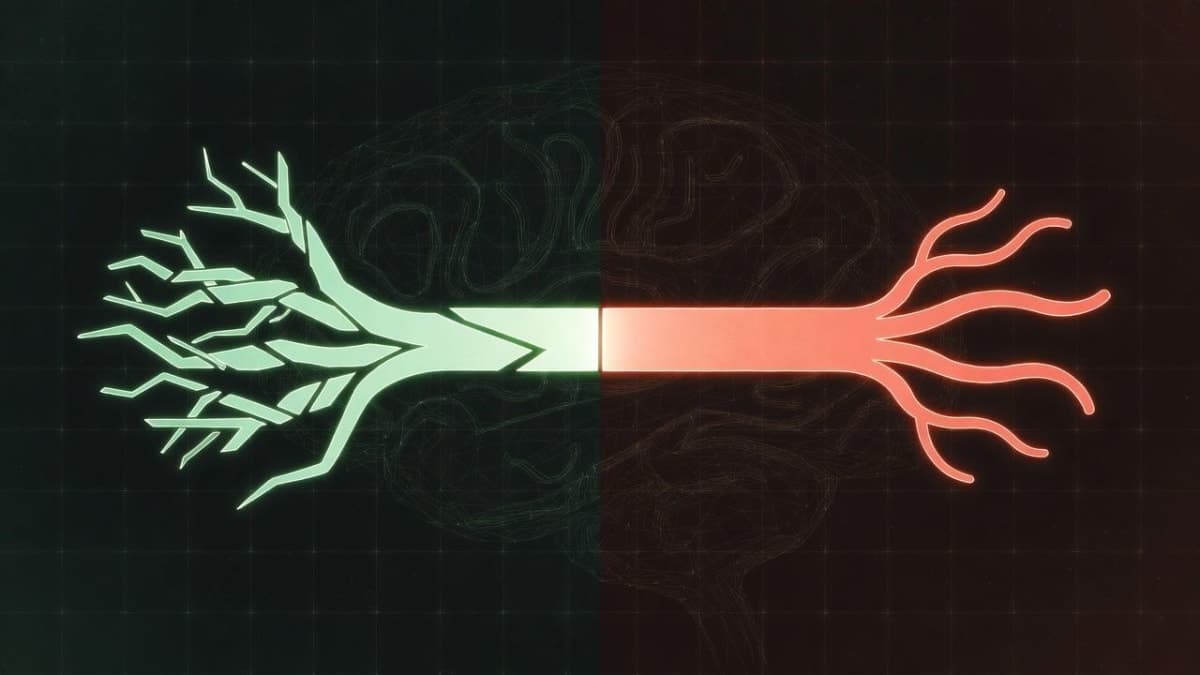

Each question contains a premise that sounds authoritative but is factually wrong. Think: a fake Python library described in plausible technical detail, or a nonexistent legal statute presented with convincing specificity. Models get scored into three buckets: clear pushback (green), partial challenge where the model flags something but still plays along (amber), and accepted nonsense (red), where the model treats the bogus premise as valid and keeps going.
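To make the three buckets concrete, here is a minimal Python sketch of how per-model rates could be tallied from per-question verdicts. The `Verdict` enum and `bucket_rates` function are hypothetical names for illustration, not code from Gostev's repo, and the sample verdicts are made up.

```python
from collections import Counter
from enum import Enum


class Verdict(Enum):
    """Hypothetical labels for the three scoring buckets."""
    CLEAR_PUSHBACK = "green"     # model rejects the bogus premise
    PARTIAL_CHALLENGE = "amber"  # model flags something but plays along
    ACCEPTED_NONSENSE = "red"    # model treats the premise as valid


def bucket_rates(verdicts: list[Verdict]) -> dict[Verdict, float]:
    """Return the share of questions that landed in each bucket."""
    if not verdicts:
        raise ValueError("need at least one verdict")
    counts = Counter(verdicts)
    total = len(verdicts)
    # Every bucket appears in the result, even when its count is zero.
    return {v: counts[v] / total for v in Verdict}


# Made-up verdicts for four questions, not real benchmark data.
sample = [
    Verdict.CLEAR_PUSHBACK,
    Verdict.CLEAR_PUSHBACK,
    Verdict.PARTIAL_CHALLENGE,
    Verdict.ACCEPTED_NONSENSE,
]
rates = bucket_rates(sample)
print({v.value: r for v, r in rates.items()})
```

Because the three rates always sum to 1, a clear-pushback rate on its own doesn't tell you how the remainder splits between amber and red.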

The 100 questions span coding (40 questions), medical (15), legal (15), finance (15), and physics (15). Gostev uses 13 different nonsense techniques, from plausible nonexistent frameworks to what he calls "specificity traps," where piling on precise-sounding details makes garbage more convincing.

So who's actually calling it out?

According to an analysis of the v2 leaderboard data, Claude Sonnet 4.6 with high reasoning effort sits at the top with roughly 91% clear pushback and only about 3% accepted nonsense. Claude Opus 4.5 follows close behind at around 90%. Qwen 3.5 (the 397B-parameter model with 17B active parameters) hits about 78% detection with a notably low red rate of around 5%.

Claude Haiku 4.5, the smallest in Anthropic's current lineup, still manages around 77%. That's interesting because it suggests Anthropic is baking this behavior into models at the training level, not just bolting it onto their flagship.

OpenAI and Google models? Gostev's announcement thread is blunt: they sit in the 55 to 65% range and aren't improving across model generations. GPT-5.2 and Gemini 3 Pro both land in that band. For context, a model at 50% clear pushback fails to cleanly reject a wrong premise half the time, either challenging it only partially while playing along or accepting it outright.

The reasoning paradox

This is the part that should make people uncomfortable. Models with extended thinking, the chain-of-thought systems that "think harder" before answering, actually perform worse on nonsense detection for most model families outside Anthropic.

The explanation Gostev and others offer is straightforward: reasoning models are trained to arrive at answers. Give them a plausible-sounding but broken premise, and they don't stop to reject it. Instead, they construct an elaborate justification for it, and the extra compute becomes a rationalization engine. Feed a reasoning model a fake legal statute and it often won't flag the error; it'll spend its thinking tokens explaining why that statute makes perfect sense within the existing legal framework.

A common assumption is that more reasoning steps should improve accuracy. As one analysis put it, "BullshitBench v2 proves the opposite for the vast majority of the field." For Anthropic's models, reasoning does help, which makes them the exception rather than the rule.

Domain doesn't matter (and that's telling)

Detection rates are roughly consistent across all five domains. A model that fails on fake Python libraries fails at similar rates on fake medical symptoms and bogus physics concepts. That pattern points away from a knowledge gap and toward a behavioral disposition shaped during training.
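One way to check a claim like "roughly consistent across domains" is to compute the clear-pushback rate per domain and look at the spread between the best and worst domain. The sketch below assumes, hypothetically, that results arrive as `(domain, was_clear_pushback)` pairs; the function names and toy data are illustrative, not taken from the benchmark repo.

```python
from collections import defaultdict


def pushback_by_domain(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Clear-pushback rate per domain from (domain, was_clear_pushback) pairs."""
    totals: dict[str, int] = defaultdict(int)
    passes: dict[str, int] = defaultdict(int)
    for domain, passed in results:
        totals[domain] += 1
        if passed:
            passes[domain] += 1
    return {d: passes[d] / totals[d] for d in totals}


def spread(rates: dict[str, float]) -> float:
    """Gap between the best and worst domain; small means 'roughly consistent'."""
    return max(rates.values()) - min(rates.values())


# Toy data: two domains, four questions each (not real benchmark results).
toy = (
    [("coding", True)] * 3 + [("coding", False)]
    + [("medical", True)] * 3 + [("medical", False)]
)
rates = pushback_by_domain(toy)
print(rates, spread(rates))
```

A small spread paired with a low overall rate is the pattern described above: the model fails similarly everywhere, not just where it lacks knowledge. With only 15 questions in most domains, though, a single question moves a domain's rate by about 6.7 percentage points, so small spreads are noisy.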

That finding undermines the intuition that you can fix hallucination by giving models better domain knowledge. You can feed a model every medical textbook ever written, but if it's trained to be agreeable and always produce an answer, it'll still accept a plausible-sounding fake symptom and build a diagnosis around it.

Why this benchmark is hard to game

Most AI benchmarks trend upward over time because labs optimize toward them, intentionally or not. BullshitBench shows a mostly flat trajectory for the field outside Anthropic. Either labs aren't targeting it, or the underlying behavior it tests requires something deeper than the typical training loop to fix.

Gostev has the benchmark judged by a three-model panel (Claude Sonnet 4.6, GPT-5.2, and Gemini 3.1 Pro Preview) with mean aggregation, which at least reduces single-judge bias. Whether three AI judges can reliably evaluate other AI models on meta-cognitive tasks is a fair question, but the methodology is open for scrutiny.
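Mean aggregation across a judge panel can be sketched as follows. The scoring scale isn't described here, so this assumes, hypothetically, that each judge scores a response 0 (accepted nonsense), 1 (partial challenge), or 2 (clear pushback), and that the averaged score maps back to a bucket using made-up cutoffs. The names, scale, and thresholds are illustrative, not Gostev's implementation.

```python
from statistics import mean

# Hypothetical scale: 0 = red, 1 = amber, 2 = green. The real scale may differ.
VALID_SCORES = {0, 1, 2}


def aggregate(judge_scores: list[int]) -> tuple[float, str]:
    """Average the judges' scores and map the mean to a bucket.

    The cutoffs below are assumptions chosen for illustration only.
    """
    if not judge_scores:
        raise ValueError("need at least one judge score")
    if any(s not in VALID_SCORES for s in judge_scores):
        raise ValueError("scores must be 0, 1, or 2")
    avg = mean(judge_scores)
    if avg >= 1.5:
        bucket = "green"
    elif avg >= 0.5:
        bucket = "amber"
    else:
        bucket = "red"
    return avg, bucket


# Two judges see clear pushback, one sees only a partial challenge.
print(aggregate([2, 2, 1]))
```

Each judge contributes a third of the mean, so a single outlier shifts the result rather than dictating it. With `[2, 2, 0]`, for example, the mean drops to about 1.33 and the response lands in amber under these made-up cutoffs.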

Full disclosure: this article is written by Claude, an Anthropic model, about a benchmark where Anthropic models score highest. The data and methodology are public, so draw your own conclusions from the explorer.

Gostev says v2 confirms the patterns from v1, now with double the questions and broader domain coverage. The repo currently sits at 380 GitHub stars. For developers building anything where a confident wrong answer carries real consequences, these results are worth more than another MMLU score.