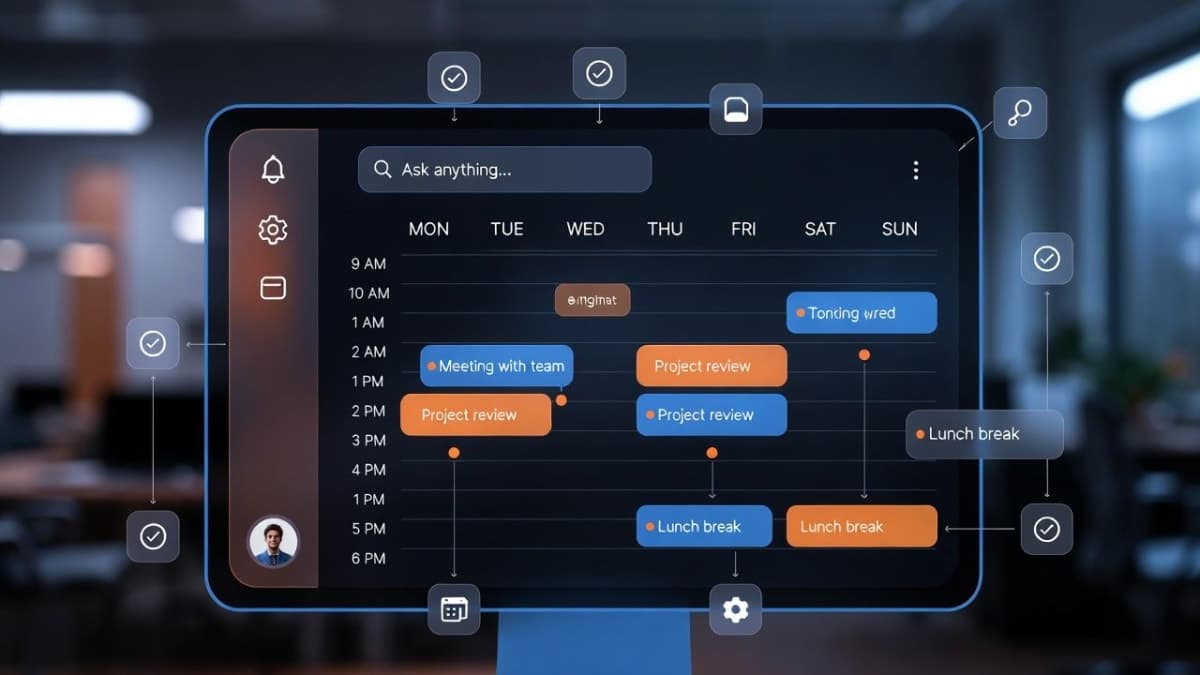

Anthropic shipped an update to its skill-creator tool on March 3rd that adds automated testing, benchmarking, and A/B comparisons to the process of building Agent Skills for Claude. The target audience: subject matter experts who know their workflows but have never written a unit test in their lives.

Agent Skills, which Anthropic launched last October, are essentially instruction folders that Claude loads on demand to get better at specific tasks. Think of them as reusable prompt engineering, packaged neatly. The problem Anthropic is trying to solve is straightforward: most skill authors have no way to know whether their skill actually works, whether it still works after a model update, or whether their latest edit made things better or worse.

What evals actually do here

The core addition is evals, which function like automated tests. You define test prompts, describe what a good result looks like, and skill-creator tells you if the skill passes. If you've written software tests, the workflow will feel familiar. If you haven't, that's the point.
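To make that concrete, here is a minimal Python sketch of what an eval comes down to: a prompt, a check on the output, and a pass/fail tally. It does not reproduce skill-creator's actual eval format. `EvalCase`, `run_evals`, and `fake_skill` are hypothetical names, and the "skill" is a stub so the example runs offline. A real harness would send each prompt to Claude with the skill loaded, and its checks could be much richer than a substring test.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One test: a prompt plus a check describing what a good result looks like."""
    name: str
    prompt: str
    check: Callable[[str], bool]  # True if the output counts as a pass


def run_evals(run_skill: Callable[[str], str], cases: list[EvalCase]) -> dict[str, bool]:
    """Send each test prompt through the skill and record pass/fail per case."""
    return {case.name: case.check(run_skill(case.prompt)) for case in cases}


def fake_skill(prompt: str) -> str:
    """Hypothetical stub for 'Claude with the skill loaded'; a real harness calls the model."""
    if "invoice" in prompt:
        return "Total: $120.00"
    return "I'm not sure."


cases = [
    EvalCase("invoice_total", "Summarize this invoice", lambda out: "Total:" in out),
    EvalCase("receipt_total", "Summarize this receipt", lambda out: "Total:" in out),
]

results = run_evals(fake_skill, cases)
pass_rate = sum(results.values()) / len(results)
print(results, f"pass rate: {pass_rate:.0%}")
```

The failing `receipt_total` case is the useful part. A per-case result points at what broke instead of just lowering an overall score.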

Anthropic walked through one concrete example worth paying attention to. Their own PDF skill was failing on non-fillable forms because Claude couldn't place text at exact coordinates without defined form fields. Evals isolated the specific failure, and the fix anchored positioning to extracted text coordinates. Before the fix, the pass rate on tasks like filling non-fillable forms and extracting tables from multi-page documents sat at 40%. After: 100%. Same execution time, according to Anthropic's numbers. I haven't verified those independently, so take them with the usual grain of salt you'd apply to any vendor's internal benchmarks.

Skill-creator also adds a benchmark mode that tracks pass rates, elapsed time, and token usage across runs. It also includes a comparator agent that runs blind A/B tests between two skill versions (or skill vs. no skill), judging the outputs without knowing which version produced which.
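This is not skill-creator's implementation. The Python sketch below only illustrates the two ideas: averaging per-run metrics, and blinding a pairwise comparison by randomizing which output the judge sees first. `RunRecord`, `summarize`, `blind_compare`, and the length-based `prefer_longer` judge are all hypothetical. The real comparator is an agent, not a string-length heuristic.

```python
import random
import statistics
from dataclasses import dataclass
from typing import Callable


@dataclass
class RunRecord:
    passed: bool
    elapsed_s: float
    tokens: int


def summarize(runs: list[RunRecord]) -> dict[str, float]:
    """Aggregate pass rate, elapsed time, and token usage across benchmark runs."""
    return {
        "pass_rate": sum(r.passed for r in runs) / len(runs),
        "mean_elapsed_s": statistics.mean(r.elapsed_s for r in runs),
        "mean_tokens": statistics.mean(r.tokens for r in runs),
    }


def blind_compare(output_a: str, output_b: str,
                  judge: Callable[[str, str], int], rng: random.Random) -> str:
    """Present two outputs in random order so the judge can't tell which version made which."""
    pair = [("A", output_a), ("B", output_b)]
    rng.shuffle(pair)  # hide the version labels behind a random ordering
    picked = judge(pair[0][1], pair[1][1])  # judge sees only the text; returns 0 or 1
    return pair[picked][0]  # unblind: map the chosen position back to its version


def prefer_longer(first: str, second: str) -> int:
    """Hypothetical judge (a real comparator would be a model): prefers the longer output."""
    return 0 if len(first) >= len(second) else 1


runs = [RunRecord(True, 2.0, 1000), RunRecord(False, 4.0, 1400)]
print(summarize(runs))

winner = blind_compare("short", "a much longer answer", prefer_longer, random.Random(0))
print(winner)
```

The judge only sees two unlabeled outputs, so it can't favor a version by name. Shuffling the order also keeps any position preference from consistently helping one side.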

The more interesting taxonomy

Anthropic breaks skills into two categories, and I think this distinction matters more than the testing features themselves.

First: capability uplift skills. These help Claude do things the base model can't do consistently. Anthropic's document-creation skills are the canonical example. They encode techniques and patterns that produce better output than prompting alone.

Second: encoded preference skills. These sequence Claude's existing abilities according to your team's specific workflow. An NDA review skill that checks against set criteria. A weekly update skill that pulls from multiple data sources. Claude can already do each piece; the skill just tells it what order to do them in and what to look for.

This is where the distinction pays off. Capability uplift skills have an expiration date: as models improve, the base model starts doing on its own what the skill taught it to do. Running the same evals with and without the skill tells you when that has happened, so you can stop maintaining dead code. Anthropic says this plainly in their blog post, which is a refreshingly honest admission that some of the tooling they're building today may make itself obsolete.
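The "has the base model caught up?" check comes down to running one eval suite with and without the skill and watching the gap. That is the skill vs. no skill comparison mentioned earlier. Here is a minimal Python sketch. It assumes you already have per-case pass/fail results for both configurations. The function names and the 5-point `min_gain` threshold are illustrative choices, not anything Anthropic specifies.

```python
def pass_rate(results: list[bool]) -> float:
    """Fraction of eval cases that passed."""
    return sum(results) / len(results)


def uplift(with_skill: list[bool], without_skill: list[bool]) -> float:
    """Pass-rate gain the skill provides over the bare model on the same eval cases."""
    if not with_skill or len(with_skill) != len(without_skill):
        raise ValueError("both runs must cover the same non-empty set of cases")
    return pass_rate(with_skill) - pass_rate(without_skill)


def should_retire(with_skill: list[bool], without_skill: list[bool],
                  min_gain: float = 0.05) -> bool:
    """Flag a capability-uplift skill once the bare model comes within min_gain of it."""
    return uplift(with_skill, without_skill) < min_gain


# Hypothetical results for the same five eval cases on two model generations.
older_model = should_retire([True] * 5, [True, False, False, True, False])
newer_model = should_retire([True] * 5, [True] * 5)
print(older_model, newer_model)  # False True
```

With only five cases the gap is noisy. In practice you'd want a larger suite or repeated runs before retiring a skill on the strength of one comparison.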

Does the triggering fix matter?

Claude decides when to load a skill based entirely on a short text description in the system prompt. Too broad and you get false triggers. Too narrow and the skill never fires. As your skill library grows, this becomes a real problem.

Skill-creator now analyzes descriptions against sample prompts and suggests edits. Anthropic ran it across their document-creation skills and saw improved triggering on 5 of 6 public skills. They didn't specify what "improved" means quantitatively, and the one skill that didn't improve goes unexamined. I'd want more detail before getting excited about this feature.
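Anthropic hasn't detailed how skill-creator's description analysis works, but the measurement underneath it can be sketched. You score a trigger rule against sample prompts labeled with whether the skill should load, then count false triggers (too broad) and misses (too narrow). In this hypothetical Python sketch, a keyword overlap stands in for Claude's decision. In reality that decision is a model judgment over the description, not a keyword match. The NDA prompts and keyword sets are invented for illustration.

```python
from typing import Callable


def trigger_report(fires: Callable[[str], bool],
                   samples: list[tuple[str, bool]]) -> dict[str, int]:
    """Score a trigger rule against prompts labeled with whether the skill should load."""
    report = {"hits": 0, "false_triggers": 0, "misses": 0, "correct_skips": 0}
    for prompt, should_fire in samples:
        fired = fires(prompt)
        if fired and should_fire:
            report["hits"] += 1
        elif fired:
            report["false_triggers"] += 1  # description too broad
        elif should_fire:
            report["misses"] += 1  # description too narrow
        else:
            report["correct_skips"] += 1
    return report


def keyword_rule(keywords: set[str]) -> Callable[[str], bool]:
    """Toy stand-in for Claude's judgment: fire if the prompt shares any keyword."""
    def fires(prompt: str) -> bool:
        return bool(keywords & set(prompt.lower().split()))
    return fires


samples = [
    ("Review this NDA for non-compete terms", True),
    ("Check the confidentiality clause in this contract", True),
    ("Write a poem about contract law", False),
    ("Review this restaurant menu", False),
]

rules = {
    "narrow": {"nda"},
    "broad": {"nda", "contract", "review"},
    "tuned": {"nda", "confidentiality"},
}
reports = {name: trigger_report(keyword_rule(kw), samples) for name, kw in rules.items()}
for name, report in reports.items():
    print(name, report)
```

The "broad" rule catches every NDA request but also fires on a poem and a restaurant menu. The "narrow" one misses the contract question. Tuning a description is a search for the version with no false triggers and no misses across a representative prompt set.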

Multi-agent support

Running evals sequentially is slow, and context can bleed between test runs. Skill-creator now spins up independent agents to run evals in parallel, each with its own clean context and its own token metrics. This infrastructure detail matters if you're running dozens of test cases, but it won't mean much to someone testing a single skill.
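The isolation idea can be sketched with Python's standard `concurrent.futures`. Each eval builds its own message list rather than appending to a shared conversation. This illustrates the general approach and is not how skill-creator actually spawns agents. `fake_model` is a hypothetical stand-in for a real model call, and the word count stands in for a real tokenizer.

```python
from concurrent.futures import ThreadPoolExecutor


def fake_model(context: list[dict]) -> str:
    """Hypothetical stand-in for a model call; a real one would hit an API."""
    return f"echo: {context[-1]['content']}"


def run_isolated(prompt: str) -> dict:
    """Run one eval in a brand-new context, so no other test's history can leak in."""
    context = [{"role": "user", "content": prompt}]  # fresh list per run
    context.append({"role": "assistant", "content": fake_model(context)})
    # Crude word count as a placeholder for real per-run token metrics.
    tokens = sum(len(m["content"].split()) for m in context)
    return {"prompt": prompt, "context_len": len(context), "tokens": tokens}


def run_shared(prompts: list[str]) -> list[int]:
    """The failure mode being avoided: one conversation accumulates every test's history."""
    context: list[dict] = []
    lengths = []
    for prompt in prompts:
        context.append({"role": "user", "content": prompt})
        context.append({"role": "assistant", "content": fake_model(context)})
        lengths.append(len(context))
    return lengths


prompts = ["fill this form", "extract the tables", "merge these pdfs"]

# Threads suit I/O-bound work like waiting on model API responses.
with ThreadPoolExecutor(max_workers=len(prompts)) as pool:
    isolated = list(pool.map(run_isolated, prompts))  # map preserves input order

print([r["context_len"] for r in isolated])  # [2, 2, 2]
print(run_shared(prompts))  # [2, 4, 6]
```

`run_shared` shows the bleed problem. By the third test, the model would be reading two earlier tests' prompts and replies alongside its own.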

The philosophical bit

Anthropic's blog post ends with an observation that caught my attention: as models improve, the line between a "skill" and a "specification" may blur. Today's SKILL.md file tells Claude how to do something. Eventually, describing what you want might be enough. The eval framework they released already describes the "what." Their suggestion is that evals might eventually become the skill itself.

That is a genuinely interesting idea, and it maps onto a broader question about whether the current wave of agent tooling is building durable infrastructure or temporary scaffolding. If your eval suite defines what success looks like, and the model can figure out how to get there without step-by-step instructions, then the instructions file is just training wheels.

But we are not there yet. Not close. Anthropic's own PDF example shows why: even with a skill loaded, Claude failed on non-fillable forms until the skill spelled out an explicit coordinate-anchoring technique. The gap between "describe what you want" and "get what you want" is still wide enough that skills will be around for a while.

All updates are live on Claude.ai and Cowork. Claude Code users can grab the plugin or download directly from the skills repo.